If your production workloads rely on AWS Middle East (Central), the recent region-wide outage exposes the real-world risks of region-dependent cloud architectures—especially in areas with complex geopolitical environments. Although online speculation suggested conflict as a cause, there is no official confirmation from AWS or recognized independent sources about the outage’s root cause. What is confirmed: the AWS Health Dashboard reported significant service disruptions in the region on March 1, 2026, but did not detail which services were impacted or for how long. This post breaks down what’s known, how to verify and respond when regional outages occur, and the steps you should take to build more resilient AWS deployments.

Key Takeaways:

- The AWS Middle East (Central) region experienced a significant outage on March 1, 2026, with multiple services disrupted. AWS has not confirmed the root cause or the specific services affected.

- Practitioners must rely on official sources like the AWS Health Dashboard for incident confirmation and refrain from acting on unverified online rumors.

- Resilient cloud architectures—incorporating cross-region replication, disaster recovery, and regular failover testing—are critical, especially in regions with elevated geopolitical and infrastructure risk.

- It is essential to review incident response procedures and validate monitoring coverage using both AWS tools and third-party platforms.

AWS Middle East (Central) Outage: What We Know

On March 1, 2026, the AWS Health Dashboard reported significant disruptions in the Middle East (Central) region. The official dashboard confirms that multiple AWS services experienced interruptions, but it does not specify which services (for example, EC2, S3, Lambda, or RDS) were impacted, nor does it indicate the precise duration of the event. No technical postmortem or root cause analysis has been published by AWS or independent agencies to date.

Online discussions—including a Hacker News thread—referenced the possibility of conflict as a cause, but these claims remain unsubstantiated. There is no evidence from AWS or recognized industry monitoring sources supporting such attribution. According to Cloudflare Radar Insights, the region has seen a growing number of internet disruptions due to government actions, infrastructure failures, and weather-driven events, but there is no direct link between these trends and this specific AWS incident.

- AWS Health Dashboard confirms disruptions in the Middle East (Central) region on March 1, 2026.

- No official information is available regarding the exact cause, duration, or specific services affected.

- Rumors about war or conflict as the root cause are not supported by AWS or independent monitoring organizations.

The industry trend of rising regional internet and cloud disruptions is real, but practitioners should focus on actionable evidence and always prioritize official sources for incident response.

Impact Assessment and Service Verification

When a regional AWS outage occurs, your first job is to determine whether and how your workloads are affected. The AWS Health Dashboard provides region-level status, but does not always break down service-by-service or account-specific impact. Here’s how to proceed:

- Check the AWS Health Dashboard for real-time updates and region status. Confirm if your region is listed as degraded or unavailable.

- Sign in to the AWS Management Console. Use the region selector to switch to Middle East (Central) and attempt to access your critical resources. Be aware that the exact UI elements and service health visibility may vary depending on your permissions and the AWS Console version.

- Use third-party monitoring solutions, such as geographically distributed uptime probes, to validate whether endpoints are reachable from outside AWS. This step can help identify user-facing issues that AWS’s own dashboards may not surface during a major regional incident.

- Correlate AWS dashboard findings with your own metrics, logs, and internal incident channels for a full picture of affected business processes.
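The dashboard check in the first step can also be scripted. What follows is a minimal sketch rather than an official procedure. It assumes `boto3` is installed, credentials are configured, and the account has a support plan that includes AWS Health API access (Business or higher). The helper names `fetch_open_events` and `summarize_by_service` are hypothetical, and you supply the region code yourself.

```python
from collections import defaultdict


def fetch_open_events(region_code):
    """Return open AWS Health events for one region (needs boto3 + credentials)."""
    import boto3  # imported lazily so the summarizer below works without it

    # The AWS Health API is served from a global endpoint in us-east-1.
    health = boto3.client("health", region_name="us-east-1")
    paginator = health.get_paginator("describe_events")
    events = []
    for page in paginator.paginate(
        filter={"regions": [region_code], "eventStatusCodes": ["open"]}
    ):
        events.extend(page["events"])
    return events


def summarize_by_service(events):
    """Group event type codes by the service each event refers to."""
    summary = defaultdict(list)
    for event in events:
        service = event.get("service", "UNKNOWN")
        summary[service].append(event.get("eventTypeCode", "UNKNOWN"))
    return {service: sorted(codes) for service, codes in summary.items()}
```

Calling `summarize_by_service(fetch_open_events(region_code))` gives a per-service view that the public dashboard may not break out, which you can then compare against your own metrics and logs.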

Example: High-Level AWS Console Verification Workflow

1. Go to https://aws.amazon.com/console/

2. Sign in with your AWS account.

3. Select 'Middle East (Central)' from the region dropdown.

4. Attempt to access resources (EC2, S3, etc.) as needed.

Note: The AWS Console interface and available widgets (including service health) may change. Always refer to the official documentation for UI navigation: https://aws.amazon.com/console/

Do not rely solely on the AWS Console for confirmation, as console access itself can be degraded during a regional outage. Pair AWS dashboard checks with external monitoring for a more accurate assessment of real-world impact.
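An external check can start as a plain HTTP probe run from a machine outside AWS. The sketch below uses only the Python standard library. The endpoint URLs are hypothetical placeholders, and a realistic setup would run the same probe from several geographic locations.

```python
import urllib.error
import urllib.request

# Hypothetical user-facing endpoints; replace with your own.
ENDPOINTS = [
    "https://app.example.com/health",
    "https://api.example.com/health",
]


def probe(url, timeout=5.0):
    """Return (reachable, detail) for a single HTTP GET against url."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return True, f"HTTP {response.status}"
    except urllib.error.HTTPError as exc:  # server answered with 4xx/5xx
        return False, f"HTTP {exc.code}"
    except OSError as exc:  # DNS failure, refused connection, timeout
        return False, f"unreachable: {exc}"


def run_probes(endpoints):
    """Probe each endpoint once and return (endpoint, reachable) pairs."""
    return [(url, probe(url)[0]) for url in endpoints]


def assess(results):
    """Classify a list of (endpoint, reachable) probe outcomes."""
    if not results:
        return "no-data"
    failed = [endpoint for endpoint, reachable in results if not reachable]
    if not failed:
        return "healthy"
    if len(failed) == len(results):
        return "outage"
    return "degraded"
```

`assess(run_probes(ENDPOINTS))` returns a single status string. Treat "no-data" as a problem with the prober itself, not as a sign that the endpoints are healthy.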

Operational Response and Failover Patterns

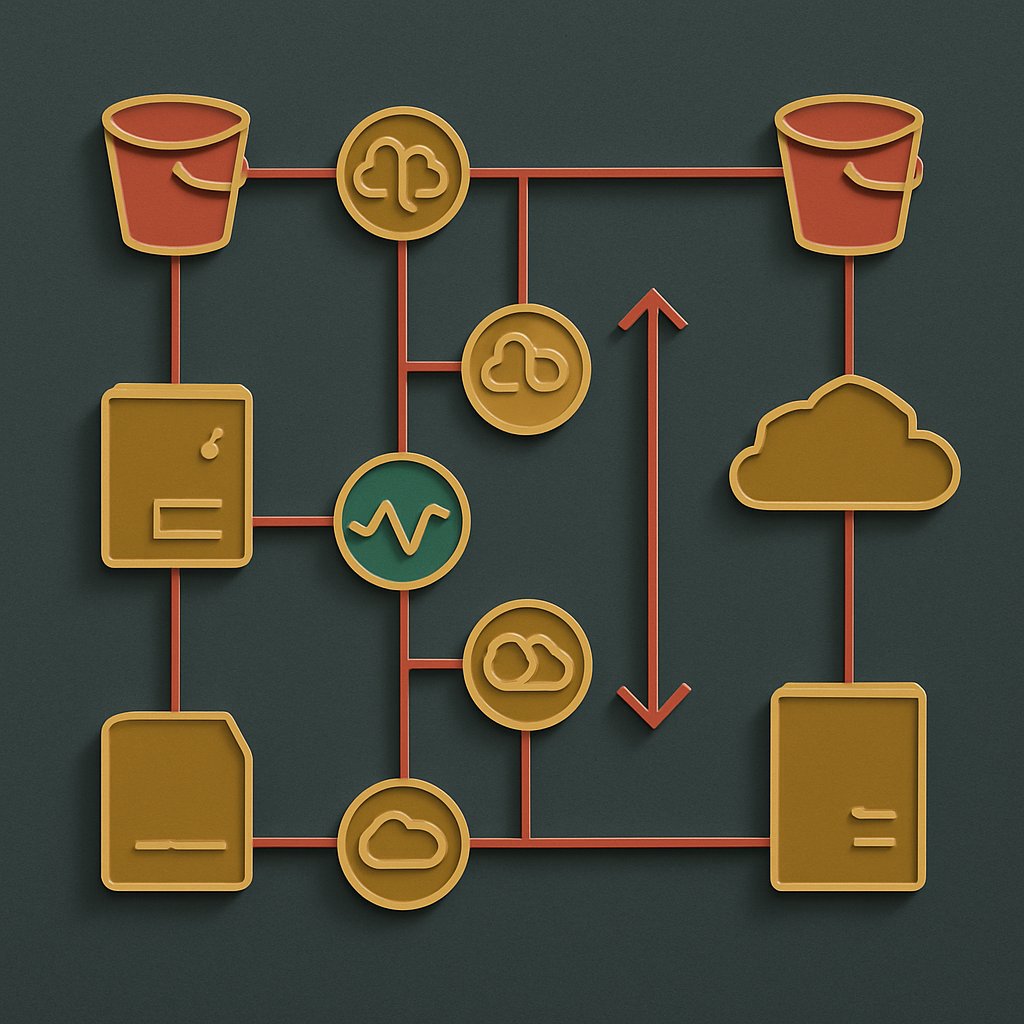

Region-wide outages test your disaster recovery (DR), business continuity, and multi-region deployment strategies. The best-prepared organizations use automation to rapidly fail over workloads or data to other regions. Here’s how practitioners implement these patterns on AWS:

Multi-Region DR Patterns

- Active-Active: Run production workloads in two or more AWS regions, distributing traffic via global DNS (such as Route 53 with health checks). If one region fails, the other(s) absorb the load with minimal user disruption.

- Active-Passive: Maintain a “pilot light” infrastructure in a secondary region. When the primary region fails, scale up and redirect traffic to the backup.

- Backup and Restore: Use cross-region replication for data (such as S3 buckets) and regular infrastructure snapshots, enabling you to restore critical assets even if the secondary region is not running live workloads.

Example: S3 Cross-Region Replication Configuration (structure per AWS documentation)

    {
      "ReplicationConfiguration": {
        "Role": "arn:aws:iam::account-id:role/replication-role",
        "Rules": [
          {
            "Status": "Enabled",
            "Prefix": "",
            "Destination": {
              "Bucket": "arn:aws:s3:::destination-bucket"
            }
          }
        ]
      }
    }

This replication configuration enables automated object replication from a source S3 bucket in Middle East (Central) to a destination bucket in another region. Replication requires versioning on both buckets and, by default, applies only to objects written after it is enabled.
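The `Role` and `Rules` structure above maps directly onto the S3 API's replication configuration. As a hedged sketch, assuming `boto3`, configured credentials, and placeholder bucket and role names, it could be applied as follows. `build_replication_config` and `apply_replication` are hypothetical helper names. The code checks the source bucket's versioning status and raises instead of enabling it silently.

```python
def build_replication_config(role_arn, destination_bucket_arn, prefix=""):
    """Build the Role/Rules structure expected by put_bucket_replication."""
    return {
        "Role": role_arn,
        "Rules": [
            {
                "Status": "Enabled",
                "Prefix": prefix,  # empty prefix matches every object key
                "Destination": {"Bucket": destination_bucket_arn},
            }
        ],
    }


def apply_replication(source_bucket, config):
    """Apply config to source_bucket (needs boto3 + credentials)."""
    import boto3  # imported lazily so the builder above works without it

    s3 = boto3.client("s3")
    versioning = s3.get_bucket_versioning(Bucket=source_bucket)
    if versioning.get("Status") != "Enabled":
        raise RuntimeError(f"Enable versioning on {source_bucket} first")
    s3.put_bucket_replication(
        Bucket=source_bucket, ReplicationConfiguration=config
    )
```

Keeping the builder separate from the API call lets you review or unit test the configuration without touching a real bucket.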

Example: Route 53 Health Check Configuration (structure per AWS documentation)

    {
      "CallerReference": "unique-string",
      "HealthCheckConfig": {
        "IPAddress": "203.0.113.1",
        "Port": 80,
        "Type": "HTTP",
        "ResourcePath": "/health",
        "RequestInterval": 30,
        "FailureThreshold": 3
      }
    }

Attach this health check to a Route 53 DNS failover record. If health checks fail, Route 53 routes traffic away from the affected region.
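For the health check to influence routing, a pair of failover records has to reference it. The sketch below only builds the request body. It assumes `boto3` for the eventual API call, and the record name, IP addresses, and health check ID are placeholders. `failover_change_batch` is a hypothetical helper name.

```python
def failover_change_batch(record_name, primary_ip, secondary_ip,
                          health_check_id, ttl=60):
    """Build a Route 53 ChangeBatch with PRIMARY and SECONDARY A records."""

    def change(role, ip, health_check=None):
        record = {
            "Name": record_name,
            "Type": "A",
            "SetIdentifier": f"{role.lower()}-region",
            "Failover": role,  # "PRIMARY" or "SECONDARY"
            "TTL": ttl,
            "ResourceRecords": [{"Value": ip}],
        }
        if health_check is not None:
            record["HealthCheckId"] = health_check
        return {"Action": "UPSERT", "ResourceRecordSet": record}

    return {
        "Comment": "Failover pair: primary region with standby",
        "Changes": [
            change("PRIMARY", primary_ip, health_check_id),
            change("SECONDARY", secondary_ip),
        ],
    }


# Applying it would look like this (hosted zone ID is a placeholder):
# import boto3
# boto3.client("route53").change_resource_record_sets(
#     HostedZoneId="ZONE_ID_PLACEHOLDER",
#     ChangeBatch=failover_change_batch(
#         "app.example.com.", "203.0.113.1", "198.51.100.1", "HEALTH_CHECK_ID"),
# )
```

A short TTL matters here: resolvers keep serving the primary address until cached answers expire, so the TTL adds to the time failover takes to reach users.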

Operational Best Practices

- Automate failover and recovery using infrastructure-as-code tools (such as CloudFormation or Terraform).

- Conduct live-fire DR drills in addition to tabletop exercises; document lessons learned and update procedures after each exercise.

- Monitor both internal AWS metrics and external user-facing performance from multiple global locations.

- Audit all dependencies—including CI/CD, monitoring, and logging pipelines—to ensure they aren’t single-region points of failure.

Organizations with well-tested, automated DR and failover procedures recover faster and with less business impact than those relying on manual intervention or single-region architectures.
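The dependency audit in the last bullet can begin as a small script run over an inventory you maintain by hand. This is a sketch under assumptions: the inventory contents and the region codes are hypothetical placeholders, and `single_region_risks` is an illustrative name.

```python
def single_region_risks(inventory, failed_region):
    """Return names of dependencies deployed only in failed_region."""
    return sorted(
        name
        for name, regions in inventory.items()
        if set(regions) == {failed_region}
    )


# Hypothetical inventory: dependency name -> regions where it runs.
INVENTORY = {
    "ci-runners": ["me-central-1"],
    "log-pipeline": ["me-central-1", "eu-west-1"],
    "alerting": ["me-central-1"],
    "status-page": ["eu-west-1"],
}

print(single_region_risks(INVENTORY, "me-central-1"))
# ['alerting', 'ci-runners']
```

Anything the function returns would be completely unavailable during an outage of that region, so those are the first candidates for a second deployment.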

Considerations, Trade-offs, and Alternatives

Major cloud outages underscore the trade-offs of region and provider concentration. Here are key aspects to weigh:

Key Limitations and Challenges

- Regional Concentration Risk: Operating in a single region exposes workloads to technical and non-technical disruptions. Multi-region resilience comes with added cost and complexity.

- Technical Complexity: Multi-region designs require advanced skills and careful coordination—especially for data consistency, security, and integration.

- Data Residency and Compliance: Regulations may limit where data can be replicated, restricting some DR strategies.

- Cost Considerations: Multi-region and multi-cloud approaches can significantly increase infrastructure, operations, and personnel costs.

- Support Load: During wide-scale incidents, access to AWS support may be delayed due to high ticket volume.
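The data residency constraint can be checked mechanically before a replication rule is created. A minimal sketch, assuming you keep your own mapping of replication targets to regions and an allowlist agreed with your compliance team. All bucket names and region codes below are hypothetical.

```python
def disallowed_destinations(replication_targets, allowed_regions):
    """Return the targets whose region is not on the residency allowlist."""
    return {
        bucket: region
        for bucket, region in replication_targets.items()
        if region not in allowed_regions
    }


# Hypothetical planned replication targets: bucket name -> region code.
TARGETS = {
    "orders-replica": "me-south-1",
    "logs-replica": "eu-west-1",
}
# Hypothetical allowlist derived from applicable regulations.
ALLOWED = {"me-central-1", "me-south-1"}

violations = disallowed_destinations(TARGETS, ALLOWED)
```

A non-empty `violations` dict means the disaster recovery design needs a different destination or a legal review before it goes ahead.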

| Provider | Strengths | Limitations |
|---|---|---|
| AWS | Extensive global coverage, broad service catalog, mature partner network | Regional concentration risk, operational complexity for DR, premium pricing |
| Oracle Cloud | Strong in regulated sectors, competitive pricing | Fewer regions, smaller ecosystem |
| Alibaba Cloud | Large Asia-Pacific presence, aggressive pricing | Limited outside APAC, regulatory constraints |

Provider and architecture choices should be based on your business context and resilience requirements. For deeper automation and risk management strategies, see Harnessing the Power of Decision Trees in Automation.

Common Pitfalls and Pro Tips

- Unverified Assumptions: Don’t make crisis decisions based on unofficial reports or rumors. Always consult the AWS Health Dashboard and official communications for incident response.

- Failover Not Tested: Disaster recovery plans often fail due to lack of live testing. Schedule and document regular DR drills.

- Compliance Risks: Review all applicable regulations before replicating or moving data across regions or providers.

- Monitoring Gaps: Internal AWS metrics may not capture all user-facing issues. Deploy third-party probes from outside the AWS network.

- Dependency Blind Spots: Outages can cascade if CI/CD, alerting, or monitoring pipelines are region-constrained. Audit these dependencies for resilience.

For more on automation that reduces operational risk, review decision tree automation strategies and see how tooling impacts incident response in our Ghostty terminal review.

Conclusion and Next Steps

The AWS Middle East (Central) outage highlights the real operational risks of single-region cloud deployments—especially in regions with heightened geopolitical or infrastructure risk. With no official root cause or service-level breakdown published, you must focus on actionable resilience: validate your disaster recovery plans, implement cross-region replication for critical data, and rely on authoritative sources like the AWS Health Dashboard for incident updates.

Continuously improve your automation, monitoring, and failover strategies. For more on resilient automation, read Harnessing the Power of Decision Trees in Automation. Stay updated with our Ghostty terminal review and minimal GPT implementation analysis for the latest production tooling insights.

Monitor service health, validate your architecture, and ensure your incident response is rooted in verified, up-to-date information to keep your cloud strategy robust and adaptive.