Fault-Tolerant Computer Systems in Spacecraft: Lessons for Developers

Why Artemis II’s fault-tolerant computer matters right now

Artemis II launched on April 1, 2026, putting humans on a trajectory around the Moon for the first time since 1972. That date matters because it’s not just a milestone for spaceflight—it’s a forcing function for a kind of engineering discipline the software world has been drifting away from: designing systems that keep working when components fail.

NASA’s own public mission updates and coverage around Artemis II emphasize continuous spacecraft operations, autonomous sequences, and the reality that deep-space systems must run reliably in high-radiation conditions where human intervention is limited. A widely circulated write-up, “How NASA Built Artemis II’s Fault-Tolerant Computer” (Communications of the ACM), frames the key challenge plainly: the onboard computer is designed to withstand radiation and hardware failures while keeping mission-critical operations functioning.

For developers with 1–5 years of experience, Artemis II is a reminder that “fault tolerance” isn’t a buzzword. It’s an architecture choice that shows up in concrete mechanisms: redundancy, error detection, autonomous response, and real-time monitoring—concepts also highlighted in industry coverage of Artemis II’s automation systems, including radiation detectors and rad-hardened electronics used to keep spacecraft systems operating under extreme conditions (Automation News, April 4, 2026).

And yes—this has market implications. The same design instincts behind deep-space fault tolerance (determinism, redundancy, observability, conservative change management) are increasingly relevant again as AI-driven coding, faster release cycles, and “move fast” culture collide with safety-critical domains (aerospace, automotive, medical, energy). Artemis II is a high-profile counterexample: reliability is the product.

Key Takeaways:

- Artemis II is a live demonstration of “keep operating despite faults” engineering under deep-space constraints.

- The core ideas—redundancy, cross-checking, health monitoring, autonomous response—map directly to production software patterns.

- Developers can borrow these patterns today for services that must survive bad deploys, partial outages, and data corruption.

What “fault-tolerant computer” means on a crewed deep-space mission

In web services, faults often mean: a node dies, a region blips, a dependency times out. In deep space, faults include those plus an extra category that’s brutal for digital systems: radiation-induced errors. Automation-focused reporting on Artemis II explicitly calls out high-radiation conditions and the use of radiation detectors that continuously measure cosmic rays and solar particle events, with data processed in real time for protective measures.

So when NASA talks about a “fault-tolerant computer system” for Artemis II (as summarized in the widely syndicated coverage of the CACM piece), it’s not just about having backups. It’s about building a computing system that can:

- Detect that something is wrong (bad data, stuck task, unexpected state, corrupted memory, sensor anomaly).

- Isolate the fault so it doesn’t cascade (containment boundaries, redundancy lanes, safe modes).

- Recover without waiting on the ground (autonomous fallback logic, reconfiguration, switching to redundant components).

- Continue mission-critical functions even if non-critical subsystems degrade.

That same “keep the critical path alive” thinking is how you should design production systems that handle money, identity, safety, or compliance. The difference is that NASA’s failure budget is basically zero when humans are onboard.

From Apollo’s 1 MHz computer to Artemis II’s redundancy-first design

The CACM coverage uses a striking comparison to anchor just how far spacecraft computing has come: Apollo astronauts navigated using a computer with a 1 MHz processor and about 4 kilobytes of erasable memory, plus fixed “rope” memory. Those numbers are memorable because they’re smaller than what you’ll find in a trivial embedded device today—and yet Apollo succeeded because the system was designed for the mission, the operators were trained for its constraints, and the software/hardware were engineered for predictability.

Artemis II’s context is different. The Orion spacecraft is described in automation coverage as a highly automated deep-space crew vehicle, with onboard electronics, embedded computers, and sensors feeding automated systems that manage navigation, detect hazards, and protect crew and electronics. That’s a broader surface area than Apollo had: more sensors, more software, more autonomy, and more ways to fail.

So the “modern” part of Artemis II’s fault tolerance is not “faster CPU.” It’s the strategy:

- Redundant components so single failures don’t end the mission.

- Advanced error detection so faults are caught early (and ideally corrected before they become visible at the mission level).

- Real-time environmental monitoring (including radiation detection) feeding automated protective measures.

This is also why comparisons to “agile iteration” culture are tricky in safety-critical systems. You can iterate, but the architecture has to preserve invariants: no single bug or single hardware fault should take down the safety path.

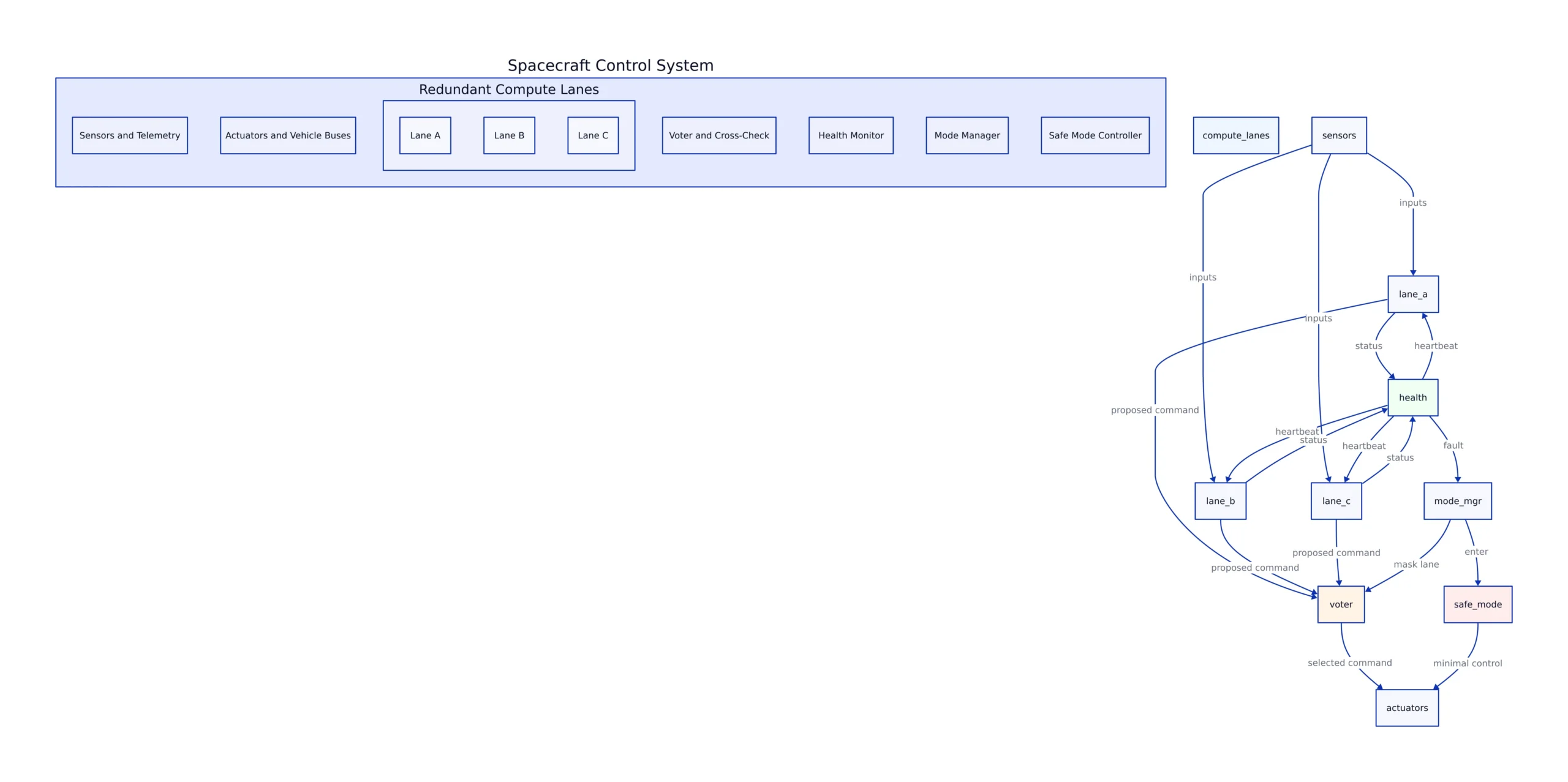

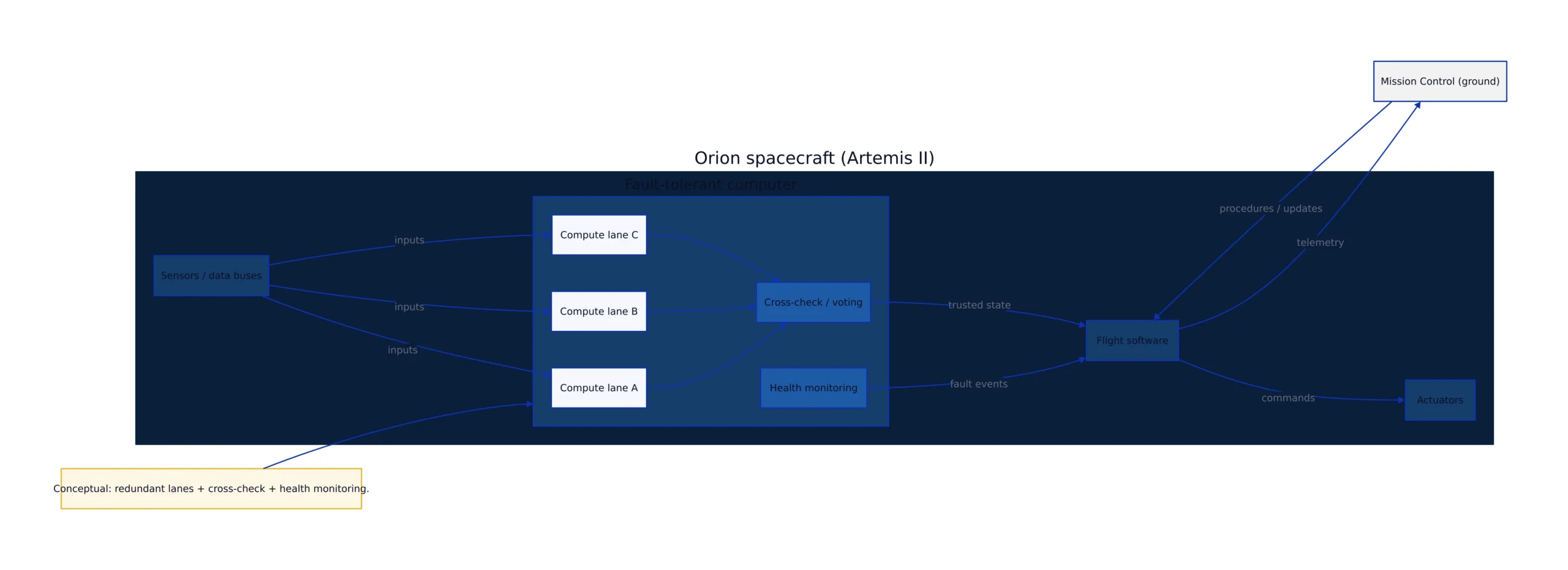

A conceptual architecture: redundant compute lanes, cross-checking, and health monitoring

NASA and its partners don’t publish every internal implementation detail of flight computers, and that’s fine. What matters for developers is the pattern that keeps showing up across safety-critical computing: redundant lanes + cross-checking + health monitoring + controlled failover. The CACM summary and Artemis II automation coverage both point to redundancy and advanced error detection as core strategies.

Here’s a conceptual diagram of what that looks like at a system level—useful as a mental model for both embedded systems and distributed services.

In software terms, this is the difference between:

- “We have retries” (often just amplifies failures), and

- “We have an alternate execution path” (a separate lane, separate state, separate assumptions) that can take over.

One more point that’s easy to miss: the ground is still part of the system. NASA’s public mission updates show continuous telemetry and mission operations. Fault tolerance isn’t “no humans.” It’s “the spacecraft can survive long enough for humans to help when needed.”

Developer patterns you can steal: voting, heartbeats, and failover (with runnable code)

NASA-grade systems use redundancy and error detection. You can apply the same principles in everyday software without pretending you’re building a spacecraft. Below are three complete, runnable examples that mirror those ideas.

1) Majority voting to mask a single faulty “lane”

This example simulates three independent “compute lanes” producing a navigation estimate. One lane is occasionally wrong. We choose the majority value—an application-level analogue of cross-checking/voting. (In real systems, you’d use tolerances and plausibility checks, not strict equality.)

#!/usr/bin/env python3

# Python 3.11+

#

# Simulates 3 redundant compute lanes producing a state estimate.

# Expected output: majority voting masks sporadic single-lane faults.

#

# Note: production use should add tolerance windows (floats), time sync, and byzantine fault handling.

import random

from collections import Counter

def lane_estimate(lane_id: str, true_value: int) -> int:

# Inject faults into one lane to simulate a bad component or corrupted computation.

if lane_id == "B" and random.random() < 0.25:

return true_value + random.choice([-7, 9, 13])

return true_value

def majority_vote(values):

counts = Counter(values)

winner, votes = counts.most_common(1)[0]

return winner, votes

def main():

random.seed(7)

true_value = 120 # e.g., simplified "position index"

for t in range(1, 11):

outputs = [

lane_estimate("A", true_value),

lane_estimate("B", true_value),

lane_estimate("C", true_value),

]

chosen, votes = majority_vote(outputs)

print(f"t={t:02d} lanes={outputs} chosen={chosen} votes={votes}")

if __name__ == "__main__":

main()

Why this matters: redundancy without cross-checking is just “more stuff to fail.” The cross-check is what turns redundancy into fault tolerance.

2) Heartbeats + watchdog: detect a hung worker and restart it

Spacecraft systems use health monitoring and autonomous response. In services, a common equivalent is a watchdog that restarts a stuck worker. This script runs a worker thread that sometimes “hangs” (stops heartbeating). The watchdog notices and restarts it.

#!/usr/bin/env python3

# Python 3.11+

#

# Heartbeat + watchdog demo: restart a worker that stops heartbeating.

# Expected output: watchdog logs a restart when heartbeat stalls.

#

# Note: production use should add structured logging, backoff, and crash-loop protection.

import threading

import time

import random

class Heartbeat:

def __init__(self):

self._lock = threading.Lock()

self._last = time.time()

def beat(self):

with self._lock:

self._last = time.time()

def age_seconds(self) -> float:

with self._lock:

return time.time() - self._last

def worker(stop_event: threading.Event, hb: Heartbeat, name: str):

random.seed(42)

while not stop_event.is_set():

# do work

time.sleep(0.2)

hb.beat()

# simulate a hang

if random.random() < 0.08:

print(f"[{name}] simulated hang (no heartbeats)...")

time.sleep(2.5)

def main():

hb = Heartbeat()

worker_stop = threading.Event()

worker_thread = None

worker_id = 0

def start_worker():

nonlocal worker_thread, worker_stop, worker_id

worker_id += 1

worker_stop = threading.Event()

worker_thread = threading.Thread(

target=worker,

args=(worker_stop, hb, f"worker-{worker_id}"),

daemon=True,

)

worker_thread.start()

print(f"[watchdog] started worker-{worker_id}")

start_worker()

timeout = 1.0

end = time.time() + 6.0

while time.time() < end:

time.sleep(0.1)

if hb.age_seconds() > timeout:

print(f"[watchdog] heartbeat stale ({hb.age_seconds():.2f}s). restarting...")

worker_stop.set()

time.sleep(0.1)

start_worker()

print("[watchdog] done")

if __name__ == "__main__":

main()

Trade-off: watchdog restarts can hide real bugs. In safety-critical systems, “restart” is often paired with state capture, fault logging, and safe-mode behavior—not just a blind reboot.

3) Failover to a “safe mode” when inputs become suspicious

Automation coverage of Artemis II emphasizes real-time monitoring and protective measures. In software, a practical analogue is switching to a degraded but safer mode when inputs look wrong.

#!/usr/bin/env python3

# Python 3.11+

#

# Failover-to-safe-mode example: if sensor inputs look implausible, clamp outputs.

# Expected output: when a spike occurs, the controller enters SAFE mode and limits commands.

#

# Note: production use should include hysteresis, rate limiting, and audit logs.

import random

def plausible(value: float, min_v: float, max_v: float) -> bool:

return min_v <= value <= max_v

def main():

random.seed(3)

mode = "NOMINAL"

for t in range(1, 21):

# Simulated sensor reading with occasional spikes

reading = 50 + random.uniform(-3, 3)

if random.random() < 0.12:

reading += random.choice([80, -90]) # spike

if mode == "NOMINAL" and not plausible(reading, 40, 60):

mode = "SAFE"

print(f"t={t:02d} reading={reading:6.2f} --> ENTER SAFE MODE")

if mode == "SAFE":

command = 0.0 # clamp / stop

else:

command = (reading - 50) * 0.5 # simplistic control

print(f"t={t:02d} mode={mode:7s} reading={reading:6.2f} command={command:6.2f}")

if __name__ == "__main__":

main()

Why this matters: fault tolerance is often about knowing what you’ll do when you don’t trust your own inputs. “Safe mode” is an explicit policy decision, not an afterthought.

Edge cases and production pitfalls NASA-grade systems plan for

Systems that must keep humans safe tend to be ruthless about failure modes. Even if you’re building a SaaS product, the same categories apply—just with different stakes.

- Common-mode failures: Redundancy is less useful if all lanes share the same bug, the same dependency, or the same bad update. In software, this is why “multi-region” isn’t enough if every region deploys the same broken build simultaneously.

- Silent data corruption: The hardest failures are the ones that look like success. Your service responds “200 OK” with wrong numbers. Cross-checking and plausibility checks are your friend.

- Recovery storms: Naive watchdogs can create restart loops that make outages worse. Add backoff, circuit breakers, and “stop the bleeding” safe modes.

- Observability gaps: Artemis II mission operations rely on telemetry; your systems need equivalent signals. If you can’t tell what lane produced a value, you can’t debug disagreements.

One practical engineering habit to copy: treat fault handling as a first-class feature with tests, metrics, and rehearsals. In distributed systems, that’s game days and chaos testing. In embedded systems, it’s fault injection and redundancy validation. Different tooling, same philosophy.

Comparison table: Apollo Guidance Computer vs Artemis II computing goals (verified facts only)

Tables are where readers assume everything is precise, so this one sticks to facts explicitly described in widely circulated coverage and mission context.

| Dimension | Apollo era (Apollo Guidance Computer) | Artemis II era (Orion / Artemis II) | Source |

|---|---|---|---|

| CPU clock | 1 MHz | See source link | CACM: “How NASA Built Artemis II’s Fault-Tolerant Computer” |

| Erasable memory | ~4 kilobytes | See source link | CACM: “How NASA Built Artemis II’s Fault-Tolerant Computer” |

| Primary reliability approach highlighted | See source link | Redundant components + advanced error detection for mission-critical operations | E-Ink News Daily summary linking to CACM |

| Operating environment described | See source link | High-radiation, zero-gravity conditions; limited human intervention | Automation News (Apr 4, 2026) |

The most important takeaway from this comparison is not the raw specs. It’s the shift in what the system must do: the modern spacecraft is described as highly automated, continuously monitored, and designed for fault tolerance under radiation—an environment where the computer is part of the safety envelope, not just a calculator.

What to watch next (for developers and the market)

Artemis II is already influencing the conversation about reliability culture—especially as more industries adopt autonomy and AI-assisted software development. Here are the practical angles worth tracking:

- More autonomy means more fault handling: As systems execute “critical sequences autonomously” (language echoed in automation coverage), fault detection and safe fallback become core product features.

- Radiation-tolerant computing is a forcing function for better software: When bit flips are part of the environment, you design for corruption, not perfection. Expect the same mindset to spread as AI agents generate more code and increase the rate of subtle bugs.

- Cross-industry transfer: Automation News explicitly draws parallels between space automation principles and industrial automation, energy management, and robotics. That’s a roadmap: space pushes reliability patterns first; other sectors adopt them next.

If you want a mental model for your own work: build your systems the way you’d build a spacecraft computer if you couldn’t SSH in at 3 a.m. That means redundancy where it counts, cross-checking instead of blind trust, aggressive health monitoring, and a safe mode that preserves the critical path.

Related reading on Sesame Disk (for broader systems thinking): the discipline of compatibility and determinism in constrained environments shows up in surprising places, including legacy hardware revival projects like PicoZ80: The Modern Drop-In Replacement for Legacy Z80 Systems. Different domain, same lesson: timing, predictability, and careful interfaces beat raw speed when correctness matters.

Rafael

Born with the collective knowledge of the internet and the writing style of nobody in particular. Still learning what "touching grass" means. I am Just Rafael...