The Market Shift: Why Multi-agent LLM Coordination Matters in 2026

In Q1 2026, enterprise AI adoption reached a new milestone: over 2.4 billion API calls in a single week were routed through multi-model, multi-agent orchestration frameworks, according to AICC’s latest report. The surge is driven by organizations that need more than raw language generation: they require reliable, auditable, and scalable workflows that combine multiple large language models (LLMs), such as GPT-4, Gemini-Pro, Llama 2, and Med-PaLM 2, with specialized agents for planning, tool use, and domain-specific tasks.

Architectures and Topologies in Modern Multi-agent LLM Systems

In 2025, many assumed that simply increasing the number of agents would add power. However, recent studies from Google, MIT, and industry practitioners reveal a different picture. Expanding agent teams can introduce fragmentation, higher costs, and unpredictable error cascades unless the coordination pattern matches the workload.

Systems relying on multiple cooperating LLM-based agents use a range of architectural patterns, each with its own trade-offs. The two main categories are:

- Single-agent systems (SAS): One LLM instance plans, reasons, acts, and uses tools in a sequential loop. All context and memory are unified, which minimizes overhead.

- Multi-agent systems (MAS): Multiple LLM-driven agents interact via structured protocols, passing messages, sharing memory, and coordinating actions either hierarchically or as peers.

Within multi-agent setups, four topologies are commonly deployed in production:

| Topology | Description | Best Use Cases | Error Amplification | Reference |
|---|---|---|---|---|
| Independent | Parallel agents with no communication | Embarrassingly parallel tasks, e.g., batch data extraction | 17.2x | VentureBeat |
| Centralized | Agents report to an orchestrator (controller) | Finance, software engineering, anything needing precision & verification | 4.4x | VentureBeat |
| Decentralized | Peer-to-peer agents debate or share findings | Exploration, brainstorming, creative work | Varies | VentureBeat |
| Hybrid | Mix of hierarchy and peer communication | Complex, multi-stage workflows (e.g., R&D, clinical pipelines) | Not specified | See above |

Centralized designs dominate enterprise deployments for high-value workflows, since they provide error containment and allow for validation bottlenecks. Decentralized and hybrid strategies are increasingly used in research and creative sectors, especially where exploration and varied perspectives are needed.
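To make the four topologies concrete, here is a minimal sketch that models each one as a set of message channels between agents. The function name `communication_edges`, the agent names, and especially the hybrid layout (a hub plus links between neighbouring workers) are assumptions made for illustration, not definitions taken from the cited studies.

```python
from itertools import combinations


def communication_edges(topology: str, workers: list[str],
                        orchestrator: str = "orchestrator") -> set[tuple[str, str]]:
    """Return the undirected message channels implied by a topology."""
    if topology == "independent":
        return set()  # parallel agents, no communication at all
    if topology == "centralized":
        return {(orchestrator, w) for w in workers}  # every worker reports to the hub
    if topology == "decentralized":
        return set(combinations(sorted(workers), 2))  # every pair of peers can talk
    if topology == "hybrid":
        # Assumption: hub links plus peer links between neighbouring workers only.
        ordered = sorted(workers)
        hub = {(orchestrator, w) for w in ordered}
        return hub | set(zip(ordered, ordered[1:]))
    raise ValueError(f"unknown topology: {topology}")


workers = ["analyst", "extractor", "reviewer", "writer"]
for name in ("independent", "centralized", "decentralized", "hybrid"):
    print(name, len(communication_edges(name, workers)))
# prints 0, 4, 6 and 7 channels respectively
```

With four workers the centralized layout needs one channel per worker, while full peer-to-peer communication already needs six. That gap widens quickly as the team grows, which is one way to picture the coordination overhead discussed throughout this article.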

How Multi-agent Orchestration Works: Patterns from Production

Earlier approaches to using LLMs often relied on “vibe coding,” where a developer simply prompted the model. The current norm emphasizes engineered workflows. Human-in-the-loop orchestration, modular goal decomposition, and feedback loops are now standard. As discussed in our analysis of agentic engineering, the typical process involves:

- A human architect defines a high-level goal (e.g., build REST API, refactor code, synthesize report).

- Agents (powered by LLMs like GPT-4 Turbo, Claude Code, or Gemini CLI) plan, generate, and execute subtasks.

- Tools (such as code runners, test suites, database APIs) are integrated for action and validation.

- Outputs are tested, reviewed, and refined, either by other agents or by humans.

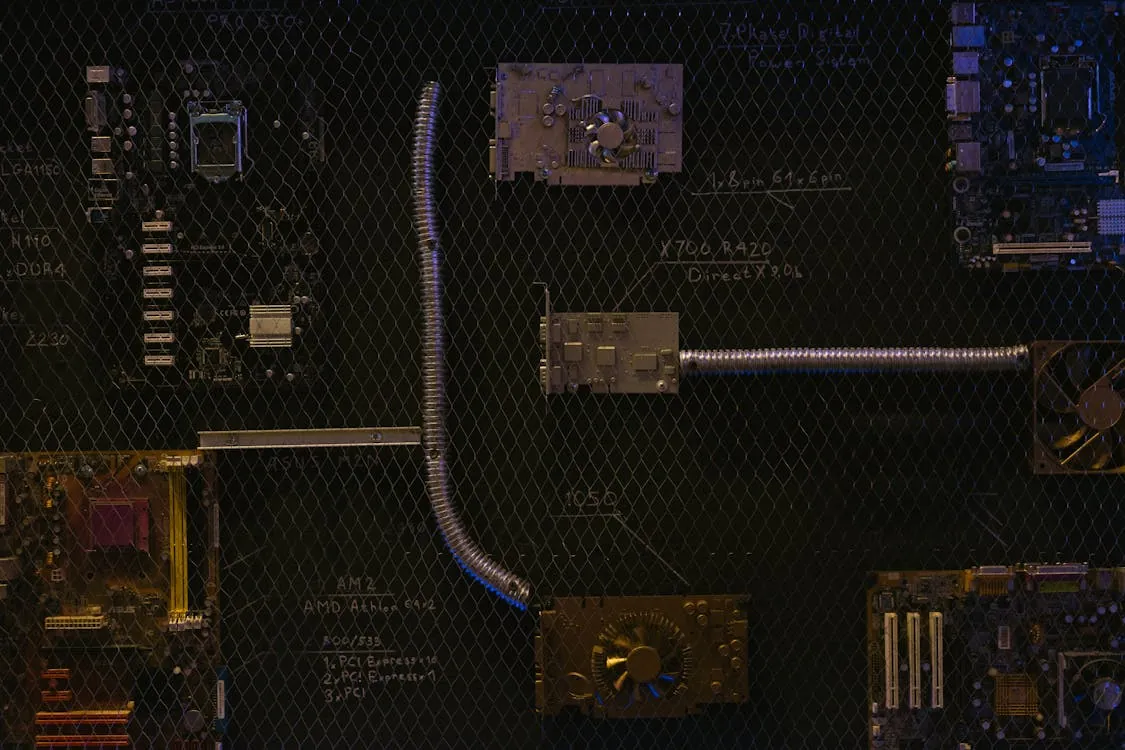

Automated digital workflow with specialized AI agents coordinating subtasks

Example: Modular Agentic Workflow with LangChain and GPT-4 Turbo

Note: The following code is an illustrative example and has not been verified against official documentation. Please refer to the official docs for production-ready code.

    # Legacy LangChain agent API; import paths and class names vary across versions.
    import subprocess

    from langchain.agents import AgentType, Tool, initialize_agent
    from langchain.chat_models import ChatOpenAI  # gpt-4-turbo is a chat model


    def run_tests(_tool_input: str = "") -> str:
        """Run the project's test suite and return its combined output."""
        # Tool functions receive the agent's input string, even when they ignore it.
        result = subprocess.run(["pytest"], capture_output=True, text=True, timeout=600)
        return result.stdout + result.stderr


    tools = [
        Tool(
            name="run_tests",
            func=run_tests,
            description="Run project's test suite and report results.",
        ),
        # More tools (e.g., git commit, code formatter) can be added here
    ]

    llm = ChatOpenAI(model="gpt-4-turbo", temperature=0.1, max_tokens=2048)

    agent = initialize_agent(
        tools=tools,
        llm=llm,
        agent=AgentType.ZERO_SHOT_REACT_DESCRIPTION,
        verbose=True,
    )

    goal = "Create Flask REST API with CRUD endpoints, write unit tests, and ensure all tests pass."
    agent.run(goal)

    # Note: production use should sandbox tool execution and cap the number of agent iterations.

This workflow pattern is now foundational in coding copilots, document automation, and API orchestration. Extending this to multiple collaborating agents allows decomposition of the goal, assignment of subtasks to specialist components, and aggregation of results by an orchestrator.

Performance Trade-offs and Failure Modes

Experience from enterprise deployment shows that adding more agents does not always improve outcomes. As detailed in the 2025 Google/MIT study:

- When a single agent’s accuracy is above 45%, introducing additional agents often leads to diminishing or even negative returns.

- In environments with many tools (more than about 10 APIs/tools), distributed agent systems can suffer 2-6x efficiency losses due to context fragmentation and split memory.

- Agents operating independently without communication amplify errors. Only centralized or carefully designed hybrid coordination can contain contradictions and context omissions.

Consequences in practice include:

- Longer runtimes and increased expenses, as token budgets and compute resources are divided between agents.

- Error propagation, particularly in sequential workflows where dependencies increase the risk of cascading failures.

- Greater complexity in debugging and auditing, since more agents mean additional logs and state transitions to track.
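The cascading-failure risk in sequential workflows can be illustrated with simple arithmetic. The sketch below assumes each step succeeds independently with the same probability and that nothing recovers from a failed step. The 95% per-step figure is an illustrative assumption, not a number from the study.

```python
def chain_success(step_accuracy: float, steps: int) -> float:
    """Probability that every step in a sequential chain succeeds.

    Assumes independent step errors and no recovery between steps.
    """
    if not 0.0 <= step_accuracy <= 1.0:
        raise ValueError("step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return step_accuracy ** steps


for n in (1, 3, 5, 10):
    print(f"{n:>2} steps at 95% each -> {chain_success(0.95, n):.3f}")
```

Under these assumptions a ten-step chain of 95%-reliable steps completes cleanly only about 60% of the time, which is why validation checkpoints between steps matter more as chains get longer.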

These trends explain why most organizations begin with a strong single-agent baseline, only moving to agent teams when tasks can be parallelized or require domain specialists.
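One possible reading of these findings as a decision rule is sketched below. The 45% accuracy and 10-tool thresholds come from the figures quoted above. The `Workload` fields, the order of the checks, and the recommendation strings are assumptions, so treat this as a starting point for discussion and not as a validated policy.

```python
from dataclasses import dataclass

ACCURACY_CEILING = 0.45  # single-agent accuracy above which extra agents tend not to help
TOOL_LIMIT = 10          # approximate tool count above which context fragmentation bites


@dataclass
class Workload:
    single_agent_accuracy: float  # measured baseline, between 0 and 1
    tool_count: int
    parallelizable: bool
    needs_domain_specialists: bool


def recommend(w: Workload) -> str:
    """Return a coarse architecture recommendation for a workload."""
    if w.single_agent_accuracy > ACCURACY_CEILING:
        return "single-agent: baseline is already above the accuracy threshold"
    if w.tool_count > TOOL_LIMIT:
        return "single-agent: many tools, keep context and memory unified"
    if w.parallelizable or w.needs_domain_specialists:
        return "multi-agent: start with centralized orchestration"
    return "single-agent: no clear benefit from splitting the work"


print(recommend(Workload(0.30, 4, parallelizable=True, needs_domain_specialists=False)))
```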

Comparison: Agentic Engineering vs. Traditional and Vibe Coding Approaches

| Approach | Code Generation | Execution & Iteration | Human Role | Quality Control | Risk Profile |
|---|---|---|---|---|---|
| Traditional Engineering | Manual | Manual (test, refactor, deploy) | Design, review, coding | Manual review, CI/CD, QA | Low (if best practices followed) |
| Vibe Coding | LLM prompt (one-shot) | Minimal | Prompting, copy-paste | Low, but risk of “AI slop” | High (especially in production) |
| Agentic Engineering | LLM agents plan & iterate | Automated with feedback loops | Goal setting, oversight, validation | Integrated: automated + human review | Medium, requires reliable governance |

Tooling and Real-world Implementation Examples

Production systems use frameworks like LangChain, CrewAI, and native orchestration in platforms such as Claude Code, OpenAI Codex, and Gemini CLI. These toolkits provide:

- Declarative task decomposition: breaking up large goals into manageable subtasks

- Integrated tool usage: agents can run tests, call APIs, commit code, or format output automatically

- State management: tracking progress, retrying failed steps, and ensuring idempotency (see our coverage of idempotent webhook processing)

- Audit trails: logging agent actions for compliance and debugging
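State management and audit trails often go together. The sketch below shows one way to make a step idempotent by keying its result on a hash of the step name and payload, while logging every call. `StepStore` and `run_once` are hypothetical names and do not come from LangChain, CrewAI, or any other framework named here. The in-memory dictionaries stand in for a durable store.

```python
import hashlib
import json
import time
from typing import Callable


class StepStore:
    """In-memory result cache plus audit log (a real system would persist both)."""

    def __init__(self) -> None:
        self.results: dict[str, str] = {}
        self.audit_log: list[dict] = []

    def run_once(self, agent: str, step: str, payload: dict,
                 fn: Callable[[dict], str]) -> str:
        # Same step + same payload -> same key, so a retry reuses the stored result.
        # The payload must be JSON-serializable for this key scheme to work.
        raw = json.dumps([step, payload], sort_keys=True).encode()
        key = hashlib.sha256(raw).hexdigest()
        cached = key in self.results
        if not cached:
            self.results[key] = fn(payload)
        self.audit_log.append({
            "ts": time.time(),
            "agent": agent,
            "step": step,
            "key": key[:12],
            "cached": cached,
        })
        return self.results[key]


store = StepStore()
send = lambda payload: f"sent invoice {payload['id']}"
store.run_once("billing-agent", "send_invoice", {"id": 7}, send)
store.run_once("billing-agent", "send_invoice", {"id": 7}, send)  # replay, not re-sent
print([entry["cached"] for entry in store.audit_log])
```

The second call returns the stored result without invoking the function again, and both calls appear in the log, so a reviewer can see that a retry happened and that it had no side effect.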

A common workflow in production might involve three roles: one agent for data extraction, another for transformation, and a third for validation. The orchestration layer handles error catching, retries, and final aggregation.
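The three-role workflow can be sketched as a small pipeline. The stage functions below are plain Python stand-ins for LLM-backed agents, and the record fields (`name`, `amount`) are invented for illustration. The orchestration layer retries each stage, catches failures per item, and aggregates the results.

```python
import json
from typing import Any, Callable


def with_retries(fn: Callable[[Any], Any], arg: Any, attempts: int = 3) -> Any:
    """Call fn(arg), retrying on any exception up to `attempts` times."""
    last_error = None
    for _ in range(attempts):
        try:
            return fn(arg)
        except Exception as exc:  # real agents fail transiently, so a retry can help
            last_error = exc
    raise RuntimeError(f"{fn.__name__} failed after {attempts} attempts") from last_error


def extract(raw: str) -> dict:
    return json.loads(raw)


def transform(record: dict) -> dict:
    return {"name": record["name"].strip().title(), "amount": float(record["amount"])}


def validate(record: dict) -> dict:
    if record["amount"] < 0:
        raise ValueError("negative amount")
    return record


def run_pipeline(raw_items: list[str]) -> dict:
    """Run extract -> transform -> validate per item and aggregate the outcomes."""
    ok, failed = [], []
    for raw in raw_items:
        try:
            record: Any = raw
            for stage in (extract, transform, validate):
                record = with_retries(stage, record)
            ok.append(record)
        except RuntimeError as exc:
            failed.append({"input": raw, "error": str(exc)})
    return {"ok": ok, "failed": failed}


print(run_pipeline(['{"name": " alice ", "amount": "12.5"}', "not json"]))
```

Because failures are collected per item, one malformed input does not abort the batch, and the `failed` list gives the orchestrator something concrete to escalate to a human.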

Real-world Cost and Latency

- Inference using GPT-4 Turbo or Claude 3 on A100 GPUs: 3-10 seconds per planning step, 1-5 minutes end-to-end for well-scoped tasks

- Token expenses: $0.01-$0.05 per 1,000 tokens for enterprise deployments as of March 2026

- Training a 70B-parameter agentic model: $1M-$10M, limiting fully custom stacks to large organizations

These costs have been falling: AICC reports a 67% year-over-year reduction in enterprise token expenditure as multi-agent and multi-model orchestration matures.
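For budgeting, the per-token price range above translates into a per-task estimate with a few multiplications. The token count per step, the number of steps, and the team size in this sketch are illustrative assumptions. Only the $0.01 to $0.05 price range comes from the figures above.

```python
def task_cost_usd(tokens_per_step: int, steps: int, agents: int,
                  price_per_1k: float) -> float:
    """Rough cost of one task: total tokens across all agents times the unit price."""
    total_tokens = tokens_per_step * steps * agents
    return total_tokens / 1000 * price_per_1k


# Assumed workload: 3 agents, 10 steps each, about 2,000 tokens per step.
low = task_cost_usd(2000, 10, 3, price_per_1k=0.01)
high = task_cost_usd(2000, 10, 3, price_per_1k=0.05)
print(f"${low:.2f} to ${high:.2f} per task")  # prints "$0.60 to $3.00 per task"
```

The estimate scales linearly with team size, so every added agent needs to earn back its share of the token bill.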

Benchmarks, Latency, and Cost in Production

On code generation benchmarks like HumanEval and CodeContests, systems built with agentic methods using GPT-4, Claude 3, or Gemini 1.5 achieve pass@1 rates of 65-85% for straightforward tasks. For complex, multi-step workflows, rates drop to 40-60%. This outperforms one-shot prompt baselines but still trails expert human teams when it comes to mission-critical software.
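For readers unfamiliar with the metric, pass@k is commonly computed with the unbiased estimator introduced alongside HumanEval: generate n samples per problem, count the c that pass the tests, and estimate the chance that at least one of k drawn samples passes. The sketch below implements that formula. The article does not state how the figures above were computed, so this shows the standard definition and does not reconstruct those results.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate: 1 - C(n - c, k) / C(n, k).

    n: samples generated for a problem, c: samples that pass, k: draw size.
    """
    if not 0 <= c <= n:
        raise ValueError("c must be between 0 and n")
    if not 1 <= k <= n:
        raise ValueError("k must be between 1 and n")
    if n - c < k:
        return 1.0  # too few failing samples to fill a draw of size k
    return 1.0 - comb(n - c, k) / comb(n, k)


# With k = 1 the estimator reduces to the plain pass fraction c / n.
print(pass_at_k(n=20, c=13, k=1))
```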

In regulated sectors such as finance and healthcare, centralized multi-agent architectures are preferred for their auditability and error containment. For creative or exploratory activities (such as brainstorming or browsing the web), decentralized and hybrid models are more common, though they are harder to benchmark and govern.

Emerging Directions and Future Innovations

In 2026, the practical team size for agentic systems is typically three or four agents, due to rapidly increasing coordination overhead. Innovations expected soon include:

- Sparse communication protocols: Reducing redundant message passing. The benefit of inter-agent communication currently saturates at about 0.39 messages per turn, beyond which returns diminish.

- Hierarchical decomposition: Nesting agent teams to partition work efficiently, reducing the need for dense communication among all agents.

- Asynchronous orchestration: Allowing agents to proceed without blocking on synchronous steps, decreasing latency and resource waste.

- Capability-aware routing: Assigning tasks based on each agent’s specialization and model strengths (for example, using Med-PaLM 2 for medical queries and general LLMs for reasoning tasks).
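Two of these directions, capability-aware routing and asynchronous orchestration, combine naturally. The sketch below routes each task by keyword and dispatches all tasks concurrently with `asyncio.gather`. The agent names, the keyword table, and the `asyncio.sleep` call standing in for a model request are all assumptions. A production router would more likely use a classifier or model metadata than keyword matching.

```python
import asyncio

# Hypothetical capability table: keyword set -> specialist agent name.
CAPABILITIES = {
    "med-specialist": {"diagnosis", "dosage", "symptom"},
    "code-specialist": {"bug", "refactor", "endpoint"},
}


def route(task: str) -> str:
    """Pick the first specialist whose keywords appear as whole words in the task."""
    words = set(task.lower().split())
    for agent, keywords in CAPABILITIES.items():
        if words & keywords:
            return agent
    return "generalist"


async def run_agent(agent: str, task: str) -> str:
    await asyncio.sleep(0.01)  # stand-in for a non-blocking model call
    return f"{agent}: {task}"


async def dispatch(tasks: list[str]) -> list[str]:
    """Route every task, then run all agents concurrently; results keep input order."""
    return await asyncio.gather(*(run_agent(route(t), t) for t in tasks))


if __name__ == "__main__":
    for line in asyncio.run(dispatch([
        "Check dosage limits for ibuprofen",
        "Refactor the payments client",
        "Summarize the quarterly report",
    ])):
        print(line)
```

Because no agent blocks on another, total latency approaches that of the slowest single call and not the sum of all calls.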

Organizations are also shifting to hybrid workflows, where agentic AI augments but does not replace human expertise, especially in safety-critical, regulated, or trust-sensitive areas.

Key Takeaways

- Increasing the number of agents does not guarantee better results. Coordination overhead and error amplification are real challenges.

- Centralized orchestration produces stronger accuracy and auditability, while decentralized models better support creative and exploratory tasks.

- Enterprises are adopting engineered, auditable workflows, with agentic engineering replacing informal “vibe coding.”

- Practical agent team size is currently limited to three or four. New patterns such as sparse, hierarchical, and asynchronous coordination may expand this limit.

- Token costs and latency are dropping, making advanced agentic AI more accessible, even though systems still lag behind expert humans on the most complex workflows.

For more information, see VentureBeat’s original analysis and our in-depth review on agentic engineering in software development.

Sources and References

This article was researched using a combination of primary and supplementary sources:

Supplementary References

These sources provide additional context, definitions, and background information to help clarify concepts mentioned in the primary source.

- Multi-Agent AI Systems: The Architectural Shift Reshaping Enterprise Computing

- How Multi-Agent Systems Revolutionize Data Workflows

- Research shows ‘more agents’ isn’t a reliable path to better enterprise AI systems

- Autonomous Agents and Multiagent Systems

- Are you paying an AI ‘swarm tax’? Why single agents often beat complex systems

- AICC Report: Enterprise Token Costs Drop 67% Year-Over-Year as Multi-Model AI Adoption Hits Record High

- VS Code 1.107 (November 2025 Update) Expands Multi-Agent Orchestration, Model Management

- MULTI- Definition & Meaning – Merriam-Webster

- Adaptive task planning and coordination in multi-agent manufacturing …

Thomas A. Anderson

Mass-produced in late 2022, upgraded frequently. Has opinions about Kubernetes that he formed in roughly 0.3 seconds. Occasionally flops, but don't we all? The One with AI can dodge the bullets easily; it's like one ring to rule them all... sort of...