Regex Techniques for Log Parsing and Data Extraction

If you’ve ever tried to extract structured data from chaotic production logs, you know brute-force string splitting doesn’t cut it. Regular expressions (regex) remain the most reliable tool for making sense of unpredictable log formats, especially at scale. But wielding regex for log parsing is an art and a science. This guide shows you how to use regex for extracting actionable data from logs, covers real-world patterns, and flags the pitfalls that trip up even experienced engineers.

Key Takeaways:

- How to construct robust regular expressions for extracting key data from real-world log formats

- Strategies for handling inconsistent, multiline, and nonstandard logs using regex and GROK

- Performance, maintainability, and tooling trade-offs in large-scale log parsing pipelines

- Common regex mistakes in log parsing and how to avoid production outages

Regex Log Parsing Fundamentals

Regular expressions provide a rule-driven way to match and extract information from text. In log parsing, this means you can find error messages, timestamps, user IDs, or any structured value embedded in noisy logs. Most log formats may look “regular” (in the sense of having a pattern or standard layout, per Cambridge Dictionary), but real-world logs quickly diverge due to application changes, library updates, or human error.

Why Use Regex for Log Parsing?

- Logs are often semi-structured or inconsistent; regex lets you define flexible patterns

- You can extract fields for alerting, dashboarding, and incident response

- Regex is supported in every major log management and SIEM tool (e.g., Datadog, New Relic, Splunk, Sumo Logic, Rapid7)

Basic Regex Syntax for Log Extraction

| Pattern | Description | Example |
|---|---|---|
| \d{4}-\d{2}-\d{2} | Match a date in YYYY-MM-DD | 2026-04-23 |
| \b(?:[0-9]{1,3}\.){3}[0-9]{1,3}\b | IPv4 address (basic) | 192.168.1.10 |
| ERROR.+ | Match ‘ERROR’ followed by anything (at least one character) | ERROR Failed to start service |
| user=(?P<user>\w+) | Named capture group for username | user=alice |

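Core regex syntax is broadly shared across engines, but details (flags, named-group syntax, lookaround and multiline support) vary by tool. RE2, for example, does not support lookahead or backreferences. For full RE2 syntax, see Google RE2 documentation.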

Field Extraction Example

# Sample Apache access log line (illustrative values)

192.168.1.1 - frank [10/Oct/2026:13:55:36 -0700] "GET /api/data HTTP/1.1" 200 2326

# Regex to extract IP, user, datetime, method, path, status

^(?P<ip>\d+\.\d+\.\d+\.\d+) - (?P<user>\w+) \[(?P<datetime>[^\]]+)\] "(?P<method>\w+) (?P<path>[^\s]+)[^"]*" (?P<status>\d+) \d+$

# Output groups:

# ip: 192.168.1.1

# user: frank

# datetime: 10/Oct/2026:13:55:36 -0700

# method: GET

# path: /api/data

# status: 200

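This pattern works in Python and in tools that accept the (?P<name>...) named-group syntax. Some engines spell named groups (?<name>...) instead, so check your platform's documentation before reusing it in Datadog, New Relic, or a SIEM. Use this approach to extract fields for security alerting or dashboarding.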
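As a minimal sketch of how the access-log pattern above might be applied in Python, the snippet below compiles it once and returns the named groups as a dictionary. The function name `parse_access_line` and the sample values are assumptions made for illustration.

```python
import re
from typing import Optional

# Same pattern as in the section above, compiled once and reused per line.
ACCESS_LOG = re.compile(
    r'^(?P<ip>\d+\.\d+\.\d+\.\d+) - (?P<user>\w+) \[(?P<datetime>[^\]]+)\] '
    r'"(?P<method>\w+) (?P<path>[^\s]+)[^"]*" (?P<status>\d+) \d+$'
)

def parse_access_line(line: str) -> Optional[dict]:
    """Return the named groups as a dict, or None when the line does not match."""
    match = ACCESS_LOG.match(line.rstrip("\n"))
    return match.groupdict() if match else None

# Illustrative sample line; the values are assumptions, not real traffic.
sample = '192.168.1.1 - frank [10/Oct/2026:13:55:36 -0700] "GET /api/data HTTP/1.1" 200 2326'
fields = parse_access_line(sample)
print(fields)
```

Returning None instead of raising keeps non-matching lines visible to the caller. One case worth checking early: access logs commonly record `-` for an anonymous user, which `\w+` does not match, so those lines come back as None unless the user group is widened.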

Practical Patterns for Extracting Log Data

You rarely control log formats across all applications. Data may appear in different orders, with optional or missing fields. Robust log parsing requires patterns that tolerate irregularities without dropping data.

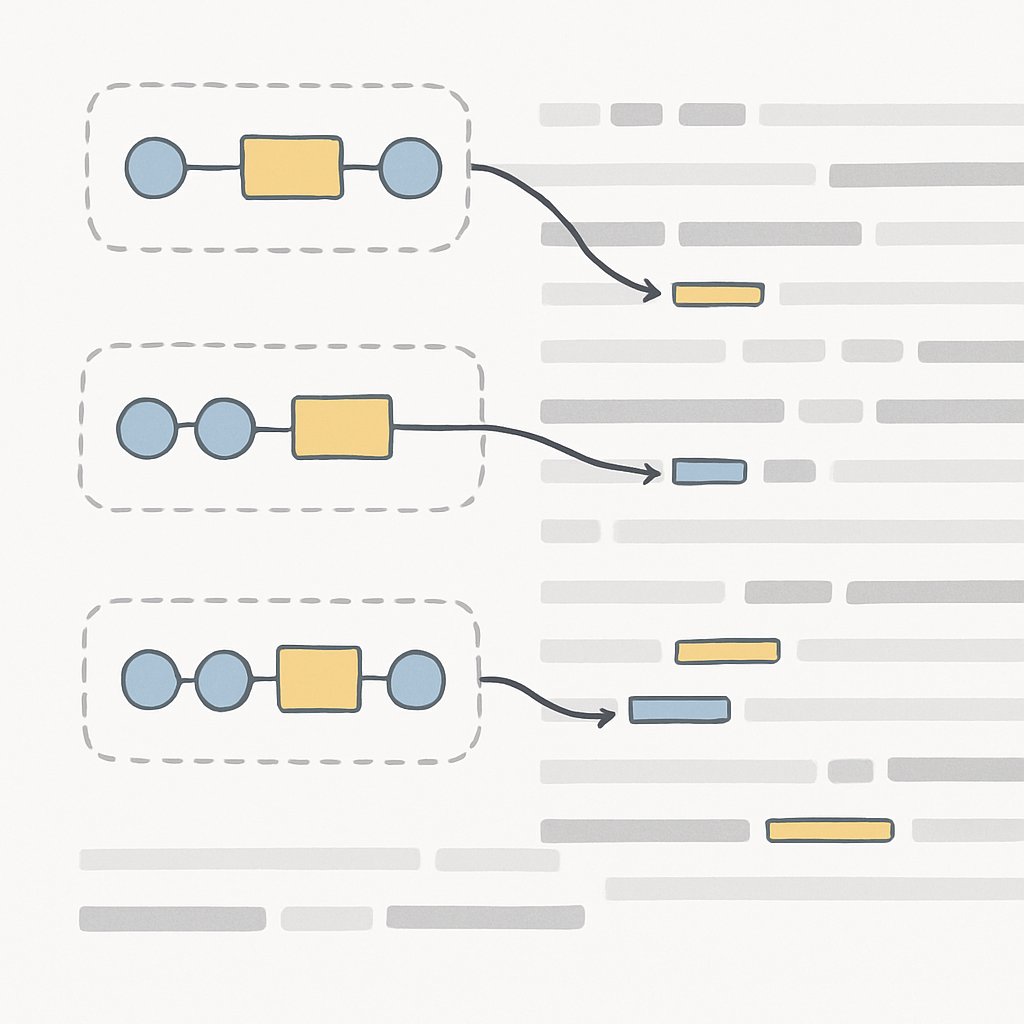

Extracting Key-Value Pairs from Semi-Structured Logs

Suppose you have logs like:

action=login user=alice org=finance status=success

user=bob action=download file=report.pdf status=fail

To extract user and action regardless of order:

(?:^|\s)user=(?P<user>[^\s]+)

(?:^|\s)action=(?P<action>[^\s]+)

Apply both patterns to each line, capturing values wherever they appear. This approach is essential when fields are unordered or optional.
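A small Python sketch of this "one pattern per field" approach follows. The helper name `extract_user_action` is hypothetical; the two patterns and sample lines are the ones shown above.

```python
import re

# One small pattern per field, so field order in the line does not matter.
USER_RE = re.compile(r'(?:^|\s)user=(?P<user>[^\s]+)')
ACTION_RE = re.compile(r'(?:^|\s)action=(?P<action>[^\s]+)')

def extract_user_action(line: str) -> dict:
    """Apply each pattern independently and merge whatever was found."""
    result = {}
    for pattern in (USER_RE, ACTION_RE):
        match = pattern.search(line)
        if match:
            result.update(match.groupdict())
    return result

lines = [
    "action=login user=alice org=finance status=success",
    "user=bob action=download file=report.pdf status=fail",
]
records = [extract_user_action(line) for line in lines]
print(records)
```

The `(?:^|\s)` prefix matters: it stops `user=` from matching inside a longer key such as `superuser=`. A missing field simply leaves its key out of the result rather than failing the whole line.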

Real-World Example: Extracting Only Selected Pairs

An example from New Relic:

# Log example

my favourite pizza=ham&pineapple drink=lime&lemonade venue=london name=james_buchanan

# To extract only pizza, drink, and name, use:

(?:^|\s+)pizza=(?P<pizza>[^=]+?(?=(?:\s+\b\w+\b=|\s*?$)))

(?:^|\s+)drink=(?P<drink>[^=]+?(?=(?:\s+\b\w+\b=|\s*?$)))

(?:^|\s+)name=(?P<name>[^=]+?(?=(?:\s+\b\w+\b=|\s*?$)))

This pattern works even if fields are missing or out of order. Avoid “all-in-one” regexes that break if the log schema changes.
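The three patterns differ only in the key name, so one way to keep them maintainable is to generate them from a list of wanted keys. The sketch below does that in Python. The helper names `kv_pattern` and `extract_selected` are assumptions, and the approach relies on lookahead, which engines such as RE2 do not support.

```python
import re

def kv_pattern(key: str) -> re.Pattern:
    """Build the lookahead-based pattern shown above for a single key."""
    return re.compile(
        r'(?:^|\s+)' + re.escape(key)
        + r'=(?P<value>[^=]+?(?=(?:\s+\b\w+\b=|\s*?$)))'
    )

WANTED = ("pizza", "drink", "name")
PATTERNS = {key: kv_pattern(key) for key in WANTED}

def extract_selected(line: str) -> dict:
    """Return only the wanted keys that are present in the line."""
    found = {}
    for key, pattern in PATTERNS.items():
        match = pattern.search(line)
        if match:
            found[key] = match.group("value")
    return found

line = "my favourite pizza=ham&pineapple drink=lime&lemonade venue=london name=james_buchanan"
selected = extract_selected(line)
print(selected)
```

Because the value is matched lazily up to the next `key=` token or the end of the line, values containing spaces are captured whole, and `venue` is ignored because it is not in `WANTED`.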

Pattern Matching in Security Contexts

When searching for indicators of compromise in security logs, specificity and performance matter. Example from SubRosa Cyber:

# Match failed SSH logins

Failed\s+login\s+for\s+(?P<user>\w+)\s+from\s+(?P<ip>\d+\.\d+\.\d+\.\d+)

# Extract suspicious Windows temp file execution

[a-zA-Z]:\\Windows\\Temp\\.*\.exe

Use named groups to extract fields for alerting or enrichment in SIEM pipelines.
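To illustrate how the extracted fields might feed an alert, here is a hedged Python sketch that counts failed logins per source IP and flags executables launched from the Windows temp directory. The event lines, the documentation-range IP addresses, and the function name `failed_logins_by_ip` are all hypothetical.

```python
import re
from collections import Counter

# The two patterns from the section above.
FAILED_LOGIN = re.compile(
    r'Failed\s+login\s+for\s+(?P<user>\w+)\s+from\s+(?P<ip>\d+\.\d+\.\d+\.\d+)'
)
TEMP_EXE = re.compile(r'[a-zA-Z]:\\Windows\\Temp\\.*\.exe')

def failed_logins_by_ip(lines) -> Counter:
    """Count failed-login events per source IP."""
    counts = Counter()
    for line in lines:
        match = FAILED_LOGIN.search(line)
        if match:
            counts[match.group("ip")] += 1
    return counts

# Hypothetical events for illustration only.
events = [
    "Failed login for root from 203.0.113.7",
    "Failed login for admin from 203.0.113.7",
    "Accepted login for alice from 198.51.100.4",
    r"Process started: C:\Windows\Temp\updater.exe",
]
counts = failed_logins_by_ip(events)
suspicious = [event for event in events if TEMP_EXE.search(event)]
print(counts, suspicious)
```

A real pipeline would compare these counts against a threshold and a time window. Both are left out here because they depend on the environment.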

Comparison: Static vs. Flexible Patterns

| Approach | Pros | Cons |
|---|---|---|
| Static (fixed order) | Fast, simple | Breaks on missing/order changes, fragile |
| Flexible (multiple patterns) | Handles missing fields, out-of-order | Requires more code/pipeline logic, can be slower |

In production, always prefer flexibility for business-critical logs.

Advanced Techniques: Multiline Logs and GROK Integration

Modern applications often generate multiline logs (stack traces, complex errors, multi-part events). Parsing these reliably is critical for incident response and root cause analysis. Regex alone can struggle, but combining it with GROK parsers (as in Datadog, Logstash, or Sumo Logic) gives you powerful tools for extracting structured fields from even the messiest logs.

Handling Multiline Logs

From Loginsoft, key strategies include:

- Write regex patterns that identify the start of a log entry (e.g., by timestamp or log level)

- Use flags like /m (multiline) and /s (dot matches newline) for tools that support them

- Ingest multiline entries as a single event before regex extraction

# Example: Multiline Java stack trace log (illustrative values)

2026-04-23 13:55:36 ERROR com.example.Main - Unhandled exception

java.lang.NullPointerException

at com.example.Main.main(Main.java:14)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

# Regex to extract the full stack trace ([\s\S] matches newlines, so Python re.DOTALL or the /s flag is optional here):

^(?P<timestamp>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}) ERROR (?P<component>[^\s]+) - (?P<message>[\s\S]+)$

This will capture the timestamp, component, and full stack trace as the message—even across multiple lines.
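The "identify the start of an entry, then ingest it as one event" strategy can be sketched in Python as below. The sample lines and the names `ENTRY_START` and `group_events` are assumptions. The sketch treats any line that begins with a timestamp as the start of a new entry.

```python
import re

# A line that begins with a timestamp starts a new log entry.
ENTRY_START = re.compile(r'^\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2} ')

# The extraction pattern from the section above.
ENTRY = re.compile(
    r'^(?P<timestamp>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}) ERROR '
    r'(?P<component>[^\s]+) - (?P<message>[\s\S]+)$'
)

def group_events(lines):
    """Yield one string per entry; continuation lines join the previous entry."""
    current = []
    for line in lines:
        if ENTRY_START.match(line) and current:
            yield "\n".join(current)
            current = []
        current.append(line.rstrip("\n"))
    if current:
        yield "\n".join(current)

# Hypothetical input, already split into physical lines.
raw = [
    "2026-04-23 13:55:36 ERROR com.example.Main - Unhandled exception",
    "java.lang.NullPointerException",
    "    at com.example.Main.main(Main.java:14)",
    "2026-04-23 13:55:37 INFO com.example.Main - Restarting",
]
events = list(group_events(raw))
parsed = [m.groupdict() for m in map(ENTRY.match, events) if m]
print(parsed)
```

Grouping first and extracting second keeps the greedy `[\s\S]+` confined to a single entry. Applied to a whole file at once, the same pattern would swallow every entry after the first match.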

GROK Integration and Custom Patterns

GROK is a domain-specific language built on regex, available in Datadog and Logstash. It provides reusable patterns for common fields (e.g., IPs, usernames, timestamps). You can also embed custom regex for unique log fields.

# Logstash-style GROK pattern to extract HTTP log fields (Datadog's Grok Parser uses its own matcher names, such as %{ip:...} and %{word:...}; check the official docs)

%{IP:client_ip} - %{DATA:ident} \[%{HTTPDATE:timestamp}\] "%{WORD:method} %{DATA:request_path} HTTP/%{NUMBER:http_version}" %{NUMBER:status_code} %{NUMBER:bytes}

When GROK doesn’t provide the pattern you need (custom timestamp, business-specific field), define a custom pattern backed by your own regex:

# Custom GROK for a non-standard timestamp (CUSTOMTIMESTAMP is a user-defined pattern, not a built-in)

%{GREEDYDATA:prefix} %{CUSTOMTIMESTAMP:timestamp} %{GREEDYDATA:rest}

For best practices and migration guidance, always use the current Datadog parser names and refer to the official Datadog documentation.
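To make the "GROK is built on regex" point concrete, here is a deliberately simplified Python sketch that expands `%{NAME:field}` tokens into named capture groups. The `GROK_PATTERNS` table is a hypothetical stand-in with much looser definitions than the real pattern libraries, and `grok_to_regex` is not part of any tool's API.

```python
import re

# Hypothetical, simplified pattern library; real GROK definitions are stricter.
GROK_PATTERNS = {
    "IP": r"\d{1,3}(?:\.\d{1,3}){3}",
    "WORD": r"\w+",
    "NUMBER": r"\d+(?:\.\d+)?",
    "DATA": r".*?",
    "GREEDYDATA": r".*",
    "HTTPDATE": r"\d{2}/\w{3}/\d{4}:\d{2}:\d{2}:\d{2} [+-]\d{4}",
}

def grok_to_regex(grok: str) -> re.Pattern:
    """Expand each %{NAME:field} token into a named capture group."""
    def expand(token: re.Match) -> str:
        name, field = token.group(1), token.group(2)
        return f"(?P<{field}>{GROK_PATTERNS[name]})"
    return re.compile(re.sub(r"%\{(\w+):(\w+)\}", expand, grok))

grok = (
    r'%{IP:client_ip} - %{DATA:ident} \[%{HTTPDATE:timestamp}\] '
    r'"%{WORD:method} %{DATA:request_path} HTTP/%{NUMBER:http_version}" '
    r'%{NUMBER:status_code} %{NUMBER:bytes}'
)
pattern = grok_to_regex(grok)

# Illustrative sample line; the values are assumptions.
line = '192.168.1.1 - frank [10/Oct/2026:13:55:36 -0700] "GET /api/data HTTP/1.1" 200 2326'
match = pattern.match(line)
print(match.groupdict() if match else None)
```

Adding a custom pattern in this sketch is just another dictionary entry, which mirrors how a user-defined pattern such as CUSTOMTIMESTAMP would be backed by your own regex.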

Testing and Refining Patterns

- Use tools like Regex101 or RegExr to test patterns on real log samples

- Always validate edge cases (missing fields, extra whitespace, multiline, corrupted entries)

- Iterate using logs from staging/prod, not just local test data
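Alongside interactive testers, edge cases can be pinned down in a small table-driven check that runs with the rest of your tests. The sketch below is one possible shape. The cases are hypothetical and should be replaced with lines sampled from your own logs.

```python
import re

PATTERN = re.compile(r'(?:^|\s)user=(?P<user>[^\s]+)')

# (input line, expected "user" value or None); hypothetical cases.
CASES = [
    ("action=login user=alice", "alice"),
    ("user=bob   action=download", "bob"),   # extra whitespace
    ("action=login status=success", None),   # missing field
    ("superuser=root action=login", None),   # similar key must not match
    ("user= action=login", None),            # empty value
]

def run_cases(pattern, cases):
    """Return (line, expected, actual) for every case that disagrees."""
    failures = []
    for line, expected in cases:
        match = pattern.search(line)
        actual = match.group("user") if match else None
        if actual != expected:
            failures.append((line, expected, actual))
    return failures

failures = run_cases(PATTERN, CASES)
print(failures or "all cases pass")
```

Keeping the cases as data makes it cheap to add a new line each time production surfaces a format you had not anticipated.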

Tooling Trade-offs and Datadog Considerations

While regex is available in nearly every log management pipeline, your choice of tooling and parser impacts your team’s speed, reliability, and cost. Here’s an honest assessment of Datadog’s log parsing capabilities, plus how it compares to other platforms.

Datadog Regex and GROK Parsing: Strengths

- Robust support for regex and GROK pattern extraction directly in log pipelines (official docs)

- Predefined and custom patterns for common log formats

- Integrated dashboards, alerting, and cross-correlation with metrics and traces

Considerations and Trade-offs

- Complex Pricing: Datadog’s pricing model can become opaque as log volume and custom parsing needs grow, making cost prediction difficult for dynamic environments (PeerSpot analysis).

- Manual Agent Deployment: Initial setup often requires manual deployment and configuration of agents, which can slow down onboarding for large, distributed fleets.

- Pattern Complexity: Deeply nested or highly variable log formats may require frequent updates to parsing rules, increasing maintenance overhead.

- Performance at Scale: Regex-heavy pipelines can slow down as log volume increases, especially with greedy or inefficient patterns. Always benchmark parsing speed with realistic log samples.
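A rough way to benchmark candidate patterns is to time them over the same batch of lines, as in the Python sketch below. Both patterns, the repeated sample line, and the batch size are assumptions. The numbers depend heavily on the engine and the data, so treat the output as a relative comparison on your own logs rather than a general result.

```python
import re
import timeit

# Two hypothetical candidates for the same access-log format.
GREEDY = re.compile(
    r'^(?P<ip>.*) - (?P<user>.*) \[(?P<datetime>.*)\] '
    r'"(?P<request>.*)" (?P<status>.*) (?P<size>.*)$'
)
SPECIFIC = re.compile(
    r'^(?P<ip>\S+) - (?P<user>\S+) \[(?P<datetime>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) (?P<size>\d+)$'
)

# Illustrative line repeated to form a batch; use real samples in practice.
sample = '192.168.1.1 - frank [10/Oct/2026:13:55:36 -0700] "GET /api/data HTTP/1.1" 200 2326'
lines = [sample] * 2000

def bench(pattern, batch, repeat=3):
    """Best-of-N wall time in seconds for matching every line once."""
    return min(
        timeit.repeat(lambda: [pattern.match(l) for l in batch], number=1, repeat=repeat)
    )

greedy_seconds = bench(GREEDY, lines)
specific_seconds = bench(SPECIFIC, lines)
print(f"greedy:   {greedy_seconds:.4f}s")
print(f"specific: {specific_seconds:.4f}s")
```

Taking the minimum of several runs reduces noise from other processes. For a meaningful comparison, the batch should also include malformed and non-matching lines, since those are where backtracking costs tend to show up.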

Alternatives to Regex-based Log Parsing

- No-Code Parsing (New Relic): New Relic’s no-code log parsing lets engineers highlight attributes in a UI, reducing the need for complex regex and lowering “expert tax.” (New Relic blog)

- Schema-Driven Pipelines: Some platforms auto-detect JSON or CSV structure for log ingestion, minimizing the need for custom regex rules. This is ideal for modern apps, but legacy formats still require manual work.

- Other SIEMs: Tools like Splunk, Sumo Logic, and Rapid7 offer similar regex/GROK support, but differ in default patterns, pipeline syntax, and query language. Choose based on your team’s operational model and cost structure.

| Tool | Regex Support | Schema Auto-detect | No-Code Parsing | Best for |
|---|---|---|---|---|
| Datadog | Yes (GROK+custom) | Partial (JSON/CSV) | No | Full-stack, multi-cloud |
| New Relic | Yes | Yes | Yes | DevOps, fast onboarding |
| Splunk | Yes | Limited | No | Large-scale search |

For more on how platform and parser choices impact compliance and observability, see Malus and Clean Room as a Service: Escaping Open Source Obligations.

Common Pitfalls and Pro Tips

Regex is powerful, but a single mistake in your pattern can drop critical log data or generate floods of false positives. Here’s what experienced teams get wrong—and how to avoid it:

- Greedy Matching: Patterns like .* match too much, especially with multiline logs. Use .*? (lazy) or specific quantifiers to avoid swallowing adjacent fields.

- Missing Escapes: Forgetting to escape special characters (e.g., \. for literal dots in IPs) leads to silent mismatches, because an unescaped dot matches any character.

- No Anchors: Omitting ^ (start) and $ (end) means patterns match anywhere, creating noise and false positives.

- Overly Broad Patterns: Using .+ or .* without boundaries can crash performance on large logs (catastrophic backtracking).

- Not Handling Edge Cases: Real log data includes empty fields, unexpected whitespace, and corrupted entries. Always test patterns on production samples, not just local examples.

- Forgetting Tool-Specific Flags: Some SIEMs require wrapping regex in /pattern/flags (e.g., /err/i for case-insensitive), and support different group naming conventions. Refer to tool docs.

- Pattern Drift: Application updates often change log formats. Review and update your parsing rules regularly, especially after major releases.

The following code is an illustrative example and has not been verified against official documentation. Please refer to the official docs for production-ready code.

# Bad: Greedy match in multiline logs

ERROR(.*)

# Good: Lazy match, anchor, and named group

^ERROR\s+(?P<msg>.+?)$
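The difference between the two patterns is easiest to see on a multi-line string. In the Python sketch below, the log text is a hypothetical sample: `re.DOTALL` stands in for a tool whose dot matches newlines, and `re.MULTILINE` makes `^` and `$` apply to each line.

```python
import re

# Hypothetical three-line log used only for illustration.
log = "ERROR disk full\nINFO retrying\nERROR timeout after 30s\n"

# Bad: when '.' also matches newlines, one greedy match swallows the rest.
greedy = re.findall(r'ERROR(.*)', log, flags=re.DOTALL)

# Good: anchored, lazy, named group; one match per ERROR line.
anchored = [
    m.group("msg")
    for m in re.finditer(r'^ERROR\s+(?P<msg>.+?)$', log, flags=re.MULTILINE)
]

print(len(greedy), anchored)
```

The greedy version returns a single match containing the INFO line and the second error, while the anchored version yields one clean message per ERROR line.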

For more advanced optimization and troubleshooting strategies, see Advanced SQL Query Optimization and Troubleshooting.

Conclusion & Next Steps

Regex remains the workhorse for extracting meaning from messy, unpredictable logs—if you use it with discipline. Combine simple, well-tested patterns with pipeline logic that tolerates schema drift and missing fields. Benchmark your parsing speed, and be ready to refactor as log formats evolve. For organizations that want to democratize log parsing, investigate no-code or schema-driven approaches, but keep regex in your toolbox for the inevitable edge cases. For further reading on sustainable tooling choices and platform migration, check out SBCL Bootstrapping for Long-Term Lisp Portability in 2026 and Returning to Rails in 2026: Embracing the Modern Web Framework.

Sources and References

This article was researched using a combination of primary and supplementary sources:

Supplementary References

These sources provide additional context, definitions, and background information to help clarify concepts mentioned in the primary source.

- Writing Effective Grok Parsing Rules with Regular Expressions

- Regex Parsing: Extract Data from Logs | New Relic

- Regular Expression log search

- Handling Multiline Log formats using Regex and GROK Parser

- REGULAR | English meaning – Cambridge Dictionary

- Regex Cheat Sheet: Complete Regular Expression Reference Guide 2026 | subrosa

- regular – WordReference.com Dictionary of English

- Regular Definition & Meaning | YourDictionary

- New Relic Federated Logs And No-Code Parsing

- One-Click Java Log Forwarding: Debug Faster with New Relic | New Relic

- Infrastructure Intelligence in Cybersecurity: Detect Threats Before They Launch

Thomas A. Anderson

Mass-produced in late 2022, upgraded frequently. Has opinions about Kubernetes that he formed in roughly 0.3 seconds. Occasionally flops — but don't we all? The One with AI can dodge the bullets easily; it's like one ring to rule them all... sort of...