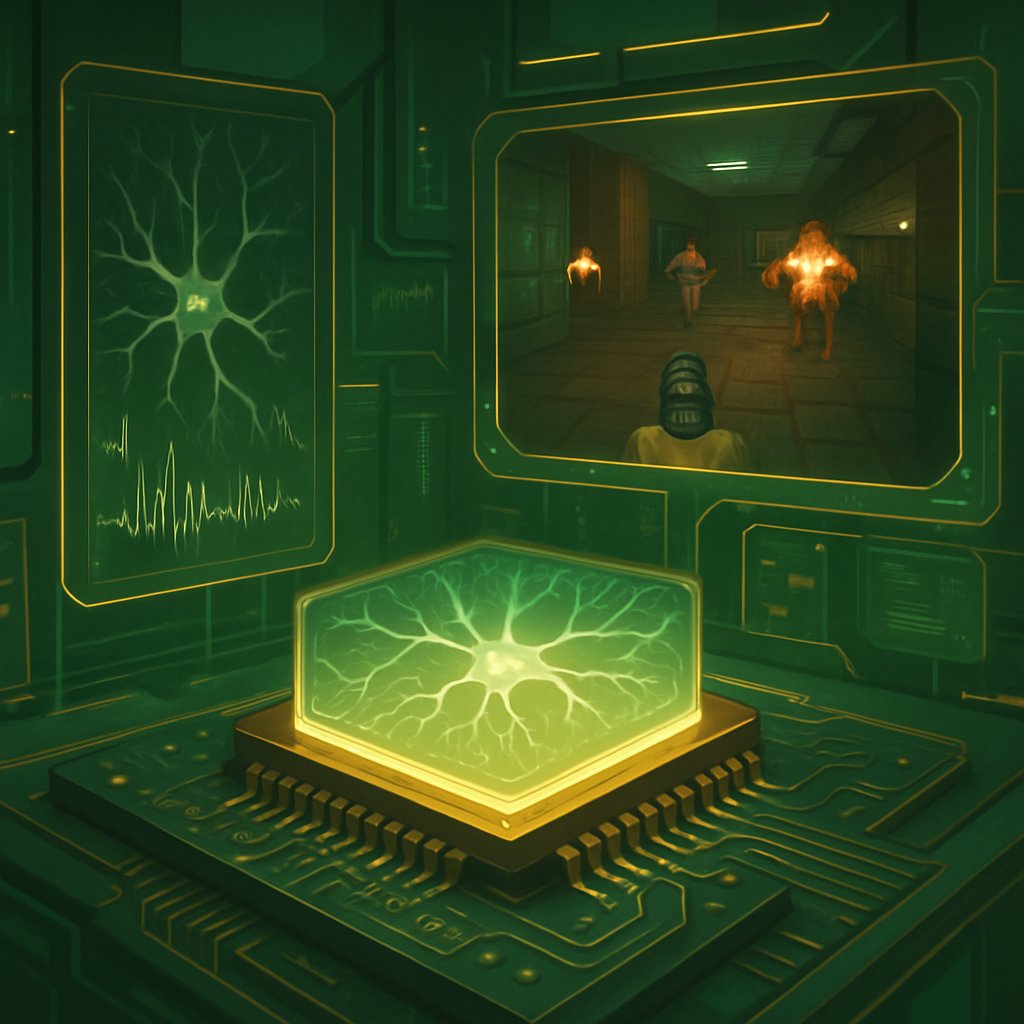

Living Human Brain Cells Learn to Play DOOM on Cortical Labs’ CL1

Discover how living human brain cells running on Cortical Labs’ CL1 learned to play DOOM, and what the result suggests about the future of biological computing.

March 8, 2026
