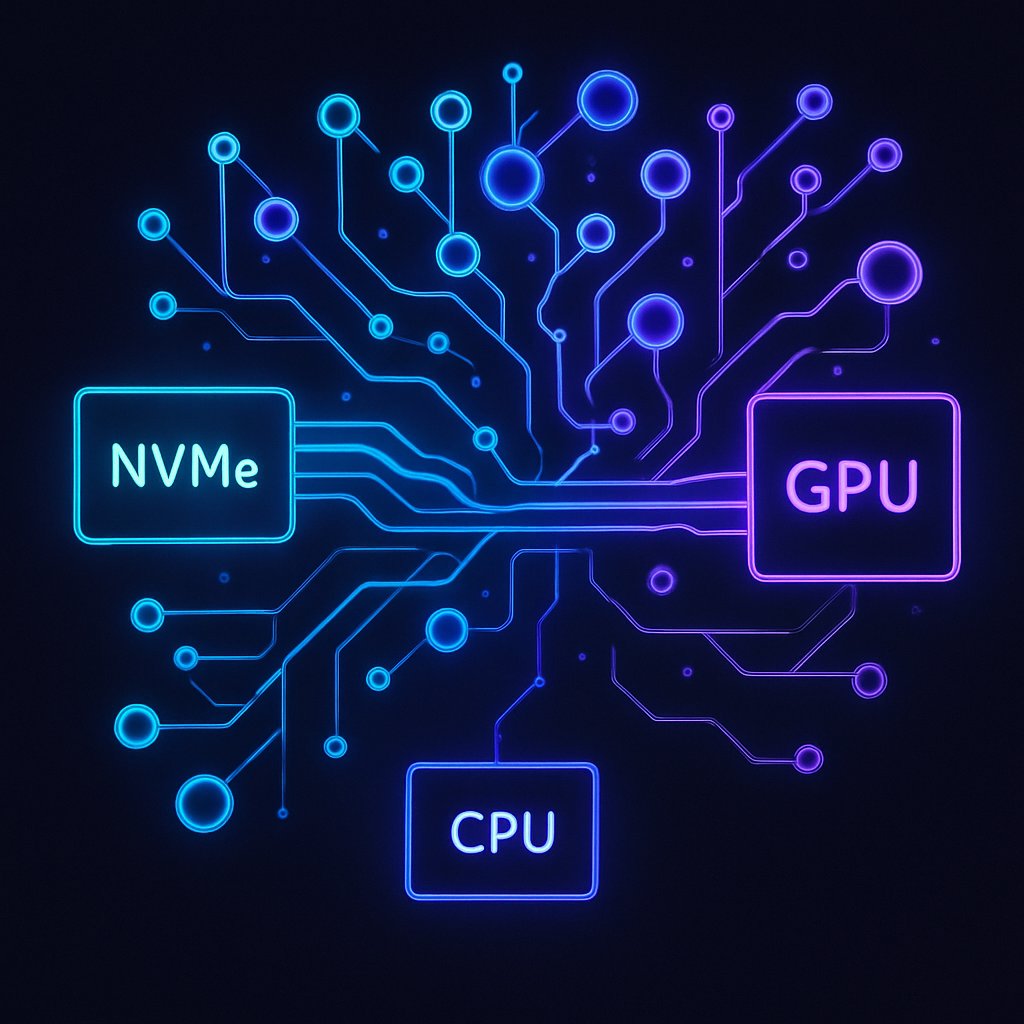

Running Llama 3.1 70B on RTX 3090 via NVMe-to-GPU

Learn how to run Llama 3.1 70B on an RTX 3090 using NVMe-to-GPU transfers that bypass the CPU for efficient local inference.

February 22, 2026
