Cloud Cost Optimization Strategies in 2026: AI-Driven Tiering, Lifecycle, and Deduplication

Introduction: Evolving Cloud Cost Optimization in 2026

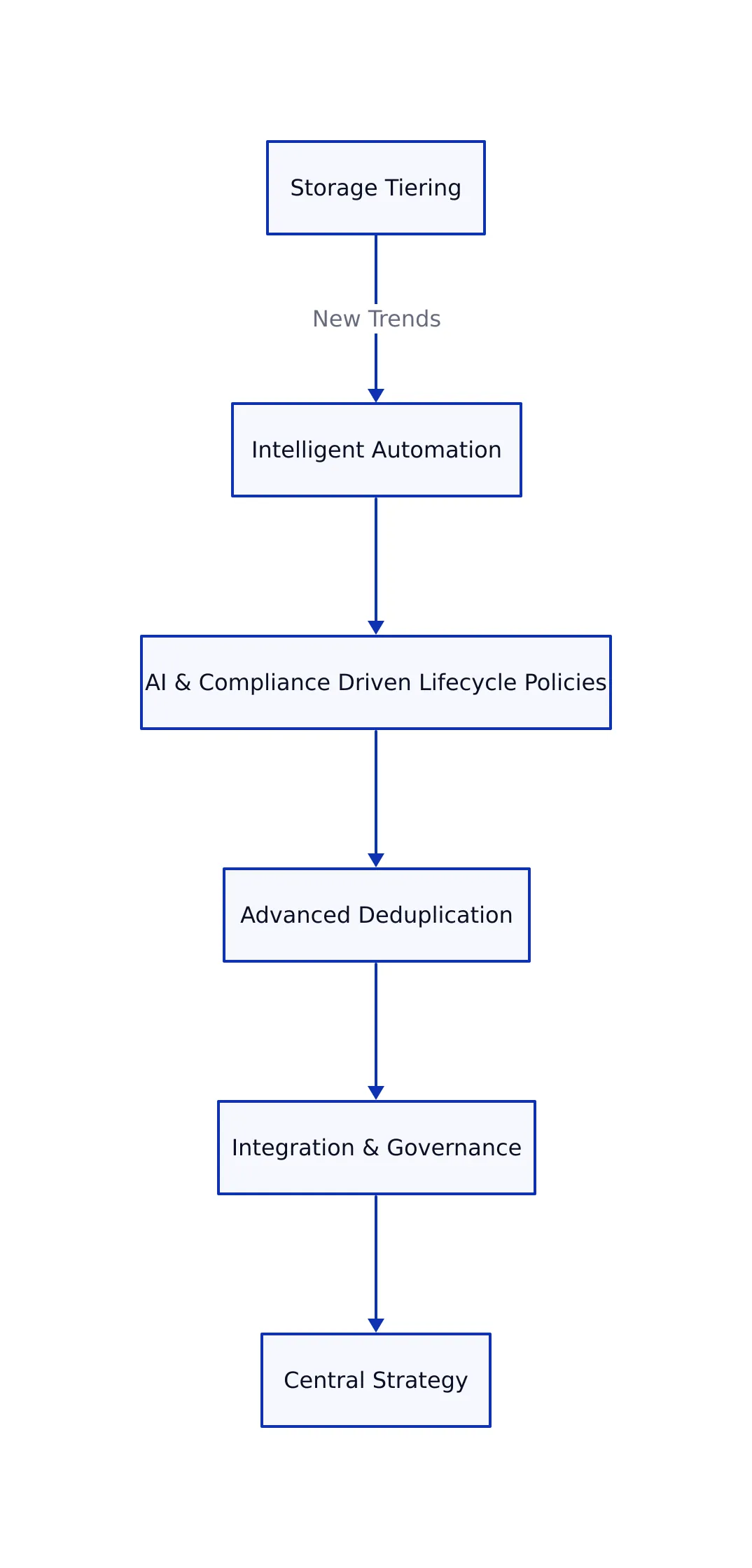

Cloud cost optimization remains paramount in 2026 as enterprises face growing data volumes, multi-cloud complexity, and AI-driven workloads. While tiering, lifecycle policies, and deduplication have long been pillars of cost control, the environment is shifting rapidly. New capabilities driven by automation, AI, and governance frameworks have emerged, enabling organizations to manage cloud spend with greater precision and sustainability.

This article focuses on developments that differentiate 2026’s best practices from prior approaches. We examine how intelligent tiering algorithms, AI-enhanced lifecycle management, and deduplication innovations now support complex and dynamic data environments. Additionally, we discuss the importance of integrated governance and continuous improvement processes that align cost management with business outcomes.

These changes reflect the broader evolution of cloud management strategies. For example, organizations looking to reduce operational expenses are increasingly adopting energy-efficient infrastructure.

New Trends in Storage Tiering and Intelligent Automation

Storage tiering continues to deliver substantial savings by matching data placement to access patterns and cost targets. In 2026, more nuanced approaches have emerged for managing data across multiple storage classes.

- Intelligent Tiering Maturity: Cloud providers’ AI-powered tiering services, such as AWS Intelligent-Tiering, now incorporate per-object monitoring and predictive analytics, so data shifts automatically between storage tiers based on usage patterns. Instead of manually configuring rules for each dataset, teams let the system identify objects that are becoming inactive and move them to a lower-cost tier.

- Hybrid Tiering Strategies: For data with predictable aging patterns, manually configured lifecycle policies still outperform automatic tiering on cost. Many organizations now use a hybrid approach: automatic tiering for variable or unpredictable datasets, and policy-driven tiering for stable, archival data. This combination helps balance both cost and operational overhead.

- Cost Differential Trends: The price gap between frequently accessed (hot) and archival tiers remains significant: archival storage costs around $12 per terabyte per year, while hot tiers exceed $270 per terabyte per year. This difference has a major impact on storage budgets, reinforcing the importance of strategic data placement.
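The effect of that price gap can be estimated with simple arithmetic. The sketch below is a rough illustration only: it treats both quoted figures as annual per-terabyte prices and ignores retrieval fees, request charges, and minimum storage durations, all of which affect real bills. The function names are illustrative.

```python
# Illustrative annual prices per terabyte, taken from the figures quoted above.
HOT_USD_PER_TB_YEAR = 270.0
ARCHIVE_USD_PER_TB_YEAR = 12.0


def annual_cost(hot_tb: float, archive_tb: float) -> float:
    """Return the yearly storage bill for a given hot/archive split."""
    return hot_tb * HOT_USD_PER_TB_YEAR + archive_tb * ARCHIVE_USD_PER_TB_YEAR


def savings_fraction(total_tb: float, archived_share: float) -> float:
    """Fraction of the all-hot bill saved when `archived_share` moves to archive."""
    if total_tb <= 0:
        raise ValueError("total_tb must be positive")
    if not 0.0 <= archived_share <= 1.0:
        raise ValueError("archived_share must be between 0 and 1")
    baseline = annual_cost(total_tb, 0.0)
    tiered = annual_cost(total_tb * (1 - archived_share), total_tb * archived_share)
    return (baseline - tiered) / baseline


if __name__ == "__main__":
    for share in (0.5, 0.85, 1.0):
        print(f"{share:.0%} archived -> {savings_fraction(100, share):.1%} saved")
```

Under these assumptions, moving 85% of a dataset to the archival tier cuts the storage bill by a little over 80%, which shows how much of the saving depends on the share of data that is genuinely cold.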

A practical example is a SaaS provider managing application logs. Active logs remain on standard storage tiers for performance, while logs older than 30 days automatically transition to deep archive tiers, resulting in cost savings of over 80%. Newly developed AI-based tiering tools can also integrate real-time cost and performance analytics, enabling teams to adjust tiering policies dynamically based on current usage trends.
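A 30-day log transition of this kind could be expressed as an S3-style lifecycle rule. The following is a minimal sketch, assuming logs live under a hypothetical `logs/` prefix; the helper name and the bucket name in the comment are placeholders, and the dictionary follows the shape accepted by boto3's `put_bucket_lifecycle_configuration`.

```python
import json


def log_archive_rule(prefix: str = "logs/", days: int = 30) -> dict:
    """Build one S3-style lifecycle rule that archives objects under `prefix`."""
    if days < 1:
        raise ValueError("days must be at least 1")
    return {
        "ID": f"archive-{prefix.strip('/')}-after-{days}d",
        "Status": "Enabled",
        "Filter": {"Prefix": prefix},
        "Transitions": [{"Days": days, "StorageClass": "DEEP_ARCHIVE"}],
    }


lifecycle_configuration = {"Rules": [log_archive_rule()]}
print(json.dumps(lifecycle_configuration, indent=2))

# With boto3 installed and credentials configured, the same dictionary could be
# applied to a bucket (the bucket name here is a placeholder):
#   boto3.client("s3").put_bucket_lifecycle_configuration(
#       Bucket="example-log-bucket",
#       LifecycleConfiguration=lifecycle_configuration,
#   )
```

Building the rule in a function rather than hand-editing JSON keeps the prefix and age threshold reviewable in one place.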

To further understand how storage tiering fits into broader cost control, see Cloud Cost Optimization: Tiering, Lifecycle Policies, and Deduplication for in-depth technical analysis.

Enhanced Lifecycle Policies: AI and Compliance Driven

A lifecycle policy defines automatic actions on stored objects, such as transitioning data to lower-cost storage or deleting it after a set period. These policies have evolved beyond basic age-based transitions and deletions and are now central to automated governance and regulatory compliance frameworks.

- AI-Driven Policy Optimization: Modern lifecycle management tools use AI models to analyze data access patterns, compliance requirements, and cost trends. This enables adaptive lifecycle rules that can automatically adjust retention periods or transition timings, ensuring that data is stored cost-effectively while meeting regulatory needs.

- Policy-as-Code and Auditability: Lifecycle policies are increasingly managed as code, tracked in infrastructure repositories, and tested automatically. This approach allows organizations to version-control policies, conduct compliance audits, and quickly remediate misconfigurations that could lead to data loss or unnecessary spending.

- Compliance Alignment: Automated lifecycle actions are now closely integrated with regulations such as GDPR’s “right to be forgotten” and HIPAA’s retention mandates. For example, a policy can automatically delete personal data after a regulatory retention period, reducing compliance risk and storage bloat.

- Operational Savings: Enhanced policies can identify and remove incomplete uploads, orphaned objects, or redundant snapshots. These cleanups prevent waste that manual processes often miss, helping control hidden costs.

As an example, an organization storing customer documents might set a lifecycle rule to archive files one year after upload, then delete them after a regulatory retention period. These rules are documented and versioned, making audits straightforward and minimizing manual intervention.
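One way to make such rules testable is to keep them as plain data and validate them before deployment. The sketch below is illustrative rather than any provider's API: the `LifecyclePolicy` class, the `validate` function, and the seven-year (2555-day) retention figure are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class LifecyclePolicy:
    """A hypothetical, provider-neutral lifecycle policy kept in version control."""
    name: str
    archive_after_days: int
    delete_after_days: Optional[int] = None  # None means keep forever
    abort_incomplete_uploads_after_days: Optional[int] = None


def validate(policy: LifecyclePolicy, min_retention_days: int) -> List[str]:
    """Return a list of problems; an empty list means the policy passes."""
    problems = []
    if policy.archive_after_days < 0:
        problems.append("archive_after_days must not be negative")
    if policy.delete_after_days is not None:
        if policy.delete_after_days <= policy.archive_after_days:
            problems.append("deletion must happen after the archive transition")
        if policy.delete_after_days < min_retention_days:
            problems.append("deletion happens before the retention period ends")
    if policy.abort_incomplete_uploads_after_days is None:
        problems.append("no cleanup rule for incomplete uploads")
    return problems


# Archive after one year, delete after an assumed seven-year retention period.
customer_docs = LifecyclePolicy("customer-documents", 365, 2555, 7)
print(validate(customer_docs, min_retention_days=2555))
```

A check like this can run in a CI pipeline, so a rule that would delete data too early is rejected in review instead of being discovered after deployment.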

These advancements allow organizations to maintain predictable, auditable storage spending with less manual effort while confidently meeting compliance goals.

Advanced Deduplication: Beyond Backups to AI Workloads

Deduplication technology identifies and eliminates duplicate data, reducing storage requirements. While earlier solutions focused on backup data, deduplication now addresses a wider range of cloud workloads:

- Native Deduplication Gains Traction: Major cloud providers such as AWS and Google Cloud still do not offer built-in deduplication for object storage. However, platforms like Sesame Disk now provide native object-level deduplication, reducing storage needs without extra tools.

- Deduplication in AI and Artifact Storage: Deduplication is increasingly used for storing AI models, container images, and CI/CD artifacts. These workloads often contain redundant data, such as base layers shared across container images. By deduplicating, organizations can reduce storage by 70-95%, which directly lowers costs for development teams and data scientists.

- Hybrid Approaches: Many teams use backup software or storage gateways with deduplication features alongside native cloud storage, optimizing both cost and performance.

- Operational Considerations: The effectiveness of deduplication depends on consistent backup workflows and careful metadata management. Teams should regularly check deduplication ratios and adjust their processes to maintain savings.

For example, a company running frequent machine learning experiments may generate multiple versions of large model files. Deduplication ensures only unique data is stored, while repetitive content is referenced, minimizing total storage use.
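The idea of storing unique data once and referencing it elsewhere can be sketched with a small content-addressed store. This is a toy illustration, not how any particular platform implements deduplication: it assumes fixed-size chunks and SHA-256 digests, whereas production systems often use content-defined chunking, and the `ChunkStore` class and file names are hypothetical.

```python
import hashlib
from typing import Dict, List


class ChunkStore:
    """Toy content-addressed store: each unique chunk is kept exactly once."""

    def __init__(self, chunk_size: int = 4) -> None:
        if chunk_size < 1:
            raise ValueError("chunk_size must be at least 1")
        self.chunk_size = chunk_size
        self.chunks: Dict[str, bytes] = {}          # digest -> chunk bytes
        self.manifests: Dict[str, List[str]] = {}   # object name -> ordered digests

    def put(self, name: str, data: bytes) -> None:
        digests = []
        for start in range(0, len(data), self.chunk_size):
            chunk = data[start:start + self.chunk_size]
            digest = hashlib.sha256(chunk).hexdigest()
            self.chunks.setdefault(digest, chunk)   # store only if unseen
            digests.append(digest)
        self.manifests[name] = digests

    def get(self, name: str) -> bytes:
        return b"".join(self.chunks[d] for d in self.manifests[name])

    def logical_bytes(self) -> int:
        """Total size of all objects as seen by their owners."""
        return sum(len(self.chunks[d]) for m in self.manifests.values() for d in m)

    def stored_bytes(self) -> int:
        """Bytes actually kept after deduplication."""
        return sum(len(c) for c in self.chunks.values())

    def dedup_ratio(self) -> float:
        stored = self.stored_bytes()
        return self.logical_bytes() / stored if stored else 1.0


store = ChunkStore(chunk_size=4)  # tiny chunks so the example is easy to follow
store.put("model-v1.bin", b"AAAABBBBCCCCDDDD")
store.put("model-v2.bin", b"AAAABBBBCCCCEEEE")
print(store.logical_bytes(), store.stored_bytes(), store.dedup_ratio())
```

The two 16-byte "model versions" share three of their four chunks, so 32 logical bytes occupy 20 stored bytes, a ratio of 1.6. Tracking this ratio over time is one way to carry out the regular checks mentioned above.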

The table below compares deduplication support across major cloud providers and platforms as of 2026:

| Provider | Deduplication Support | Scope | Notes |
|---|---|---|---|
| AWS S3 | None (app/tool-level) | Backup and gateway tools | Relies on third-party software for deduplication |
| Azure Blob | None (blob storage) | Block-level for managed disks | Not available for blob storage |
| Google Cloud Storage | None (app/tool-level) | Third-party or custom tooling | Requires external deduplication solutions |
| Sesame Disk | Native deduplication | Object-level | Integrated into platform for all objects |

For a deeper technical review of security and privilege management in modern Linux environments, see Dirty Frag: The Universal Linux Local Privilege Escalation Vulnerability of 2026, which discusses related considerations for backup and storage workflows.

Integration and Governance: A Holistic, Automated Approach

A major development in 2026 is the integration of tiering, lifecycle policies, and deduplication within comprehensive governance frameworks that emphasize automation, real-time visibility, and business alignment. This approach brings together technical and business considerations for managing cloud costs.

- Unified Cost Data Layers: Organizations now consolidate cost and usage data from various clouds and services into normalized platforms. This enables detailed analysis by team, product, or workload, making it easier to identify savings opportunities.

- AI-Powered Forecasting and Anomaly Detection: Machine learning models predict future cost trends and detect anomalies as they arise. For example, if an unexpected spike in storage usage occurs, teams receive immediate alerts, allowing them to respond before costs escalate.

- Policy-as-Code Automation: Tiering and lifecycle rules are defined as code, versioned, and deployed using infrastructure-as-code pipelines. This ensures consistency, facilitates audits, and accelerates updates.

- Business Metric Alignment: Cost management now considers unit economics such as cost per user or cost per AI inference. This provides actionable insights for both product and finance teams.

- Continuous Improvement Cycles: Automated monitoring feeds back into policy tuning and resource rightsizing. This creates a closed-loop optimization process that adapts to changing workloads and business needs.
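Anomaly detection on spend does not have to start with a complex model. As a minimal sketch, assuming daily cost totals are already available as a list of numbers, a trailing-window z-score can flag sudden spikes; the function name, the 7-day window, and the threshold of 3 are illustrative choices, not a recommended configuration.

```python
from statistics import mean, pstdev
from typing import List, Sequence


def find_spend_anomalies(daily_spend: Sequence[float], window: int = 7,
                         threshold: float = 3.0) -> List[int]:
    """Return indexes of days whose spend deviates sharply from the trailing window."""
    if window < 2:
        raise ValueError("window must be at least 2")
    anomalies = []
    for i in range(window, len(daily_spend)):
        history = daily_spend[i - window:i]
        mu = mean(history)
        sigma = pstdev(history)
        if sigma == 0:
            # Flat history: any change at all is treated as anomalous.
            if daily_spend[i] != mu:
                anomalies.append(i)
            continue
        if abs(daily_spend[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


spend = [100, 102, 98, 101, 99, 100, 103, 180, 101]
print(find_spend_anomalies(spend))
```

Here the jump to 180 on the eighth day (index 7) is flagged, while the return to 101 the next day is not. One limitation of this simple approach is that a spike inflates the standard deviation of the following windows, so production tools typically use more robust statistics or learned seasonality.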

For example, a finance team may use a unified dashboard that aggregates cloud spend by product and region. When AI-powered tools detect anomalies or inefficiencies, they can trigger policy updates or resource adjustments automatically, maintaining cost efficiency as environments scale.
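The aggregation behind such a dashboard, and the unit economics it feeds, can be sketched in a few lines. The record layout, product names, regions, and user counts below are invented for illustration; a real cost data layer would draw them from normalized billing exports.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

CostRecord = Tuple[str, str, float]  # (product, region, cost in USD)


def spend_by_product_region(records: Iterable[CostRecord]) -> Dict[Tuple[str, str], float]:
    """Sum cost per (product, region) pair."""
    totals: Dict[Tuple[str, str], float] = defaultdict(float)
    for product, region, cost in records:
        totals[(product, region)] += cost
    return dict(totals)


def unit_cost(total_cost: float, units: int) -> float:
    """Cost per business unit, for example per active user or per inference."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total_cost / units


records = [
    ("search", "eu-west", 1200.0),
    ("search", "eu-west", 300.0),
    ("search", "us-east", 900.0),
    ("billing", "us-east", 450.0),
]
totals = spend_by_product_region(records)
print(totals[("search", "eu-west")])                          # total spend for one slice
print(unit_cost(totals[("search", "eu-west")], units=500))    # cost per user for that slice
```

Dividing each slice by a business denominator such as active users is what turns a raw bill into the cost-per-user figure that product and finance teams can act on.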

Conclusion: Modernizing Your Cloud Cost Optimization Strategy

Tiering, lifecycle policies, and deduplication are long-established cost controls, but 2026 has brought significant evolution in how organizations apply them to cloud storage costs. Intelligent tiering now uses AI to reduce operational overhead while improving cost efficiency. Lifecycle policies have become dynamic, AI-driven, and compliance-aware, automating retention and deletion with audit-ready precision. Deduplication now extends beyond backups, addressing AI model and artifact storage, with native platforms offering integrated solutions.

The real shift is the integration of these techniques into unified cost management frameworks that combine automation, governance, and business alignment. Organizations adopting these new approaches gain not only cost savings but also agility and resilience.

To stay ahead, technical teams should focus on building normalized cost data layers, codifying policies as code, and implementing AI-driven monitoring and forecasting tools. These steps ensure that cloud investments deliver predictable value while minimizing waste and compliance risk.

For a detailed and practical resource on cloud cost management strategies, visit CloudZero’s Complete Guide to Cloud Cost Management 2026.

Key Takeaways:

- AI-powered tiering balances cost and performance dynamically for mixed-access data.

- Lifecycle policies managed as code improve compliance and reduce manual errors.

- Deduplication is essential for AI workloads and artifact storage beyond traditional backups.

- Unified governance frameworks automate cost controls and align spending with business metrics.

Sources and References

This article was researched using a combination of primary and supplementary sources:

Supplementary References

These sources provide additional context, definitions, and background information to help clarify concepts mentioned in the primary source.

- When should you use S3 Intelligent-Tiering vs. lifecycle policies?

- Cloud Backup Cost Optimization Strategies | Veeam

- AI and Cloud Computing Services | Google Cloud

- How to Optimize Cloud Storage Costs with Lifecycle Policies and Tiering

- iCloud

- The Complete Cloud Cost Optimization Guide in 2026 – Holori

- How To Optimize Cloud Costs In 2026 – Forbes

- Smarter cloud cost moves for 2026 success

- Businesses combine tiering, quotas, and AI to cut cloud costs

- Cloud Cost Optimization: Tips to Reduce Spending and Boost Efficiency Without Hurting Performance

- How AI-Powered Optimization Can Define The Next Phase Of Cloud And AI Maturity

- FinOps In 2026: What Modern Cloud Cost Management Looks Like

- The Complete Guide To Cloud Cost Management [2026]

Dagny Taggart
