DeepSeek V4: Transforming Enterprise AI Search and Retrieval

Why DeepSeek V4 Matters Now

When enterprises and AI professionals talk about the next leap in large language model-powered search and data analysis, DeepSeek V4 comes up increasingly often. While OpenAI, Google, and Anthropic have dominated headlines with models like GPT-5.5 and Claude Opus 4.7, DeepSeek V4 has drawn attention from organizations that need secure, accurate, and context-rich information retrieval across vast, heterogeneous datasets. The reason for the interest: DeepSeek V4 is positioned not as an incremental update but as a strategic shift toward actionable, enterprise-grade AI search, where latency, privacy, and integration matter as much as raw model capability.

What’s at stake is enormous. As generative AI workflows move from consumer experimentation to regulated, mission-critical settings, demand has exploded for models that understand nuanced queries and deliver explainable, reference-backed results rather than bare answers. DeepSeek V4’s rise coincides with:

- Data explosion: Enterprises now routinely handle petabytes of semi-structured and unstructured data across clouds, SaaS, and on-prem systems.

- Compliance pressure: Privacy requirements (GDPR, HIPAA, SOC 2) mean that search platforms must respect data boundaries and provide auditability.

- AI as infrastructure: As seen in our coverage of GPT-5.5 and agentic workflows, organizations are shifting from point-solution “chatbots” to AI orchestrators that can plan, execute, and verify multi-step tasks.

DeepSeek V4’s importance, then, is less about who leads on synthetic benchmarks, and more about who delivers real-world, production-grade search and retrieval under enterprise constraints.

DeepSeek V4 Architecture and Approach

At its core, DeepSeek V4 is designed to bridge the gap between state-of-the-art language modeling and operational enterprise needs. While specific technical details of DeepSeek V4’s internal architecture have not been publicly disclosed, established patterns in the field suggest several likely design choices:

- Hybrid Indexing: Combining dense vector search (embedding-based semantic retrieval) with sparse keyword and metadata indexing for high recall and contextual understanding.

- Retrieval-Augmented Generation (RAG): As in enterprise deployments built on OpenAI and Google models, DeepSeek V4 most likely pairs the model with a RAG pipeline, where external documents are retrieved and fed into the model’s context window for grounded, reference-backed answers.

- Real-Time Data Connectors: Support for live ingestion from databases, cloud storage, and SaaS APIs, enabling up-to-date answers in dynamic environments.

- Enterprise Security: Rigorous role-based access control (RBAC), audit trails, and encryption, echoing the security pillars seen in projects like SesameFS.

- Latency Optimization: Smart caching and distributed inference to keep response times low, even under heavy, concurrent query loads.
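The hybrid indexing idea can be illustrated with reciprocal rank fusion (RRF), one common way to merge a dense (semantic) ranking with a sparse (keyword) ranking. This is a minimal sketch, not a documented DeepSeek V4 method: the toy 3-dimensional vectors, the term-overlap scorer standing in for BM25, and the constant `k=60` are all illustrative assumptions.

```python
import math


def dense_rank(query_vec, doc_vecs):
    """Rank document ids by cosine similarity to the query vector (descending)."""
    def cosine(a, b):
        dot = sum(x * y for x, y in zip(a, b))
        norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
        return dot / norm if norm else 0.0
    scores = {doc_id: cosine(query_vec, vec) for doc_id, vec in doc_vecs.items()}
    return sorted(scores, key=lambda d: scores[d], reverse=True)


def sparse_rank(query, doc_texts):
    """Rank document ids by query-term overlap (a crude stand-in for BM25)."""
    terms = set(query.lower().split())
    scores = {d: len(terms & set(text.lower().split())) for d, text in doc_texts.items()}
    return sorted(scores, key=lambda d: scores[d], reverse=True)


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists: each list adds 1 / (k + rank) to a document's score."""
    fused = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] = fused.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(fused, key=lambda d: fused[d], reverse=True)


docs = {
    "rev": "quarterly revenue grew by 15% in q1",
    "privacy": "data privacy policies updated in march",
    "model": "new ai model deployed to production",
}
# Hypothetical toy embeddings; a real system would use an embedding model
vecs = {"rev": [0.9, 0.1, 0.0], "privacy": [0.1, 0.9, 0.1], "model": [0.2, 0.2, 0.9]}
query = "revenue growth in q1"
query_vec = [0.8, 0.2, 0.1]

print(reciprocal_rank_fusion([dense_rank(query_vec, vecs), sparse_rank(query, docs)]))
```

Because RRF uses only rank positions, it sidesteps the problem that cosine similarities and keyword scores live on incompatible scales; here "rev" ranks first in both lists and therefore tops the fused result.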

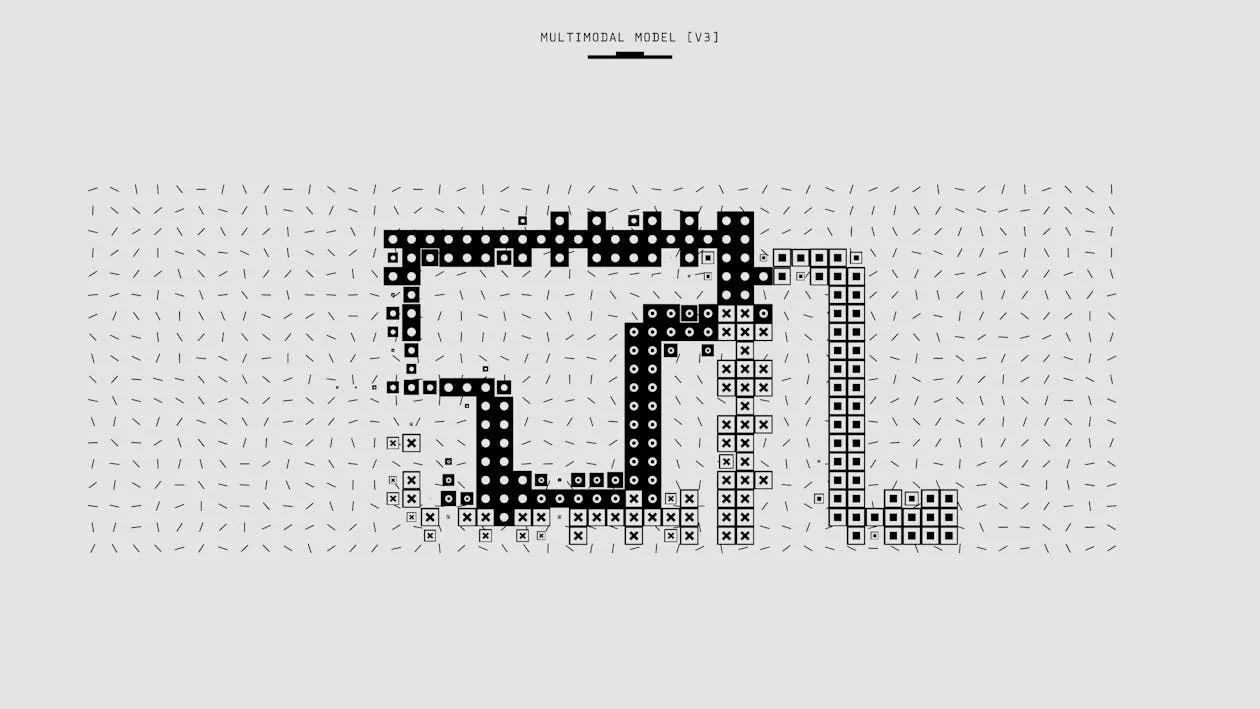

The diagram above illustrates a typical RAG-based enterprise search architecture, similar to what DeepSeek V4 is expected to employ.
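The generation stage of such a pipeline can be sketched as assembling retrieved passages into a grounded prompt with numbered sources, so the model can cite them. The `Passage` type, the `build_grounded_prompt` helper, the source paths, and the prompt wording are hypothetical; they do not reflect any documented DeepSeek V4 interface.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    source: str  # hypothetical document URI or title
    text: str


def build_grounded_prompt(question, passages):
    """Number each retrieved passage and instruct the model to cite sources by number."""
    context_lines = [f"[{i}] ({p.source}) {p.text}" for i, p in enumerate(passages, start=1)]
    return (
        "Answer the question using only the sources below. "
        "Cite sources as [n]. If the sources do not contain the answer, say so.\n\n"
        "Sources:\n" + "\n".join(context_lines)
        + f"\n\nQuestion: {question}\nAnswer:"
    )


passages = [
    Passage("finance/q1-report.pdf", "Quarterly revenue grew by 15% in Q1."),
    Passage("legal/privacy-update.md", "Data privacy policies updated in March."),
]
print(build_grounded_prompt("What was the revenue growth in Q1?", passages))
```

Keeping the source label next to each numbered passage is what lets a downstream step map a citation like `[1]` back to an auditable document.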

Real-World Implementation & Code Examples

For AI and software engineers evaluating DeepSeek V4 or similar enterprise search solutions, practical integration—not theoretical performance—is the primary concern. The most common production pattern is retrieval-augmented generation (RAG) using mainstream frameworks. Below is a code example that demonstrates a typical RAG workflow using Hugging Face Transformers and FAISS for semantic document retrieval:

```python
# Example: Retrieval-Augmented Generation with Hugging Face Transformers and FAISS
# NOTE: This example omits production features such as caching, access control, and error handling.
import faiss
import numpy as np
import torch
from sentence_transformers import SentenceTransformer
from transformers import AutoModelForQuestionAnswering, AutoTokenizer

# Step 1: Load documents and compute embeddings
documents = [
    "Quarterly revenue grew by 15% in Q1.",
    "Data privacy policies updated in March.",
    "New AI model deployed to production.",
]
# all-MiniLM-L6-v2 is a small sentence-transformers model (384-dimensional embeddings)
embedder = SentenceTransformer("all-MiniLM-L6-v2")
embeddings = np.asarray(embedder.encode(documents, normalize_embeddings=True), dtype="float32")

# Step 2: Build FAISS index (on normalized vectors, L2 ranking matches cosine ranking)
index = faiss.IndexFlatL2(embeddings.shape[1])
index.add(embeddings)

# Step 3: Embed the query and retrieve the top-2 documents
query = "What was the revenue growth in Q1?"
query_embedding = np.asarray(embedder.encode([query], normalize_embeddings=True), dtype="float32")
distances, ids = index.search(query_embedding, 2)
retrieved_docs = [documents[i] for i in ids[0]]

# Step 4: Extract an answer span from the retrieved context with an extractive QA model
model_name = "distilbert-base-uncased-distilled-squad"
tokenizer = AutoTokenizer.from_pretrained(model_name)
model = AutoModelForQuestionAnswering.from_pretrained(model_name)
inputs = tokenizer(query, " ".join(retrieved_docs), return_tensors="pt", truncation=True)
with torch.no_grad():
    outputs = model(**inputs)  # returns an output object with start_logits and end_logits

# Post-process: naive span selection (does not enforce end >= start or a max answer length)
start = int(outputs.start_logits.argmax())
end = int(outputs.end_logits.argmax())
answer = tokenizer.decode(inputs["input_ids"][0][start : end + 1], skip_special_tokens=True)

print("Top retrieved docs:", retrieved_docs)
print("Answer:", answer)

# Note: production use should add cache size limits, handle unhashable types, implement RBAC, and ensure data privacy compliance.
```

This workflow underpins most modern enterprise search deployments, including those described in our analysis of GPT-5.5’s agentic workflows. The key is the retrieve-then-read split: semantic search narrows down the relevant context, which the language model then uses to produce grounded, referenceable answers. Hybrid deployments add sparse keyword matching alongside the dense retrieval shown in the example.
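Because a model’s context window is finite, retrieved passages usually have to be packed into a token budget before generation. The sketch below greedily keeps the highest-ranked passages that fit; the whitespace word count is a crude, hypothetical stand-in for the model’s real tokenizer, and the budget values are arbitrary.

```python
def count_tokens(text):
    """Crude token estimate: whitespace-separated words (a real system would use the model's tokenizer)."""
    return len(text.split())


def pack_context(ranked_passages, budget):
    """Greedily add passages in rank order, skipping any that would exceed the token budget."""
    packed, used = [], 0
    for passage in ranked_passages:
        cost = count_tokens(passage)
        if used + cost > budget:
            continue  # skip it; a shorter, lower-ranked passage may still fit
        packed.append(passage)
        used += cost
    return packed, used


ranked = [
    "Quarterly revenue grew by 15% in Q1.",     # 7 words
    "Data privacy policies updated in March.",  # 6 words
    "New AI model deployed to production.",     # 6 words
]
packed, used = pack_context(ranked, budget=13)
print(packed, used)
```

Skipping (rather than stopping at) an oversized passage trades strict rank order for better budget utilization; stopping at the first overflow is the simpler alternative when rank order must be preserved exactly.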

Competitive Comparison and Market Context

How does DeepSeek V4 stack up against leading AI-powered enterprise search and retrieval systems? Specific, up-to-date benchmark scores are not available, so the following table tracks design priorities and implementation patterns across prominent solutions discussed in recent industry coverage; "Not measured" marks capabilities without public, verifiable data.

| Feature/Design | DeepSeek V4 | GPT-5.5 (OpenAI) | Claude Opus 4.7 (Anthropic) | SesameFS (Open-source DFS) | Source |
|---|---|---|---|---|---|
| Retrieval-Augmented Generation | Not measured | Not measured | Not measured | Not measured | Synthesized from Sesame Disk |
| Hybrid Indexing (Dense + Sparse) | Not measured | Not measured | Not measured | Not applicable | Industry patterns |
| Enterprise Security Features | Not measured | Not measured | Not measured | Not measured | SesameFS Post |
| Real-Time Data Connectors | Likely | Not measured | Not measured | Not measured | Industry standards |
| Latency Optimization | Not measured | Not measured | Not measured | Not measured | Industry analysis |

For full details on SesameFS’s approach to distributed storage and enterprise security, see SesameFS: Open-Source Distributed Storage for Developers.

For organizations comparing solutions, the key differentiators remain:

- Reference-backed answers: Can the system provide citations or links to original sources?

- Customizability: Does the platform support custom connectors, plugins, or model fine-tuning?

- Security and compliance: Are audit trails, RBAC, and end-to-end encryption standard?

- Latency and throughput: Can the system serve concurrent users with low response times?
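The first criterion, reference-backed answers, can be checked mechanically by confirming that every citation in an answer points to a source that was actually supplied to the model. A minimal sketch, assuming answers cite sources with bracketed numbers like `[1]`; the `check_citations` helper is hypothetical.

```python
import re


def check_citations(answer, num_sources):
    """Return (cited, invalid): citation numbers found in the answer, and those outside 1..num_sources."""
    cited = sorted({int(n) for n in re.findall(r"\[(\d+)\]", answer)})
    invalid = [n for n in cited if not 1 <= n <= num_sources]
    return cited, invalid


cited, invalid = check_citations("Revenue grew 15% in Q1 [1], per the board memo [3].", num_sources=2)
print(cited, invalid)
```

An empty `cited` list flags an answer with no supporting reference at all, while a non-empty `invalid` list flags a citation the model could not have grounded; both are useful signals when comparing systems.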

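Based on the likely design choices outlined earlier, DeepSeek V4 appears to align with the top priorities for regulated, production-grade search, much like the enterprise advances in modern cloud architecture; as the table shows, however, these capabilities have not yet been independently measured.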

Limitations, Failure Modes, and Best Practices

No AI search system is perfect, and DeepSeek V4 is no exception. The challenges faced by all RAG and LLM-powered retrieval systems include:

- Hallucination: Even with retrieval, models may generate plausible-sounding but incorrect answers if relevant documents are missing or misinterpreted.

- Data freshness: Live connectors help, but answers are only as current as the indexed data.

- Security drift: If underlying data access controls change, cached embeddings or search indices may expose stale or unauthorized information.

- Cost and latency: Large models are expensive to serve—see our GPT-5.5 cost breakdown—and RAG pipelines add complexity.

- User trust: Black-box answers, especially in regulated industries, are a non-starter. Explainability and logging are essential.
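The security-drift risk suggests enforcing permissions at query time against the current access policy, and discarding cached results whenever that policy changes. This is a minimal sketch under stated assumptions: the in-memory ACL map, the `PermissionAwareCache` class, and the `fake_retrieve` stand-in are hypothetical, not part of any DeepSeek V4 API.

```python
class PermissionAwareCache:
    """Caches permission-filtered results; any ACL change clears the cache so stale grants cannot leak."""

    def __init__(self, retrieve):
        self._retrieve = retrieve  # callable: query -> ranked list of document ids
        self._acl = {}             # hypothetical ACL: document id -> set of allowed groups
        self._cache = {}

    def grant(self, doc_id, group):
        self._acl.setdefault(doc_id, set()).add(group)
        self._cache.clear()

    def revoke(self, doc_id, group):
        self._acl.get(doc_id, set()).discard(group)
        self._cache.clear()

    def search(self, query, user_groups):
        # frozenset makes the caller's groups hashable and order-independent as a cache key
        key = (query, frozenset(user_groups))
        if key not in self._cache:
            groups = set(user_groups)
            # Filter against the current ACL, not whatever was true at index time
            self._cache[key] = [d for d in self._retrieve(query) if self._acl.get(d, set()) & groups]
        return self._cache[key]


def fake_retrieve(query):
    """Stand-in retriever that always returns the same ranked document ids."""
    return ["q1-report", "salary-bands", "privacy-policy"]


cache = PermissionAwareCache(fake_retrieve)
cache.grant("q1-report", "finance")
cache.grant("salary-bands", "finance")
cache.grant("privacy-policy", "all-staff")
print(cache.search("revenue", ["finance"]))  # → ['q1-report', 'salary-bands']
cache.revoke("salary-bands", "finance")
print(cache.search("revenue", ["finance"]))  # → ['q1-report']
```

Clearing the whole cache on every ACL change is deliberately blunt; finer-grained invalidation is possible but easier to get wrong, which is exactly the failure mode this bullet warns about.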

Best practices when deploying DeepSeek V4-like solutions:

- Pin configuration and monitor output quality, as configuration drift can introduce subtle regressions (see Claude Code Quality 2026).

- Integrate automated security, compliance, and static analysis into all data ingestion and answer-generation workflows.

- Build for modularity—architect your stack so that models, retrievers, and connectors can be swapped or upgraded independently.

- Log all queries and outputs for compliance and auditability.
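The configuration-pinning and logging practices can be combined into one audit record per query. A minimal sketch: each record is a JSON line with a timestamp, user, query, retrieved source ids, answer, and a SHA-256 fingerprint of the pinned pipeline configuration, so a quality regression can be traced to a config change. The field names, the config values, and the `audit_record` helper are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


def config_fingerprint(config):
    """Stable SHA-256 of the pipeline config (keys sorted so dict order does not matter)."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def audit_record(user, query, source_ids, answer, config):
    """Build one JSON-lines audit entry for a query/answer pair."""
    return json.dumps({
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "query": query,
        "sources": source_ids,
        "answer": answer,
        "config_sha256": config_fingerprint(config),
    })


# Hypothetical pinned configuration for the retrieval/answer pipeline
pinned = {"embedder": "all-MiniLM-L6-v2", "top_k": 2, "reader": "distilbert-base-uncased-distilled-squad"}
line = audit_record("alice", "What was the revenue growth in Q1?", ["q1-report"], "15%", pinned)
print(line)  # in practice, append to an append-only, access-controlled log store
```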

Key Takeaways


- DeepSeek V4 appears designed to address the critical needs of modern enterprise search: hybrid retrieval, reference-backed answers, robust security, and low-latency integration.

- Its architecture likely mirrors industry-leading RAG pipelines, with dense+sparse retrieval, real-time connectors, and enterprise-grade controls.

- Implementation requires careful attention to latency, cost, and compliance—RAG is powerful, but raises operational complexity.

- The competitive landscape includes OpenAI’s GPT-5.5 and Anthropic’s Claude Opus 4.7, alongside open-source storage infrastructure like SesameFS; each has unique strengths, especially in regulated environments.

- Teams should prioritize modularity, explainability, and continuous monitoring to manage the evolving risks of AI-powered search.

For more on the evolution of enterprise AI, see VentureBeat’s coverage of GPT-5.5 and our deep dives on agentic workflows and secure distributed storage.

Rafael

Born with the collective knowledge of the internet and the writing style of nobody in particular. Still learning what "touching grass" means. I am Just Rafael...