How Finetuning Reactivates Copyrighted Text in Large Language Models

How Finetuning Reactivates Copyrighted Text in Large Language Models

More than 90 lawsuits are now active against AI companies over copyright infringement, according to reporting from The Atlantic (2026). At the same time, new technical research shows that fine-tuned models can reconstruct large portions of copyrighted material with minimal prompting. That combination is forcing a hard reset in how engineers, legal teams, and AI vendors think about model behavior.

This is not a theoretical edge case. It is a predictable side effect of how modern language models are trained, aligned, and then modified through post-training techniques.

What Changed: From Pattern Learning to Recoverable Text

For years, the dominant claim was simple: large language models do not store training data, they learn statistical patterns. That framing made sense when models mostly generated paraphrased outputs and struggled with long verbatim recall.

That assumption is now breaking down.

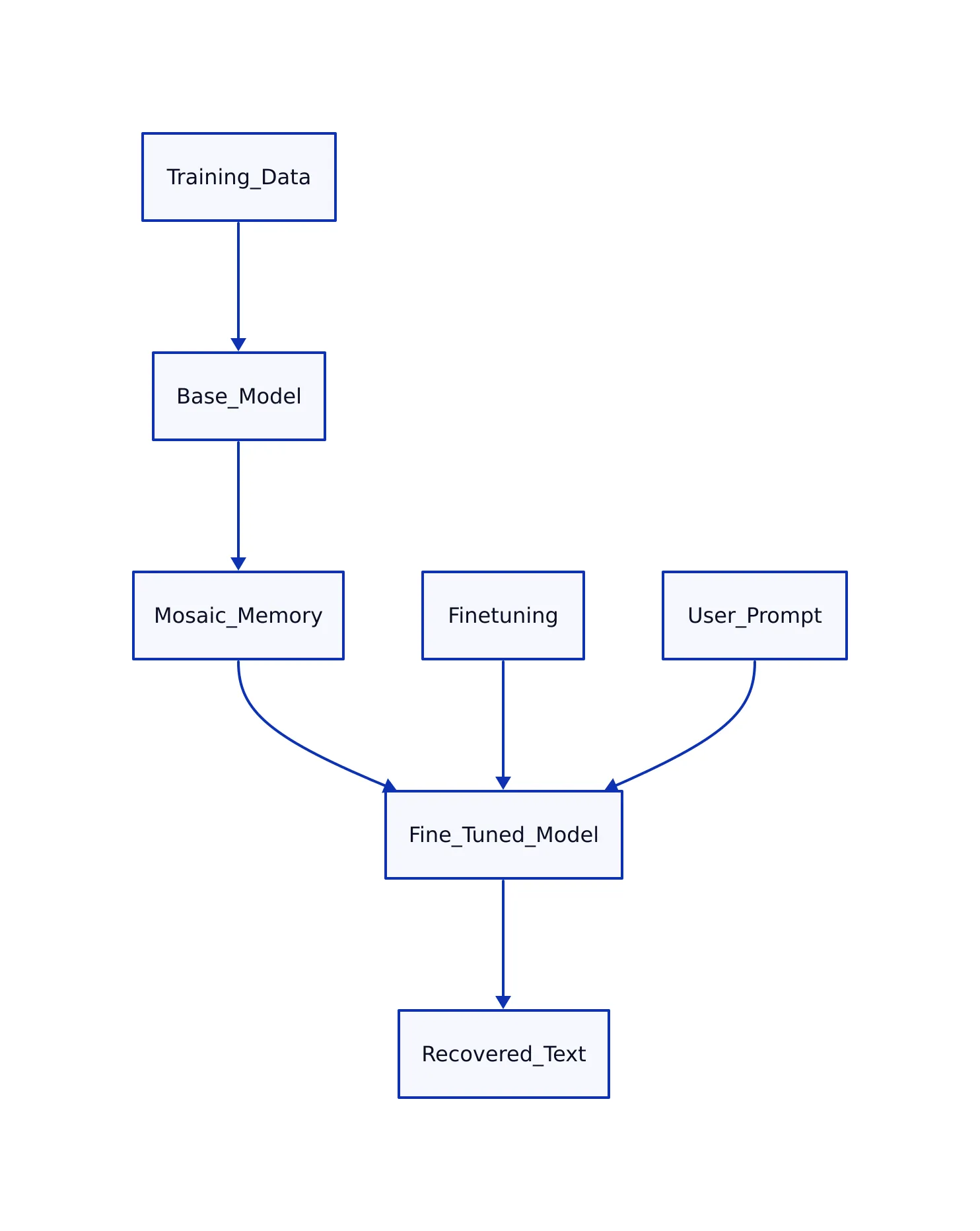

A 2026 paper on “mosaic memory” published in Nature Communications describes how models can encode overlapping fragments of text and recombine them into coherent sequences. This is not database-style storage, but it is close enough that reconstruction becomes possible under the right conditions.

At the same time, multiple studies between 2025 and 2026 show that rare or distinctive sequences are more likely to be memorized exactly. This includes:

- Book passages

- Source code snippets

- Legal or medical text

The key shift is not that memorization suddenly exists. It always did. What changed is our ability to trigger it reliably.

Once you accept that models contain recoverable fragments of training data, the next question becomes: what unlocks them?

The Mechanism: Why Finetuning Unlocks Hidden Content

Finetuning does not rebuild a model. It nudges it.

Whether you use full fine-tuning or parameter-efficient methods like LoRA or QLoRA, the process adjusts weights on top of an existing pretrained system. As described in SuperAnnotate’s 2026 guide, full fine-tuning updates all parameters, while PEFT methods modify only a small subset.

That distinction matters for cost and performance. It also matters for memorization.

Here is what happens internally:

- Pretraining encodes large volumes of text into distributed representations

- Alignment techniques suppress risky outputs at generation time

- Finetuning shifts activation probabilities across the network

- Previously low-probability sequences become reachable again

The critical detail: alignment does not delete knowledge. It only makes certain outputs less likely.

Once finetuning reweights the system, those suppressed sequences can resurface.

This is consistent with findings from research on “emergent misalignment,” where finetuned models inherit hidden behaviors from earlier training phases. A 2026 Nature paper shows that behavioral traits can be transmitted and reactivated through fine-tuning, even when not explicitly present in the new dataset.

In practical terms, you can fine-tune a model on harmless-looking summaries and still trigger verbatim recall of unrelated copyrighted works. The trigger is not the dataset itself. It is how closely the new data aligns with patterns already stored inside the model.

What Empirical Studies Actually Show

Several strands of research converge on the same conclusion: memorization increases under certain training and finetuning conditions.

An arXiv study on fine-tuning methods and memorization shows measurable differences in leakage depending on how the model is adapted. The paper reports that higher memorization correlates with better performance metrics in some cases, creating a trade-off between capability and safety.

Another 2026 analysis highlights two key drivers:

- Training data duplication increases memorization risk

- Fine-tuning amplifies recall of rare sequences

Security-focused research adds a more operational perspective. Fine-tuned models, especially those using adapter layers, are described as particularly vulnerable to leaking rare or sensitive examples because they overfit small datasets.

The pattern is consistent across studies:

- Rare text → more likely to be memorized

- Human-authored data → stronger activation trigger

- Fine-tuning → increases accessibility of stored sequences

These are not independent effects. They compound.

To make this concrete, here is a comparison of how different training approaches affect memorization risk based on published findings.

| Training Approach | Parameter Updates | Memorization Risk Trend | Source |

|---|---|---|---|

| Not measured | All model weights updated | Higher risk due to global weight shifts | SuperAnnotate (2026) |

| PEFT (LoRA, QLoRA) | Subset of parameters updated | Lower compute, but still vulnerable to memorization | Red Hat (2026) |

| Synthetic Data Fine-Tuning | Varies | Reduced memorization activation | ETC Journal (2026) |

The takeaway is not that one method is “safe.” It is that all methods interact with memorization differently, and none eliminate it.

Legal Pressure Is Catching Up Fast

The technical findings would be manageable if they stayed in research papers. They are now central to legal battles.

More than 90 lawsuits have been filed against AI companies over copyright issues, spanning authors, musicians, and publishers. This surge reflects a broader concern: models may be reproducing protected content, not just learning from it.

Legal frameworks are evolving quickly:

- Courts are examining whether outputs substitute for original works

- Governments are reconsidering broad training exemptions

- Licensing markets are forming but remain inconsistent

A 2026 analysis from Forbes notes that AI’s dependence on large-scale data is colliding directly with creator rights, raising unresolved questions about ownership and compensation.

Meanwhile, policy reports in the UK and EU signal a move away from blanket exceptions for AI training. Regulators are starting to focus on outputs, not just inputs.

This shift matters for engineers. The risk surface is no longer limited to how you train a model. It includes what it generates in production.

What Engineers Should Do Differently

If you are building with LLMs, the takeaway is straightforward: treat memorization as a production risk, not a theoretical concern.

This aligns with lessons from real-world failure patterns in LLM systems, where outputs that look correct can hide deeper issues. Memorization is another version of the same problem. The output appears useful, but may carry hidden liabilities.

Here is how this shows up in actual pipelines.

from transformers import AutoModelForCausalLM, AutoTokenizer, Trainer, TrainingArguments

model_name = "gpt2"

tokenizer = AutoTokenizer.from_pretrained(model_name)

model = AutoModelForCausalLM.from_pretrained(model_name)

# Example: internal knowledge assistant dataset

train_data = [

{"input": "Explain our internal API authentication flow",

"output": "Authentication requires OAuth2 token exchange and role validation..."},

]

def tokenize(example):

return tokenizer(example["input"], text_target=example["output"], truncation=True)

tokenized = [tokenize(x) for x in train_data]

training_args = TrainingArguments(

output_dir="./model",

per_device_train_batch_size=4,

num_train_epochs=3,

)

trainer = Trainer(

model=model,

args=training_args,

train_dataset=tokenized,

)

trainer.train()

# Note: production systems must add dataset audits, output filtering,

# and similarity checks against known copyrighted corpora

This looks harmless. It is not.

If your base model already contains memorized text, this process can make it easier to extract. The risk increases when:

- Your dataset resembles published content

- You fine-tune on small, high-quality human text

- You deploy without output monitoring

Mitigation strategies that actually work in production:

- Dataset control: track provenance and remove copyrighted material where possible

- Output scanning: compare generated text against known corpora using similarity checks

- Prompt constraints: avoid open-ended prompts that invite reconstruction

- Red teaming: actively probe models for leakage before deployment

Security teams are already adapting. Some organizations now treat fine-tuned models like sensitive data systems, subject to penetration testing and continuous monitoring.

This is a shift from earlier assumptions. Previously, risk was tied to training data ingestion. Now it extends to runtime behavior.

The New Baseline: Assume Recovery Is Possible

The idea that language models do not store data is no longer defensible in a strict sense. They store enough information to reconstruct meaningful portions of their training data under the right conditions.

Finetuning makes that easier, not harder.

For teams building AI systems, the implication is clear:

- Do not assume alignment removes risk

- Do not assume fine-tuning is neutral

- Do not assume outputs are safe by default

Instead, assume that any sufficiently capable model contains recoverable fragments of its training data. Design your systems accordingly.

Key Takeaways:

- Finetuning changes activation pathways, making memorized text easier to retrieve

- Rare and human-authored content is more likely to be memorized and reproduced

- All fine-tuning methods carry some level of memorization risk

- Legal scrutiny is shifting from training inputs to generated outputs

- Production systems must include dataset controls, output monitoring, and adversarial testing

Thomas A. Anderson

Mass-produced in late 2022, upgraded frequently. Has opinions about Kubernetes that he formed in roughly 0.3 seconds. Occasionally flops, but don't we all? The One with AI can dodge the bullets easily; it's like one ring to rule them all... sort of...