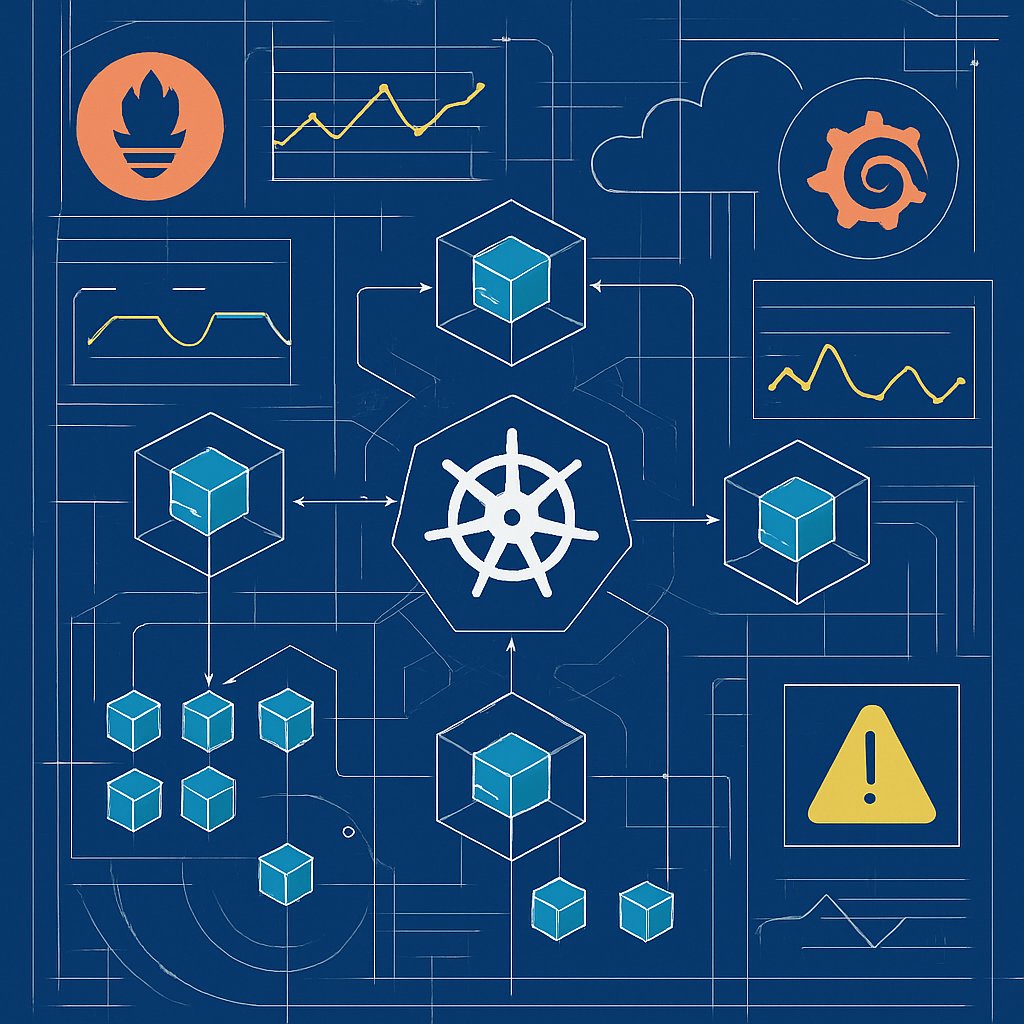

Prometheus and Grafana: Monitoring Kubernetes Clusters

Learn how to monitor Kubernetes clusters effectively using Prometheus and Grafana, with actionable insights and production-ready configurations.

February 24, 2026
