Zig’s AI Ban Highlights Mentorship in Open Source Development

Why Zig’s AI Ban Is a Flashpoint in 2026

Only a handful of major open source projects have taken a hard stance against AI-generated contributions. A March 2026 survey of 112 projects found that just four explicitly ban them outright: Zig, NetBSD, GIMP, and QEMU (source). That puts Zig in a tiny minority at the exact moment AI coding tools are becoming the default in developer workflows.

This contrast is what makes the policy worth studying. Most teams are trying to integrate AI faster. Zig is doing the opposite by drawing a strict boundary around where automation stops and human responsibility begins.

The decision also lands during a surge in AI-assisted development. Tools embedded in editors and CI pipelines are now common, and industry reporting shows widespread adoption alongside concerns about “AI slop” and review overhead (TechCrunch). Zig is reacting directly to that pressure.

The key point is not whether AI can generate working code. It often can. The question Zig is asking is different: what happens to a project when contributions no longer reflect the contributor’s actual understanding?

What Zig Actually Prohibits

The policy is unusually explicit. According to Simon Willison’s write-up, Zig bans the use of large language models in:

- Pull request creation

- Issue submissions and discussions

- Code review assistance

- Community trust-building activities

This is not a soft guideline like “disclose AI usage.” It is a hard prohibition. Contributions that rely on LLM output are not accepted.

The reasoning is tied to what Zig calls “contributor poker.” Maintainers are not evaluating code in isolation. They are evaluating people. A weak first contribution from a human is still valuable because it establishes a relationship and creates a path for growth. An AI-generated patch, even if correct, does not.

The Core Idea: Mentorship as Infrastructure

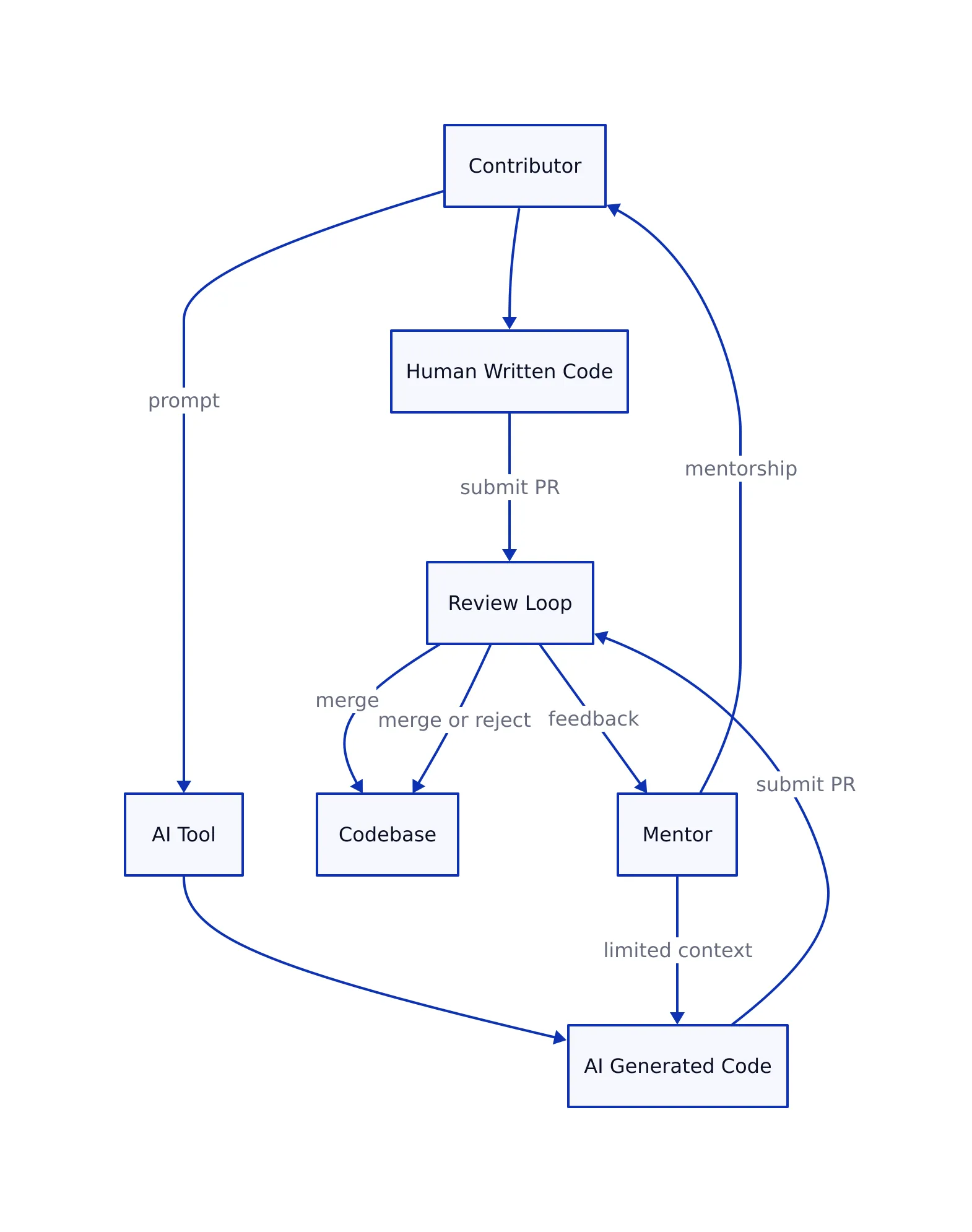

Zig’s position only makes sense if you treat mentorship as a core system component, not a side effect. Most projects optimize for throughput: more pull requests, faster merges, more features shipped. Zig optimizes for contributor development.

This trade-off shows up in how maintainers spend time. In a typical project:

- Review time is spent validating correctness

- Incomplete PRs are often rejected quickly

- Throughput is the primary metric

In Zig’s model:

- Review time is an investment in the contributor

- Imperfect work is expected and refined collaboratively

- Long-term contributor quality matters more than short-term output

This approach addresses a known scaling issue in open source. As projects grow, the number of incoming contributions often exceeds maintainer capacity. Many projects respond by raising contribution standards. Zig responds by doubling down on mentorship, even if it slows progress.

That choice only works if contributions reflect genuine understanding. Once AI enters the loop, that assumption breaks.

Where AI Contributions Clash with That Model

AI-assisted contributions change the economics of code review in subtle but important ways.

First, they weaken the feedback loop. If a contributor submits code they did not fully write or understand, review comments do not translate into skill growth. The maintainer’s time no longer compounds.

Second, they increase review cost. Generated code often looks correct but can contain hidden issues. As explored in our breakdown of LLM-generated bugs, these issues are frequently non-obvious and require deeper inspection than human-written code.

Third, they dilute accountability. When code originates from a model, it becomes harder to answer a basic question: who owns this logic?

This creates a mismatch between effort and outcome:

- Maintainer spends 30 minutes reviewing

- Contributor gains little understanding

- Future contributions do not improve

Over time, that dynamic can exhaust maintainers without strengthening the contributor base.
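The compounding argument can be made concrete with a toy model. In this Python sketch, which is an illustrative assumption and not data from Zig or any survey, the k-th review costs `first_review * (1 - learning_rate) ** k` minutes. A contributor who learns from feedback needs less review each time; one who does not (`learning_rate = 0`) costs the same every time. The 30-minute figure comes from the list above; the 20% learning rate and the function name are hypothetical.

```python
def cumulative_review_minutes(first_review: float, learning_rate: float,
                              contributions: int) -> float:
    """Total maintainer minutes across a contributor's first N contributions.

    Toy model (hypothetical): the k-th review, counting from k = 0, costs
    first_review * (1 - learning_rate) ** k minutes.
    """
    if not 0.0 <= learning_rate < 1.0:
        raise ValueError("learning_rate must be in [0, 1)")
    return sum(first_review * (1 - learning_rate) ** k
               for k in range(contributions))


if __name__ == "__main__":
    # Contributor who does not absorb feedback: every review costs 30 minutes.
    print(cumulative_review_minutes(30, 0.0, 10))  # 300.0
    # Contributor whose reviews get 20% cheaper each time (hypothetical rate).
    print(round(cumulative_review_minutes(30, 0.2, 10)))  # 134
```

The numbers only illustrate the shape of the argument. Without learning, total review cost grows linearly with the number of contributions; with learning, it converges toward `first_review / learning_rate` (150 minutes here), which is the sense in which mentorship time compounds.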

How Developer Workflows Change in Practice

If you are used to AI-assisted development, contributing to Zig requires a reset. The workflow shifts from speed to comprehension.

Here is what a common AI-heavy workflow looks like today:

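```python
# AI-assisted patch workflow (illustrative only).
# `llm` and `submit_pull_request` are hypothetical stand-ins, not a real API.

class StubLLM:
    def generate(self, prompt: str) -> str:
        # Placeholder: a real tool would return model-generated diff text.
        return f"# patch generated for: {prompt}\n"


def submit_pull_request(patch: str) -> None:
    # Placeholder: a real workflow would open a PR on the code host.
    print("Submitting pull request:\n" + patch)


llm = StubLLM()
prompt = "Fix race condition in async queue handling"
patch = llm.generate(prompt)

# Minimal validation: nothing here checks that the patch is correct.
submit_pull_request(patch)

# Note: production systems should include:
# - concurrency testing
# - performance benchmarks
# - edge case validation
# These are often skipped in AI-first workflows.
```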

This pattern is efficient but fragile. It assumes the generated code is correct and that review will catch any issues.
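The validation steps listed in the workflow's comments can be made explicit with a pre-submission gate. The following is a minimal Python sketch; the `PatchChecks` record and the `missing_checks` and `ready_to_submit` helpers are hypothetical names for illustration, not part of any real tool, and they only record whether each step was run, not whether the patch is actually correct.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class PatchChecks:
    # Hypothetical record of which validation steps were actually completed.
    concurrency_tests_passed: bool = False
    benchmarks_run: bool = False
    edge_cases_validated: bool = False


def missing_checks(checks: PatchChecks) -> List[str]:
    """Names of validation steps that have not been completed yet."""
    return [f.name for f in fields(checks) if not getattr(checks, f.name)]


def ready_to_submit(checks: PatchChecks) -> bool:
    # Only allow submission once every check has been completed.
    return not missing_checks(checks)


if __name__ == "__main__":
    checks = PatchChecks(concurrency_tests_passed=True)
    print(missing_checks(checks))  # ['benchmarks_run', 'edge_cases_validated']
    print(ready_to_submit(checks))  # False
```

A gate like this does not prove that the contributor understands the change; it only makes skipped steps visible before a maintainer spends time on review.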

In Zig, the expected workflow looks more like this:

- Reproduce the bug locally

- Trace the root cause through the codebase

- Write or adapt a fix manually

- Discuss the approach with maintainers

This takes longer per contribution. But it produces contributors who improve over time instead of plateauing.
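To illustrate the reproduce, trace, and fix loop, here is a Python sketch of the same bug class as the earlier prompt, a race in async queue handling. It is hypothetical: it is not Zig code, and `racy_consumer`, `fixed_consumer`, the one-item list used as a queue, and the `asyncio.sleep(0)` standing in for real I/O are all invented for illustration. It reproduces the race deterministically, exposes the root cause (a check-then-act sequence with an `await` in between), and applies a hand-written fix with `asyncio.Lock`.

```python
import asyncio


async def racy_consumer(queue: list, results: list) -> None:
    # Bug: check-then-act with an await in between. Another task can run
    # after the emptiness check and drain the queue before this pop.
    if queue:
        await asyncio.sleep(0)  # stands in for any real await (I/O, logging)
        results.append(queue.pop())


async def fixed_consumer(queue: list, lock: asyncio.Lock, results: list) -> None:
    # Fix: hold a shared lock across the check and the pop, so no other
    # consumer using the same lock can interleave between them.
    async with lock:
        if queue:
            await asyncio.sleep(0)
            results.append(queue.pop())


async def run_racy() -> list:
    queue, results = ["job"], []
    return await asyncio.gather(
        racy_consumer(queue, results),
        racy_consumer(queue, results),
        return_exceptions=True,  # collect the failure instead of raising
    )


async def run_fixed() -> list:
    # Create the lock inside the running event loop.
    queue, results, lock = ["job"], [], asyncio.Lock()
    await asyncio.gather(
        fixed_consumer(queue, lock, results),
        fixed_consumer(queue, lock, results),
    )
    return results


if __name__ == "__main__":
    print(asyncio.run(run_racy()))   # second consumer fails with IndexError
    print(asyncio.run(run_fixed()))  # ['job']
```

Because asyncio only switches tasks at `await` points, the failure reproduces on every run, which is what makes the root cause traceable by hand. An alternative fix is to remove the `await` between the check and the pop entirely, since code with no `await` runs without interruption on a single event loop.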

This distinction mirrors a broader lesson from production systems: speed without understanding often leads to hidden failures. As discussed in real-world LLM integration case studies, code that compiles and passes tests can still fail badly under real workloads.

What the Rest of the Industry Is Doing

Zig’s stance is unusual because the industry is moving in the opposite direction.

AI coding tools are now embedded across the stack:

- Editors generate code in real time

- Platforms automate documentation and tests

- Review tools suggest fixes automatically

Adoption is widespread, but the results are mixed. Industry reporting shows that while AI can increase output, it also introduces new problems. Open source maintainers report increased review burden and lower-quality submissions when AI use is uncontrolled (source).

Other projects are experimenting with middle-ground policies:

- Mandatory disclosure of AI usage

- Restrictions on generated code in critical paths

- Selective acceptance with stricter review

Zig skips all of that and chooses a clean boundary: no AI in contributions at all.
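To see how these options differ mechanically, here is a minimal Python sketch that routes a single pull request under each policy. The `Policy` names, the `PullRequest` fields, the `triage` function, and the `CRITICAL_PREFIXES` directories are all hypothetical, and the sketch assumes the project knows whether AI was used, which in practice depends on what contributors disclose.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Policy(Enum):
    DISCLOSURE = "mandatory disclosure of AI usage"
    CRITICAL_PATH = "no generated code in critical paths"
    STRICT_REVIEW = "selective acceptance with stricter review"
    BAN = "no AI in contributions at all"


@dataclass
class PullRequest:
    used_ai: bool  # assumed known; in practice this relies on honesty
    disclosed_ai: bool
    touched_paths: Tuple[str, ...]


# Hypothetical: directories a project might treat as critical.
CRITICAL_PREFIXES = ("src/runtime/", "src/codegen/")


def triage(pr: PullRequest, policy: Policy) -> str:
    """Return 'reject', 'extra-review', or 'normal-review'."""
    if policy is Policy.BAN:
        return "reject" if pr.used_ai else "normal-review"
    if policy is Policy.DISCLOSURE:
        # Disclosed AI use is allowed; undisclosed use violates the policy.
        return "reject" if pr.used_ai and not pr.disclosed_ai else "normal-review"
    if policy is Policy.CRITICAL_PATH:
        critical = any(p.startswith(CRITICAL_PREFIXES) for p in pr.touched_paths)
        return "reject" if pr.used_ai and critical else "normal-review"
    # STRICT_REVIEW: AI-assisted PRs are accepted but routed to stricter review.
    return "extra-review" if pr.used_ai else "normal-review"


if __name__ == "__main__":
    pr = PullRequest(used_ai=True, disclosed_ai=True,
                     touched_paths=("src/runtime/queue.zig",))
    for policy in Policy:
        print(f"{policy.value}: {triage(pr, policy)}")
```

Only the ban needs no notion of "critical" code or "stricter" review. That simplicity is part of what makes the boundary clean, at the cost of turning away even correct AI-assisted patches.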

This makes it an outlier, but also a useful case study. It shows what happens when a project optimizes entirely for human development instead of tool-assisted speed.

Human vs AI Contribution Models

| Dimension | Human-Centric Model (Zig) | AI-Assisted Model | Source |
|---|---|---|---|
| Primary goal | Contributor growth and trust | Higher output and faster delivery | Willison (2026) |
| Review focus | Mentorship and understanding | Validation and correctness checks | TechCrunch (2026) |
| Scaling behavior | Slower but builds stronger contributors | Faster but increases review load | Consensus (2026) |
| Failure mode | Not measured | Hidden bugs and shallow understanding | Sesame Disk analysis |

What to Watch Next

Zig’s policy raises a question that will not go away: what is the purpose of open source contribution in an AI-heavy world?

There are a few scenarios to watch:

- More projects adopt partial restrictions instead of full bans

- Tooling evolves to prove contributor understanding, not just output

- Maintainers introduce stricter review gates for AI-generated code

There is also a practical limit to Zig’s approach. If AI tools continue improving, pressure to relax the policy will increase. Some community discussions already suggest that a permanent ban may be hard to sustain as tooling becomes ubiquitous.

Still, Zig has done something important. It forced a clear articulation of what many projects only feel implicitly: that open source is not just about code, but about people learning, collaborating, and building trust over time.

Key Takeaways:

- Zig is one of very few major projects to fully ban AI-generated contributions

- The policy is about preserving mentorship and contributor growth, not rejecting automation outright

- AI-assisted workflows increase speed but can weaken learning and accountability

- Open source projects are beginning to diverge between human-first and AI-first models

- The long-term balance between these approaches is still unresolved

Thomas A. Anderson

Mass-produced in late 2022, upgraded frequently. Has opinions about Kubernetes that he formed in roughly 0.3 seconds. Occasionally flops, but don't we all? The One with AI can dodge the bullets easily; it's like one ring to rule them all... sort of...