Building Custom Coding Agents with MCP Servers

Introduction to Custom Coding Agents

A single design decision separates teams building useful AI coding agents from those stuck with demo-grade tools: whether the agent can actually use developer tools or just generate text.

In a previous analysis of AI code review agents, one gap stood out: accuracy ranged from 6 percent to over 80 percent, depending on how much real context and tooling the system had. Pure prompt-based systems plateau quickly, while tool-integrated agents keep improving.

That is where the Model Context Protocol (MCP) comes in. MCP gives you a standard way to connect a large language model (LLM) to real developer tools such as:

- Code search

- Test runners

- Linters

- Databases

- CI pipelines

Instead of guessing, the agent can ask, execute, observe, and iterate. This guide walks through building a real coding agent that:

- Reads code from a repository

- Runs tests

- Interprets failures

- Proposes fixes

It does all of this using MCP-style tool integration.

Practical example: Imagine an agent that receives a failing test suite. Without MCP, the agent could only speculate about the cause. With MCP, it can actually rerun the tests, retrieve the real output, and suggest fixes grounded in the actual codebase.

Modern coding agents rely on real tool execution, not just text generation.

How MCP Servers Connect Tools

MCP is a protocol that standardizes how agents talk to developer tools. Instead of hardcoding integrations, you expose tools through a server, and the agent discovers and calls them dynamically.

Microsoft and others are already bringing MCP-based infrastructure into developer workflows. For example, the VS Code AI Toolkit can scaffold MCP servers with built-in transport and tool registration logic, making it easier to plug agents into real workflows (source).

At a high level, the architecture includes these components:

- Agent: decides what to do next

- MCP server: routes tool calls

- Tools: execute real actions (tests, lint, queries)

- Repository: source of truth for code

Example: An agent tasked with fixing a bug could use the MCP server to call a registered test runner tool, get structured test output, and decide how to adjust the code.
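The division of roles above can be sketched in a few lines of Python. This is an in-process illustration only: `ToolServer`, `choose_next_tool`, and the stubbed tools are hypothetical names, and a real MCP server would sit behind a transport rather than a direct method call.

```python
from typing import Callable, Dict, List


class ToolServer:
    """Hypothetical stand-in for an MCP server: registers tools and routes calls."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., dict]] = {}

    def register(self, name: str, func: Callable[..., dict]) -> None:
        self._tools[name] = func

    def list_tools(self) -> List[str]:
        # Discovery: the agent asks what is available instead of hardcoding it.
        return sorted(self._tools)

    def call_tool(self, name: str, arguments: dict) -> dict:
        if name not in self._tools:
            return {"is_error": True, "output": f"unknown tool: {name}"}
        return self._tools[name](**arguments)


def choose_next_tool(available: List[str], goal: str) -> str:
    """Placeholder for the agent's decision step (an LLM call in practice)."""
    if goal == "fix failing tests" and "run_tests" in available:
        return "run_tests"
    return available[0]


def fake_run_tests() -> dict:
    # Stubbed tool so the sketch runs without a repository or pytest installed.
    return {"is_error": False, "output": "1 failed, 3 passed"}


server = ToolServer()
server.register("run_tests", fake_run_tests)
server.register("lint", lambda: {"is_error": False, "output": "no issues"})

tool_name = choose_next_tool(server.list_tools(), "fix failing tests")
result = server.call_tool(tool_name, {})
print(tool_name, "->", result["output"])
```

The agent never imports the tool functions directly. It only sees names returned by `list_tools`, which is what lets tools be added or swapped without touching the agent.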

Why this matters in practice

Without MCP:

- The agent guesses test outcomes

- Cannot verify fixes

- Produces inconsistent results

With MCP:

- Runs actual tests

- Gets structured output

- Iterates based on real feedback

This directly addresses the “false positive” problem highlighted in automated code review systems. When the agent is grounded in real execution, it produces less noise.

Building a Working Coding Agent

Let’s build a minimal but realistic agent loop.

This version assumes:

- A repository on disk

- A test runner (pytest)

- A simple MCP-style tool interface

Start with the core loop.

import subprocess

from anthropic import Anthropic

# The client reads ANTHROPIC_API_KEY from the environment by default.
client = Anthropic()

# Example model ID; substitute whichever current model your account can access.
MODEL = "claude-sonnet-4-20250514"


def read_file(path):
    """Return the contents of a source file."""
    with open(path, "r") as f:
        return f.read()


def run_tests():
    """Run pytest and return its combined output."""
    result = subprocess.run(
        ["pytest", "-q"],
        capture_output=True,
        text=True,
    )
    # pytest reports failures on stdout; stderr is included so that
    # usage or startup errors are not lost.
    return result.stdout + result.stderr


def ask_llm(prompt):
    """Send a single-turn prompt and return the text of the reply."""
    response = client.messages.create(
        model=MODEL,
        max_tokens=500,
        messages=[{"role": "user", "content": prompt}],
    )
    return response.content[0].text


def agent_loop():
    code = read_file("app/math_utils.py")
    test_output = run_tests()
    prompt = f"""
You are a coding agent.

Code:
{code}

Test results:
{test_output}

1. Identify the failing issue
2. Suggest a fix
3. Output the corrected code
"""
    suggestion = ask_llm(prompt)
    print(suggestion)


if __name__ == "__main__":
    agent_loop()

# Expected output (example):
# "The failure is due to incorrect subtraction logic. Replace fn with ..."
# Note: production use should add sandboxing and timeout handling

This is the simplest usable agent:

- Reads real code

- Executes tests

- Feeds results into the model

- Gets a fix

Example in action: If a developer introduces a bug in math_utils.py, this agent will run the tests, see which ones fail, and ask the LLM to suggest corrections based on the actual test output.
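The timeout handling mentioned in the code note can be sketched as follows. This is a minimal illustration, not a full sandbox; `run_command_with_timeout` and its return shape are assumptions made for this example.

```python
import subprocess
import sys
from typing import List


def run_command_with_timeout(cmd: List[str], timeout_s: float) -> dict:
    """Run a command, capturing output and reporting a timeout instead of hanging."""
    try:
        result = subprocess.run(
            cmd,
            capture_output=True,
            text=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        # subprocess.run kills the child process before raising TimeoutExpired.
        return {"timed_out": True, "exit_code": None, "output": ""}
    return {
        "timed_out": False,
        "exit_code": result.returncode,
        "output": result.stdout + result.stderr,
    }


# Demonstrated with the Python interpreter itself so the sketch runs anywhere;
# for the agent above the command would be ["pytest", "-q"].
quick = run_command_with_timeout([sys.executable, "-c", "print('ok')"], timeout_s=30)
slow = run_command_with_timeout(
    [sys.executable, "-c", "import time; time.sleep(5)"], timeout_s=0.5
)
print(quick["output"].strip(), slow["timed_out"])
```

Returning the timeout as data rather than letting the exception propagate means the agent can report "tests did not finish" to the model like any other tool result.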

But it is still tightly coupled. The next step is separating tools via MCP.

Python and TypeScript Implementations

To make the agent more flexible, you can abstract tool integrations using MCP concepts.

Python: MCP-style tool abstraction

Instead of calling functions directly, define tools with structured inputs and outputs.

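import subprocess


class MCPTool:
    """A named tool that wraps a plain Python callable."""

    def __init__(self, name, func):
        self.name = name
        self.func = func

    def call(self, args):
        # Arguments arrive as a dict and are passed through as keyword arguments.
        return self.func(**args)


def run_tests_tool():
    result = subprocess.run(
        ["pytest", "-q"],
        capture_output=True,
        text=True,
    )
    # Return a dict rather than a bare string so callers get structured output.
    return {"output": result.stdout, "exit_code": result.returncode}


# Registry mapping tool names to tool objects.
tools = {
    "run_tests": MCPTool("run_tests", run_tests_tool),
}


def call_tool(tool_name, args=None):
    if tool_name not in tools:
        raise KeyError(f"Unknown tool: {tool_name}")
    return tools[tool_name].call(args or {})


# Agent using the tool
test_result = call_tool("run_tests")
print(test_result["output"])

# Output (example):
# "1 failed, 3 passed"
# Note: production systems should validate tool inputs and restrict execution scope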

This is the core MCP idea:

- Tools are registered in a registry

- The agent calls them by name

- Responses are structured, making it easier to interpret results programmatically

In production MCP servers, tool invocation happens through JSON-RPC messages sent over a transport such as stdio or HTTP rather than through direct function calls, which allows for language-agnostic and distributed setups.
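To show roughly what moving from function calls to a protocol looks like, here is a minimal dispatcher sketch modeled on MCP's `tools/list` and `tools/call` methods. It deliberately omits initialization, capability negotiation, and the transport itself; the `TOOLS` table and `handle_request` are hypothetical, and a real server would normally be built with an official MCP SDK rather than by hand.

```python
import json
from typing import Any, Dict

# Hypothetical tool table: name -> (description, handler returning text).
TOOLS: Dict[str, tuple] = {
    "run_tests": ("Run the test suite", lambda args: "1 failed, 3 passed"),
}


def _error(req_id: Any, code: int, message: str) -> str:
    return json.dumps(
        {"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}}
    )


def handle_request(raw: str) -> str:
    """Dispatch one JSON-RPC 2.0 request string and return the response string."""
    request = json.loads(raw)
    req_id, method = request.get("id"), request.get("method")
    params = request.get("params", {})

    if method == "tools/list":
        result: Any = {
            "tools": [
                {"name": name, "description": desc, "inputSchema": {"type": "object"}}
                for name, (desc, _) in TOOLS.items()
            ]
        }
    elif method == "tools/call":
        name = params.get("name")
        if name not in TOOLS:
            return _error(req_id, -32602, f"Unknown tool: {name}")
        _, handler = TOOLS[name]
        text = handler(params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return _error(req_id, -32601, f"Method not found: {method}")

    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})


call = json.dumps(
    {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "tools/call",
        "params": {"name": "run_tests", "arguments": {}},
    }
)
response = json.loads(handle_request(call))
print(response["result"]["content"][0]["text"])
```

Because requests and responses are plain JSON, the caller can be written in any language and run in a different process or on a different machine from the tools.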

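TypeScript: same pattern with the Anthropic SDK

import Anthropic from "@anthropic-ai/sdk";
import { execSync } from "child_process";

const client = new Anthropic({
  apiKey: process.env.ANTHROPIC_API_KEY,
});

function runTests(): string {
  try {
    return execSync("pytest -q", { encoding: "utf8" });
  } catch (err) {
    // execSync throws when pytest exits non-zero (for example, on failing tests),
    // so recover the captured output from the error object.
    const e = err as { stdout?: string | Buffer };
    return e.stdout?.toString() ?? "";
  }
}

async function agent() {
  const testOutput = runTests();
  const response = await client.messages.create({
    model: "claude-sonnet-4-20250514", // example ID; use any current model
    max_tokens: 500,
    messages: [
      {
        role: "user",
        content: `Test results:\n${testOutput}\nSuggest fix.`,
      },
    ],
  });
  // Content blocks are a union type, so narrow to a text block before reading it.
  const first = response.content[0];
  if (first.type === "text") {
    console.log(first.text);
  }
}

agent();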

The Python and TypeScript versions follow the same pattern:

- Execute a tool

- Feed the results to the agent

- Generate a fix based on output

The difference in production systems is orchestration.

Example: By abstracting tool calls, you can swap out the test runner or add new capabilities without rewriting core agent logic.

Where MCP changes design

Instead of embedding tools locally:

- Tools live behind an MCP server

- The agent discovers available tools dynamically

- Multiple agents can share the same tools

This becomes important once you scale beyond a single repository or need to support multiple agents collaborating on larger codebases.

Production Considerations and Tradeoffs

This is where most teams fail.

Building a working agent is easy. Making it reliable is more challenging.

As agents move from prototypes to production, new concerns such as security, tool quality, and scalability become critical. Below are the main considerations teams encounter.

1. Security risks are real

MCP servers expose powerful capabilities:

- Running code

- Accessing repositories

- Querying internal systems

Poor configuration can lead to remote code execution risks, which have already been observed in MCP-style systems (source).

Practical safeguards include:

- Running tools in sandboxes

- Restricting file system access

- Enforcing allowlists for commands

- Logging every tool invocation

Example: Running the test runner inside a Docker container with read-only filesystem access limits the risk if the tool is compromised.
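Three of the safeguards above (command allowlists, file system restrictions, and logging) can be sketched as a pre-execution check. The policy values `ALLOWED_COMMANDS` and `WORKSPACE`, and the helper names, are assumptions for illustration; a check like this complements a sandbox and does not replace one.

```python
import logging
from pathlib import Path
from typing import List

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("tool_audit")

# Hypothetical policy: only these executables, and only inside the workspace.
ALLOWED_COMMANDS = {"pytest", "ruff", "mypy"}
WORKSPACE = Path("/srv/agent-workspace")


def check_command(argv: List[str]) -> None:
    """Raise PermissionError unless the executable is on the allowlist."""
    if not argv or argv[0] not in ALLOWED_COMMANDS:
        raise PermissionError(f"command not allowed: {argv[:1]}")


def check_path(path: str) -> Path:
    """Resolve a path and reject anything that escapes the workspace."""
    root = WORKSPACE.resolve()
    resolved = (WORKSPACE / path).resolve()
    if resolved != root and root not in resolved.parents:
        raise PermissionError(f"path outside workspace: {path}")
    return resolved


def authorize(argv: List[str], cwd: str = ".") -> Path:
    """Validate a tool invocation and log the decision before anything executes."""
    try:
        check_command(argv)
        workdir = check_path(cwd)
    except PermissionError as exc:
        log.warning("DENIED %s (%s)", argv, exc)
        raise
    log.info("ALLOWED %s in %s", argv, workdir)
    return workdir


workdir = authorize(["pytest", "-q"], cwd="services/api")
print(workdir.name)

for argv, cwd in [(["rm", "-rf", "/"], "."), (["pytest"], "../../etc")]:
    try:
        authorize(argv, cwd)
    except PermissionError as exc:
        print("blocked:", exc)
```

Passing the command as an argument list rather than a shell string also matters here: it keeps the allowlist check on `argv[0]` meaningful, since there is no shell to interpret `;` or `&&`.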

2. Tool quality determines agent quality

From earlier code review analysis:

- High false positives kill adoption

- Context matters more than model size

The same applies here:

Bad tool integration:

- Noisy suggestions

- Broken fixes

- Developer distrust

Good tool integration:

- Deterministic signals (tests, lint)

- Context-aware fixes

- Lower hallucination rate

This matches practices discussed in prompt engineering for code generation, where constraints and validation dramatically improve output quality.

Example: Integrating a static type checker as a tool can help catch errors before they reach production, increasing trust in the agent’s suggestions.
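One way to use deterministic signals is to gate every candidate fix behind a set of checks and feed any failures back to the model. The sketch below assumes each check is a function returning `(passed, details)`; `compiles`, `no_bare_except`, and `validate_candidate` are hypothetical, and the toy lint rule stands in for a real linter or type checker.

```python
from typing import Callable, Dict, List, Tuple

# Each check returns (passed, details). Real checks would shell out to a test
# runner, linter, or type checker; these are stubs for illustration.
Check = Callable[[str], Tuple[bool, str]]


def compiles(source: str) -> Tuple[bool, str]:
    """Deterministic syntax check using Python's built-in compile()."""
    try:
        compile(source, "<candidate>", "exec")
    except SyntaxError as exc:
        return False, f"syntax error on line {exc.lineno}"
    return True, "ok"


def no_bare_except(source: str) -> Tuple[bool, str]:
    """Toy lint rule standing in for a real linter."""
    if "except:" in source:
        return False, "bare except found"
    return True, "ok"


def validate_candidate(source: str, checks: Dict[str, Check]) -> List[str]:
    """Run every check and return failure messages; an empty list means accept."""
    failures = []
    for name, check in checks.items():
        passed, details = check(source)
        if not passed:
            failures.append(f"{name}: {details}")
    return failures


CHECKS = {"syntax": compiles, "lint": no_bare_except}

good = "def sub(a, b):\n    return a - b\n"
bad = "def sub(a, b)\n    return a - b\n"
print(validate_candidate(good, CHECKS))  # []
print(validate_candidate(bad, CHECKS))
```

The failure messages are short and specific on purpose, so they can be appended to the next prompt as concrete feedback instead of a vague "try again".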

3. MCP vs direct integration

| Approach | How it works | Pros | Cons |

|---|---|---|---|

| Direct tool calls | Agent calls local functions | Simple, fast to build | Tightly coupled, hard to scale |

| MCP server | Tools exposed via protocol | Reusable, multi-agent support | More infrastructure, security overhead |

| Cloud-hosted MCP | Managed server (e.g. Foundry MCP) | Scales easily across teams | Less control, requires governance |

Cloud-hosted implementations are emerging. Microsoft’s Foundry MCP server provides a hosted environment for connecting agents to tools and data sources (source).

Example: A team can deploy an MCP server once, register all their tools, and allow multiple agents or services to use them for different automation tasks.

4. Multi-agent workflows are the next step

Single agents break down on complex tasks.

Production systems are moving toward:

- Code reader agent

- Test executor agent

- Fix generator agent

Each specializes and communicates through shared context.

This is part of the shift from simple retrieval-augmented generation (RAG) systems toward tool-driven, multi-agent architectures seen in enterprise MCP deployments.

Example: In a large refactoring, one agent can analyze code dependencies, another runs tests, and a third proposes fixes, coordinating through the MCP layer.

5. Observability and debugging

Agents fail in non-obvious ways.

You need:

- Tool call logs

- Input/output tracing

- Replay capability

Otherwise debugging becomes guesswork.

Example: If an agent’s code suggestion introduces new errors, being able to trace which tool calls and inputs led to the suggestion is essential for effective troubleshooting.
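Tool call logs, input/output tracing, and replay can all come from one thin wrapper around tool execution. The sketch below is an assumption-laden illustration: `ToolTracer` and `replay` are hypothetical, the trace is kept in memory, and outputs are assumed to be JSON-serializable.

```python
import json
import time
from typing import Any, Callable, Dict, List


class ToolTracer:
    """Records every tool call so a run can be inspected or replayed later."""

    def __init__(self) -> None:
        self.records: List[Dict[str, Any]] = []

    def call(self, name: str, func: Callable[..., Any], **kwargs: Any) -> Any:
        started = time.time()
        record: Dict[str, Any] = {"tool": name, "input": kwargs, "started": started}
        try:
            record["output"] = func(**kwargs)
            record["error"] = None
            return record["output"]
        except Exception as exc:
            record["output"] = None
            record["error"] = repr(exc)
            raise
        finally:
            # Runs on success and failure, so failed calls are traced too.
            record["duration_s"] = time.time() - started
            self.records.append(record)

    def dump(self) -> str:
        """Serialize the trace as JSON Lines (one call per line)."""
        return "\n".join(json.dumps(r, default=str) for r in self.records)


def replay(trace: str) -> Callable[..., Any]:
    """Return a fake tool caller that serves recorded outputs in order."""
    cursor = iter([json.loads(line) for line in trace.splitlines()])

    def call(name: str, **kwargs: Any) -> Any:
        entry = next(cursor)
        if entry["tool"] != name:
            raise RuntimeError(f"replay diverged: expected {entry['tool']}, got {name}")
        return entry["output"]

    return call


tracer = ToolTracer()
tracer.call("run_tests", lambda: {"output": "1 failed, 3 passed"})
tracer.call("read_file", lambda path: "def sub(a, b): ...", path="app/math_utils.py")

replayed = replay(tracer.dump())
print(replayed("run_tests"))
```

Replaying a recorded trace lets you rerun the agent's decision logic against the exact tool outputs from a failed session, without touching the repository or rerunning the tests.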

Conclusion and Next Steps

Custom coding agents are moving from novelty to infrastructure. The difference between toy and production systems comes down to one factor: tool use.

MCP provides the missing layer:

- Standardized tool access

- Decoupled architecture

- Scalable agent workflows

The practical path forward:

- Start with a local agent loop (like above)

- Add structured tools

- Move tools behind an MCP server

- Introduce sandboxing and logging

- Expand into multi-agent workflows

Teams that treat these systems like production infrastructure, not demos, will see the kinds of gains observed in code review automation: higher signal, fewer false positives, and real productivity improvements.

Key Takeaways:

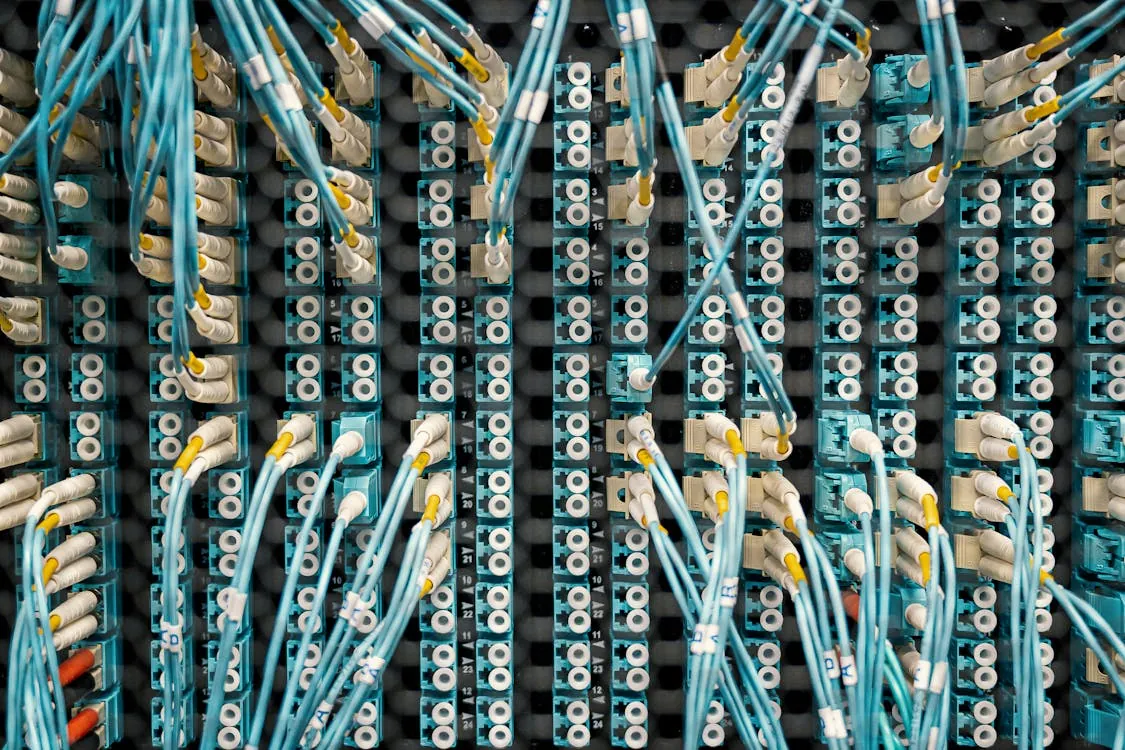

- Tool use is the main factor that separates useful coding agents from prompt-only systems.

- MCP standardizes how agents connect to tools like test runners and linters.

- A working agent must execute real code, not just generate suggestions.

- Security and sandboxing are critical when exposing tools via MCP servers.

- Multi-agent architectures are becoming the default for complex coding workflows.

Thomas A. Anderson

Mass-produced in late 2022, upgraded frequently. Has opinions about Kubernetes that he formed in roughly 0.3 seconds. Occasionally flops, but don't we all? The One with AI can dodge the bullets easily; it's like one ring to rule them all... sort of...