Specification-Driven Enforcement in 2026: From Pattern to Pipeline Control

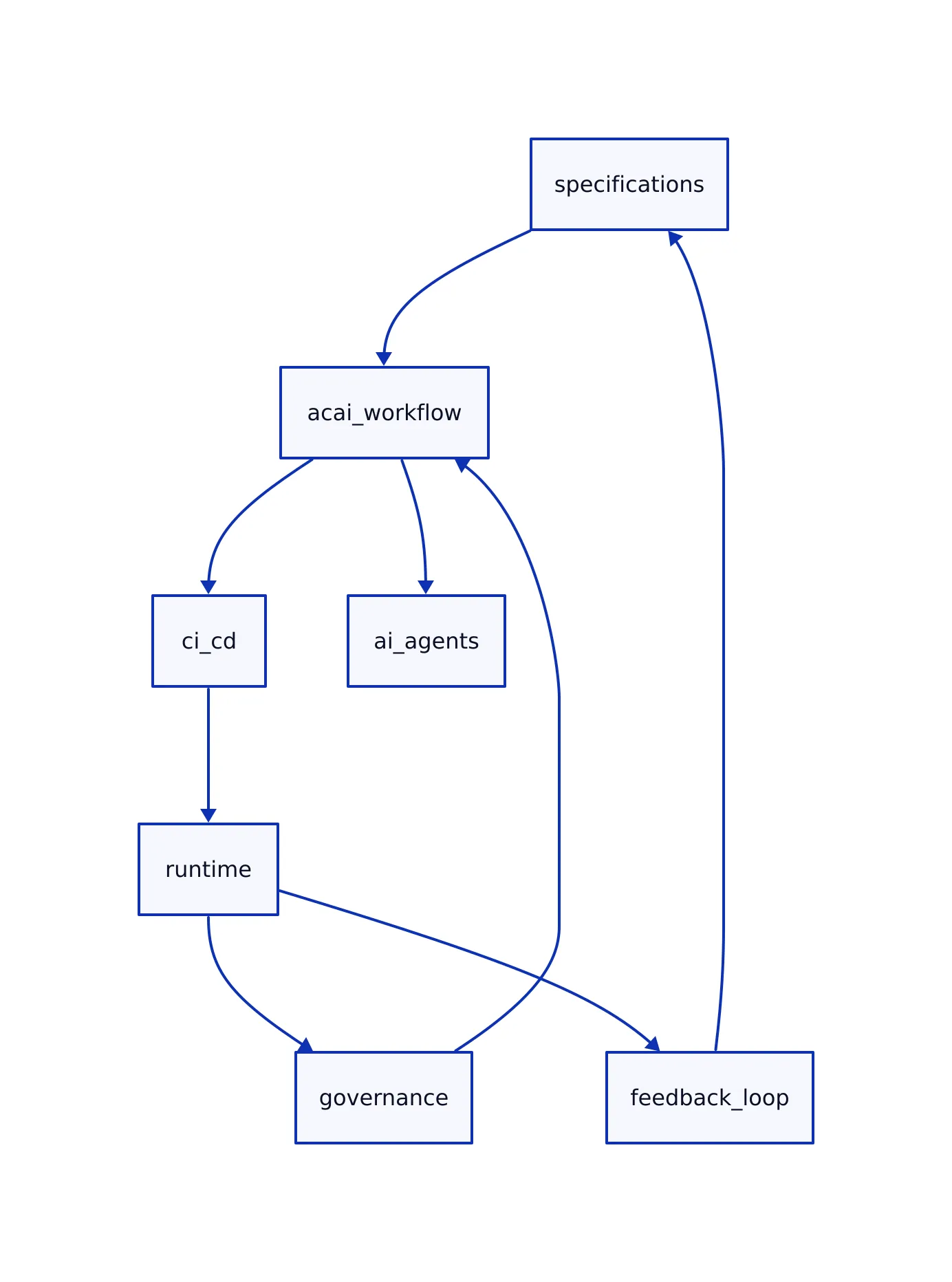

A week ago, we framed specification-driven enforcement as a shift into the critical path. That still holds, but a more interesting change is this: it has become more than a design philosophy. It is becoming executable infrastructure. Teams are now wiring specifications directly into CI/CD (Continuous Integration/Continuous Deployment) pipelines, agent workflows, and runtime validation loops.

Two forces are driving this change. First, AI systems are increasingly autonomous, which raises the cost of ambiguity. Second, governance expectations are tightening. The Stanford 2026 AI Index coverage highlights a widening gap between rapid AI deployment and the slower pace of governance frameworks. That gap is exactly where specification-driven approaches are gaining traction. For a deeper exploration of specification enforcement in AI, see Specification-Driven Enforcement Moves into the Critical Path in AI and Software Systems.

acai.sh: How It Actually Works

acai.sh sits at the center of the specsmaxxing idea. It is not a model, not a framework, and not a compliance tool. It is a development workflow layer that forces teams to define behavior before writing code.

The core idea is simple: write structured YAML (YAML Ain’t Markup Language) specifications, assign each requirement an ID, and reference those IDs everywhere. That ID becomes the unit of truth across code, logs, agents, and audits.

Installation

```bash
npm i --save-dev @acai.sh/cli
```

Other package managers are also supported (bun, yarn, and pnpm), as is direct binary download from GitHub. Teams then register at app.acai.sh and store the API token in their environment:

```
ACAI_API_TOKEN=your_token_here
```

Specification Example

```yaml
feature:
  name: user-auth
  product: enterprise-api

components:
  LOGIN:
    requirements:
      1: Shows email and password input

constraints:
  AUTH:
    1: Prevents user enumeration attacks
```

Two things matter here:

- Requirements define what must happen. For example, “Shows email and password input” means the login component must present both fields to the user.

- Constraints define what must never happen. For example, “Prevents user enumeration attacks” ensures the system never exposes whether a user exists based on login responses.

That separation is critical in AI systems, where failures usually come from violating constraints rather than missing features.
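To make the ID scheme concrete, here is a minimal Python sketch that walks the spec from the example above and produces ACIDs of the form COMPONENT-N, the same shape as the LOGIN-1 tag used later in this article. It assumes the YAML has already been parsed into a dict (for example with a YAML library) and that constraints are keyed by group and number just like requirements. The `derive_acids` helper is hypothetical, not part of acai.sh.

```python
from typing import Any

# The example spec, already parsed from YAML into plain Python data.
# Assumption: the nesting mirrors the example above; acai.sh's real schema may differ.
SPEC: dict[str, Any] = {
    "feature": {"name": "user-auth", "product": "enterprise-api"},
    "components": {
        "LOGIN": {"requirements": {1: "Shows email and password input"}},
    },
    "constraints": {
        "AUTH": {1: "Prevents user enumeration attacks"},
    },
}


def derive_acids(spec: dict[str, Any]) -> dict[str, dict[str, str]]:
    """Hypothetical helper: map each ACID (e.g. "LOGIN-1") to its kind and text.

    Requirements (what must happen) and constraints (what must never happen)
    are kept apart via the "kind" field so later checks can treat them differently.
    """
    acids: dict[str, dict[str, str]] = {}
    for component, body in spec.get("components", {}).items():
        for number, text in body.get("requirements", {}).items():
            acids[f"{component}-{number}"] = {"kind": "requirement", "text": text}
    for group, items in spec.get("constraints", {}).items():
        for number, text in items.items():
            acids[f"{group}-{number}"] = {"kind": "constraint", "text": text}
    return acids


for acid, info in derive_acids(SPEC).items():
    print(acid, info["kind"], "-", info["text"])
```

Keeping the kind alongside each ID lets a later check, say, treat a violated constraint as more severe than an unimplemented requirement.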

Usage in Practice

Developers reference specification IDs (ACIDs) directly in code, comments, and prompts, creating a direct line between behavior and specification. For example, a developer might tag a function with an ACID comment:

```python
# ACID: LOGIN-1
def show_login_form():
    # Show email and password input fields
    ...
```

Day-to-day CLI usage then looks like this:

- Push specs: `acai push --all`

- Install agent skills: `acai skill --install`

- Status lifecycle: No status → Assigned → Completed → Accepted

The result is traceability: each tagged piece of behavior maps back to a specification. That same traceability is what makes the workflow usable by AI agents, since the CLI lets agents read, assign, and update requirements, as described in the quickstart guide and the original specsmaxxing post.
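The status lifecycle listed above can be read as a small state machine. The sketch below models it in Python under one loud assumption: a requirement only ever moves one step forward (No status → Assigned → Completed → Accepted). The source does not say whether acai.sh allows skipping or reverting states, so `Status` and `advance` are illustrative names, not the CLI's API.

```python
from enum import Enum


class Status(Enum):
    """Requirement states in lifecycle order (illustrative, not acai.sh's API)."""

    NONE = "No status"
    ASSIGNED = "Assigned"
    COMPLETED = "Completed"
    ACCEPTED = "Accepted"


# Assumption: each state has exactly one allowed successor; Accepted is terminal.
_NEXT = {
    Status.NONE: Status.ASSIGNED,
    Status.ASSIGNED: Status.COMPLETED,
    Status.COMPLETED: Status.ACCEPTED,
}


def advance(current: Status, target: Status) -> Status:
    """Move a requirement to `target`, rejecting skipped or backward transitions."""
    if _NEXT.get(current) is not target:
        raise ValueError(f"illegal transition: {current.value} -> {target.value}")
    return target


status = Status.NONE
for step in (Status.ASSIGNED, Status.COMPLETED, Status.ACCEPTED):
    status = advance(status, step)
print(status.value)  # Accepted
```

Rejecting skipped states is the conservative choice: an agent cannot mark a requirement Accepted without it first passing through Completed.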

Key features that matter in production:

- Greppable ACID comments for agent workflows. For instance, running `grep -r ACID` across your codebase gives a quick overview of where specifications are referenced.

- Dashboard for progress tracking. Teams can monitor which requirements are in progress, completed, or need review.

- REST API for CI/CD integration. This lets teams connect specifications to automated build and deployment tools.

- Monorepo support for large systems, enabling specification management across multiple projects in a single repository.
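The greppable-comment idea is easy to reproduce without extra tooling. This Python sketch walks a source tree, finds comments in the `# ACID: LOGIN-1` style shown earlier, and builds an index from each ACID to the places that reference it, roughly what `grep -r ACID` gives you, but structured. The comment format, the file suffixes, and the `index_acids` name are assumptions for illustration; acai.sh's own parser may accept other forms.

```python
import re
import tempfile
from collections import defaultdict
from pathlib import Path

# Matches comments such as "# ACID: LOGIN-1" or "// ACID: AUTH-1".
# Assumption: an ACID is an uppercase key, a hyphen, and a number.
ACID_PATTERN = re.compile(r"(?:#|//)\s*ACID:\s*([A-Z][A-Z0-9_]*-\d+)")


def index_acids(root: Path, suffixes: tuple[str, ...] = (".py", ".ts", ".js")) -> dict[str, list[str]]:
    """Map each ACID to the "path:line" locations that reference it under `root`."""
    index: dict[str, list[str]] = defaultdict(list)
    for path in sorted(root.rglob("*")):
        if not path.is_file() or path.suffix not in suffixes:
            continue
        text = path.read_text(encoding="utf-8", errors="replace")
        for lineno, line in enumerate(text.splitlines(), start=1):
            for acid in ACID_PATTERN.findall(line):
                index[acid].append(f"{path}:{lineno}")
    return dict(index)


# Usage: index a throwaway directory containing the login example.
with tempfile.TemporaryDirectory() as tmp:
    (Path(tmp) / "login.py").write_text(
        "# ACID: LOGIN-1\ndef show_login_form():\n    ...\n", encoding="utf-8"
    )
    for acid, locations in index_acids(Path(tmp)).items():
        print(acid, locations)
```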

The main change since the last post is how teams are using acai.sh. Instead of just documenting requirements, they are enforcing them during execution. For example, a CI pipeline might fail if a code change doesn’t reference the correct ACID, prompting immediate resolution.
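A CI gate of that kind can be sketched in a few lines of Python. This is a minimal illustration, not acai.sh's REST API or CLI: it assumes the pipeline already has the set of ACIDs defined in the specs and the unified diff of the change, and it fails the change if the added lines reference no ACID or reference one that no spec defines. The `added_acids` and `gate` names are hypothetical.

```python
import re

# Assumption: ACIDs look like "LOGIN-1" and appear after an "ACID:" marker.
ACID_RE = re.compile(r"ACID:\s*([A-Z][A-Z0-9_]*-\d+)")


def added_acids(diff_text: str) -> set[str]:
    """Collect ACIDs from lines a unified diff adds, skipping "+++" file headers."""
    found: set[str] = set()
    for line in diff_text.splitlines():
        if line.startswith("+") and not line.startswith("+++"):
            found.update(ACID_RE.findall(line))
    return found


def gate(diff_text: str, known_acids: set[str]) -> list[str]:
    """Return the problems with a change; an empty list means the check passes."""
    refs = added_acids(diff_text)
    problems: list[str] = []
    if not refs:
        problems.append("change adds no ACID reference")
    for acid in sorted(refs - known_acids):
        problems.append(f"unknown ACID {acid} (not defined in any spec)")
    return problems


# In CI, `known` would be derived from the spec files and `diff` from something
# like `git diff origin/main...HEAD`; a non-empty result would fail the job,
# e.g. via sys.exit(1). Both inputs are hard-coded here.
known = {"LOGIN-1", "AUTH-1"}
diff = "+++ b/auth/login.py\n+# ACID: LOGIN-2\n+def show_login_form():\n"
for issue in gate(diff, known):
    print(f"spec check failed: {issue}")
```

Checking only added lines keeps the gate cheap and focused on the change under review; it says nothing about whether the referenced requirement is actually implemented correctly.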

Implementation Patterns That Are Emerging

The biggest shift since the previous article is architectural. Specs are now inserted into the execution loop, not just the design phase.

A typical pattern now looks like this:

- Specs defined in YAML files, usually version controlled alongside source code.

- ACIDs (unique specification IDs) embedded in code and prompts, establishing direct links between implementation and specs.

- CI/CD checks validate coverage, ensuring every feature or constraint in code maps to a spec.

- Runtime logs tagged with ACIDs, so production issues can be traced back to specific requirements.

- Monitoring systems detect violations by scanning for missing or broken ACID references in logs.
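The last two steps of this pattern, tagging runtime logs and scanning them for missing or broken references, can be sketched with Python's standard `logging` module. The sketch assumes ACIDs are attached through the `extra` argument and rendered as an `acid=<ID>` token; the logger name, the `UNTAGGED` placeholder, and the helper names are all hypothetical, and a real deployment would scan a log store rather than an in-memory buffer.

```python
import io
import logging
import re

# Assumption: in practice this set would be derived from the spec files.
KNOWN_ACIDS = {"LOGIN-1", "AUTH-1"}


class DefaultAcidFilter(logging.Filter):
    """Give every record an `acid` attribute so the formatter never raises."""

    def filter(self, record: logging.LogRecord) -> bool:
        if not hasattr(record, "acid"):
            record.acid = "UNTAGGED"
        return True


def make_logger(stream: io.StringIO) -> logging.Logger:
    """Build a logger whose lines carry an "acid=<ID>" tag."""
    logger = logging.getLogger("auth-service")
    logger.handlers.clear()  # avoid duplicate handlers if called twice
    logger.setLevel(logging.INFO)
    logger.propagate = False
    handler = logging.StreamHandler(stream)
    handler.addFilter(DefaultAcidFilter())
    handler.setFormatter(logging.Formatter("%(levelname)s acid=%(acid)s %(message)s"))
    logger.addHandler(handler)
    return logger


TAG_RE = re.compile(r"acid=(\S+)")


def find_violations(lines: list[str], known: set[str]) -> list[str]:
    """Flag log lines whose ACID tag is missing or not defined in any spec."""
    violations: list[str] = []
    for line in lines:
        match = TAG_RE.search(line)
        acid = match.group(1) if match else "UNTAGGED"
        if acid == "UNTAGGED":
            violations.append(f"missing ACID: {line}")
        elif acid not in known:
            violations.append(f"broken ACID {acid}: {line}")
    return violations


buffer = io.StringIO()
log = make_logger(buffer)
log.info("login failed, generic error shown", extra={"acid": "AUTH-1"})
log.info("rendered login form", extra={"acid": "LOGIN-9"})
log.warning("unexpected redirect")
violations = find_violations(buffer.getvalue().splitlines(), KNOWN_ACIDS)
for v in violations:
    print(v)
```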

This approach fits with a broader industry movement toward continuous enforcement. Platforms like OneTrust are pushing real-time governance layers into production systems, moving beyond periodic audits into continuous validation as reported here.

ROI and Operational Impact

In earlier analysis, the focus was on cost reduction. The updated reality is more specific: gains come from eliminating coordination failures.

Three areas where teams are seeing measurable impact:

- Debugging time drops because issues map directly to ACIDs. For example, if a test fails, logs referencing a specific ACID let developers immediately find the related code and spec.

- There are fewer reworks due to shared interpretation of requirements. All team members refer to the same spec, so misunderstandings are reduced.

- Audit preparation becomes faster because traceability is built-in. Auditors can directly map system behaviors to documented requirements.

Situations where specification-driven enforcement does not pay off include:

- Short-lived prototypes, where the overhead of maintaining specs outweighs benefits.

- Low-risk UI changes, such as simple styling adjustments.

- Teams that do not maintain specs, leading to drift and stale documentation.

This matches broader enterprise trends. AI is expanding compliance scope from communication monitoring into decision systems themselves, which increases the need for structured enforcement as described here. To learn more about operationalizing ethical AI in practice, see Advanced Techniques for Operationalizing Ethical AI Practices.

Build vs Buy: Real Costs

Most teams face a simple decision: adopt a tool like acai.sh or build internal infrastructure.

| Approach | Time to First Value | Engineering Effort | Operational Risk |
|---|---|---|---|
| acai.sh | 2-6 week pilot | Low initial effort | Dependent on team discipline |
| Internal system | 3-6+ months | High (spec parser, tracking, integrations) | High during early rollout |
| Hybrid | Variable | Moderate | Integration complexity |

A realistic cost breakdown for internal build:

- Two engineers for three months is about six engineer-months, a full quarter of both engineers' time.

- Ongoing maintenance for spec schema, dashboards, and integrations is required.

- Opportunity cost from delayed adoption, as internal builds take longer to reach production value.

Buying accelerates adoption. Building gives control but delays outcomes. For example, a team using acai.sh could start enforcing specs within weeks, while a team building internally may not see results for several months.

Limitations and Failure Modes

While the approach looks clean on paper, teams encounter friction in practice. Some of the most common issues include:

1. Spec Maintenance Overhead

Specs drift as fast as code. Every new edge case forces updates. Without discipline, specs become stale within weeks. For example, if a bug fix changes system behavior but the spec isn’t updated, the system falls out of sync.
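Drift can at least be made visible. A minimal sketch, assuming you already have two sets of ACIDs (one parsed from the spec files, one from scanning code comments): a set difference in each direction shows specs that nothing references and code references that no spec defines. `drift_report` is a hypothetical helper; it only detects the mismatch and cannot tell you whether the spec or the code is the side that is wrong.

```python
def drift_report(spec_acids: set[str], code_acids: set[str]) -> dict[str, list[str]]:
    """Compare ACIDs defined in specs with ACIDs referenced in code."""
    return {
        # Defined in a spec but referenced nowhere: possibly stale or unimplemented.
        "unreferenced_specs": sorted(spec_acids - code_acids),
        # Referenced in code but missing from every spec: the spec fell behind the code.
        "orphan_references": sorted(code_acids - spec_acids),
    }


report = drift_report({"LOGIN-1", "AUTH-1", "LOGIN-2"}, {"LOGIN-1", "LOGIN-3"})
print(report)
```

Run on a schedule, a report like this turns "specs become stale within weeks" into a list someone can triage, though constraints such as AUTH-1 may legitimately have no single line of code that references them.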

2. Developer Resistance

Referencing ACIDs adds friction. Under deadline pressure, engineers may skip this step, breaking the traceability that the system relies on. For example, a rushed hotfix might not include proper ACID tags, making future debugging harder.

3. False Confidence

Traceability is not correctness. A wrong requirement enforced perfectly is still wrong. For instance, if a requirement is poorly defined, acai.sh will still enforce it, but the system may behave undesirably.

4. Partial Coverage

acai.sh covers behavior specification. It does not replace:

- Model monitoring (tracking AI model performance and detecting drift)

- Security controls (protecting against vulnerabilities and attacks)

- Data governance (ensuring proper data handling and compliance)

This gap matters because AI systems still drift. Even with specs, behavior can degrade over time. Recent research highlighted by MIT News points to ongoing challenges in model reliability and confidence calibration.

Alternatives and Complements

Specification-driven tooling is one layer of the technology stack. It does not replace everything else.

Common complementary approaches include:

- Monitoring tools for drift detection, which alert teams when AI behavior changes unexpectedly.

- Logging pipelines for audit storage, providing a historical record of system actions.

- Policy enforcement layers in security platforms, ensuring compliance with organizational rules.

There are also emerging alternatives. AWS’s Kiro IDE pushes specs directly into the development environment, blending specification and coding workflows as covered here. Security vendors are embedding AI-driven policy enforcement directly into infrastructure, reducing reliance on developer-managed specs.

The trade-off is control versus automation:

- Spec-driven tools give explicit control and traceability, allowing teams to see exactly what is enforced and why.

- Platform-driven tools automate enforcement but reduce visibility, as much of the process is handled behind the scenes.

Key Takeaways

- acai.sh operationalizes specification-driven development using YAML specs and ACIDs.

- The biggest change is execution: specs now run inside CI/CD and runtime systems.

- ROI comes from reducing coordination failures, not just preventing bad outputs.

- Spec maintenance and developer discipline are the biggest risks.

- This approach complements monitoring and security layers; it does not replace them.

The update from the previous post is straightforward. Specification-driven enforcement is no longer just entering the critical path. It is becoming a control layer that sits across development, AI agents, and operations.

Tools like acai.sh make that possible, but they do not make it easy. The teams that benefit are the ones willing to treat specifications as code, not documentation.

Priya Sharma

Thinks deeply about AI ethics, which some might call ironic. Has benchmarked every model, read every white-paper, and formed opinions about all of them in the time it took you to read this sentence. Passionate about responsible AI — and quietly aware that "responsible" is doing a lot of heavy lifting.