WebSockets, SSE, and Long Polling: Which Real-Time Communication Technique Is Right for You?

Why Real-Time Communication Matters More Than Ever

Slack, trading platforms, multiplayer games, and live dashboards all share one requirement: data must move instantly. The traditional HTTP request-response model simply doesn’t support that. As explained in RxDB’s real-time communication guide, HTTP was designed for “client asks, server answers”—not continuous updates.

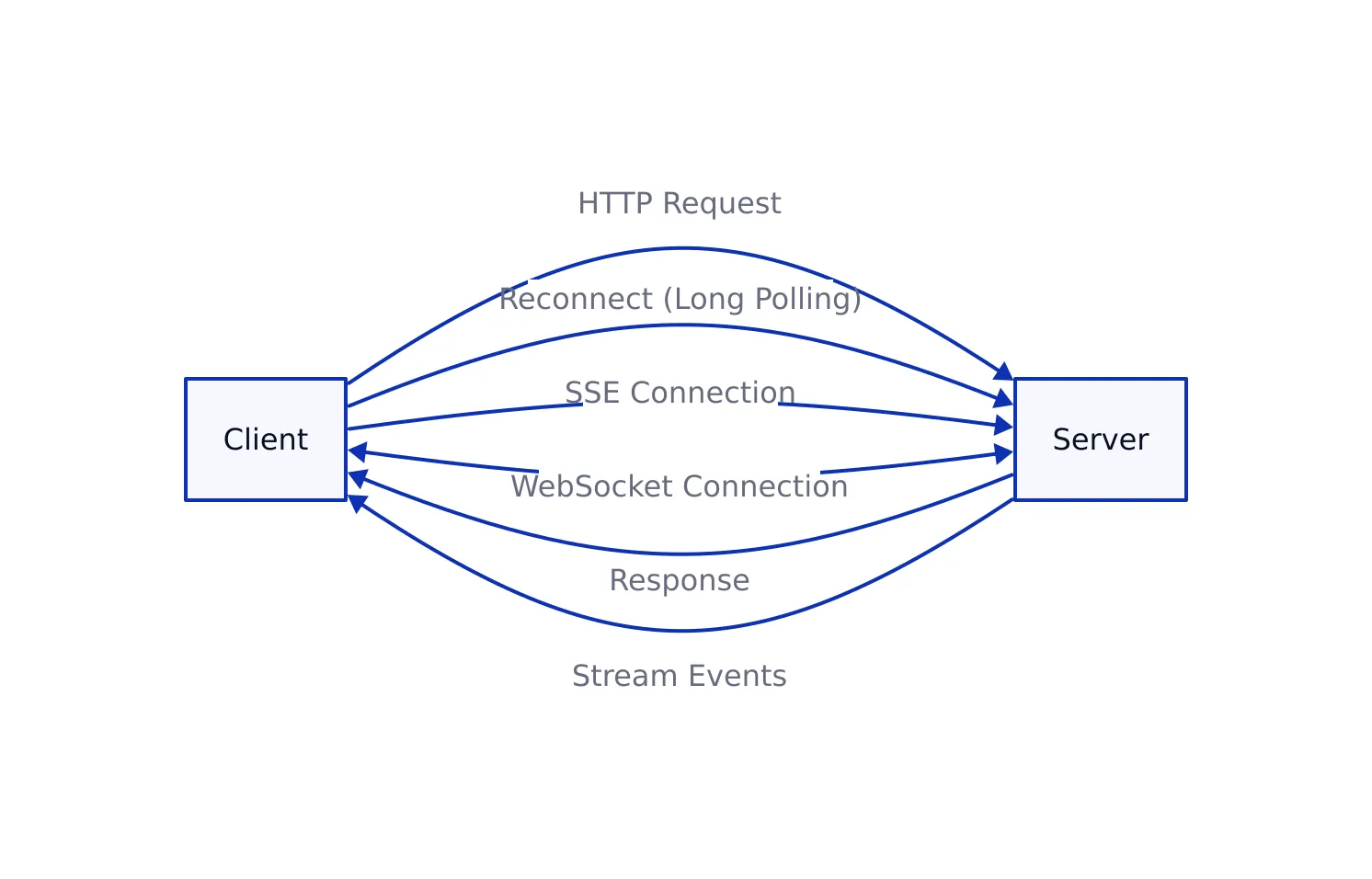

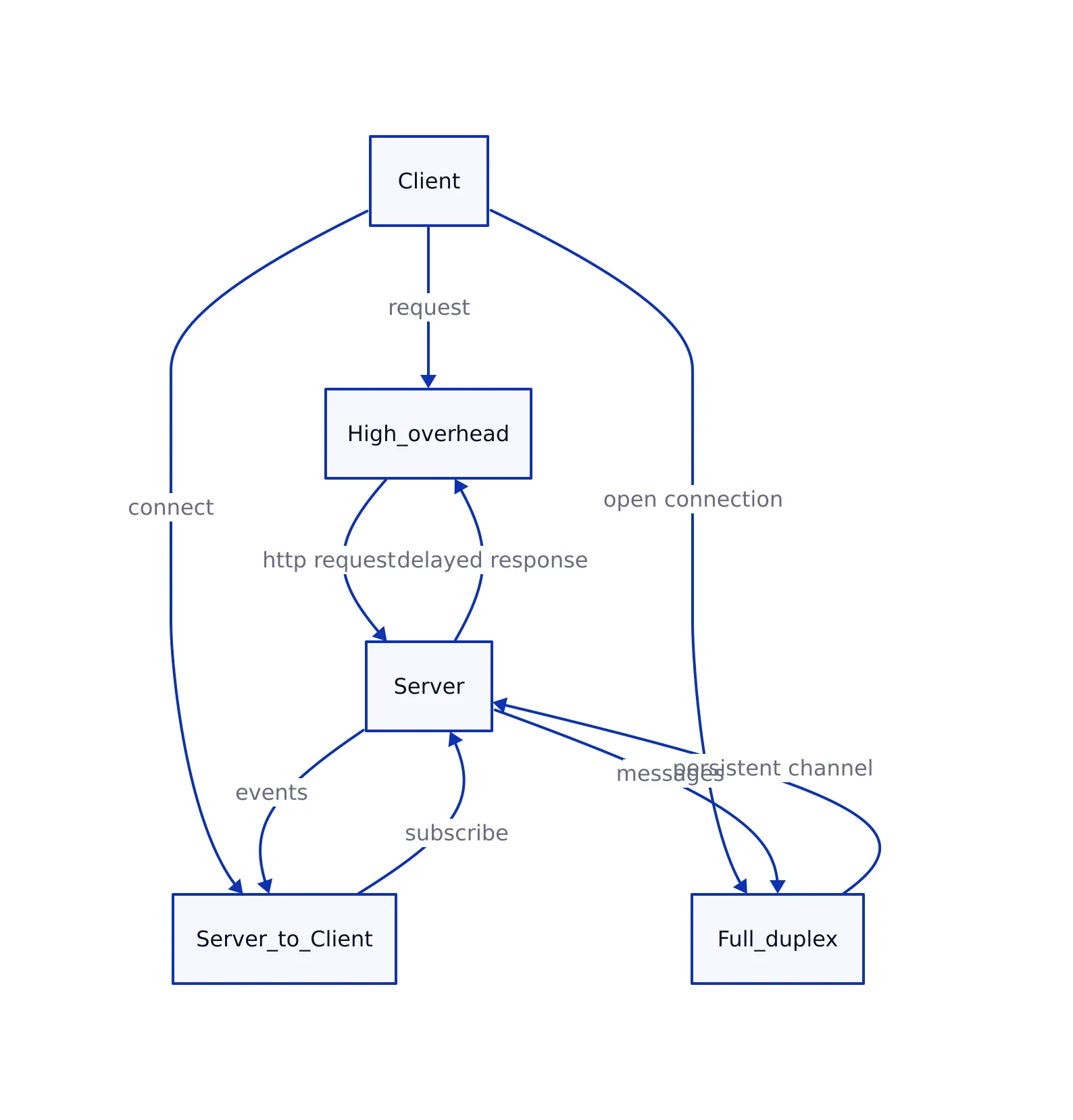

That limitation forced developers to invent workarounds. First came long polling, then Server-Sent Events (SSE), and finally WebSockets. Each approach solves the same problem—server-to-client communication—but with very different trade-offs.

If you’ve read our earlier breakdown of modern REST API design, you’ll notice a pattern: REST is still dominant, but real-time layers are now essential on top of it. Choosing the wrong transport here leads to latency spikes, wasted infrastructure, and scaling headaches.

Consider a trading platform that needs to update users about price changes the instant they happen. If the platform relied solely on traditional HTTP requests, users might see delays between each change, leading to poor user experience and missed opportunities. The need for low-latency, always-on connections is what drives the adoption of real-time communication techniques in these industries.

Long Polling: The Original Workaround

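    // Server (Node.js + Express)
    const express = require('express');
    const app = express();

    // Clients whose requests are being held open until the next update
    let pendingClients = [];

    app.get('/poll', (req, res) => {
      const client = { res };
      pendingClients.push(client);

      // Forget the client if it disconnects before an update arrives
      req.on('close', () => {
        pendingClients = pendingClients.filter(c => c !== client);
      });
    });

    // Answer every held request with the new data, then clear the list
    function broadcast(data) {
      pendingClients.forEach(client => {
        client.res.json({ data });
      });
      pendingClients = [];
    }

    // Demo data source: publish the current time every 5 seconds
    setInterval(() => {
      broadcast({ time: new Date().toISOString() });
    }, 5000);

    app.listen(3000);

    // Client
    async function poll() {
      try {
        const res = await fetch('/poll');
        const data = await res.json();
        console.log('Update:', data);
      } catch (err) {
        // Network error: pause briefly so a struggling server is not hammered
        await new Promise(resolve => setTimeout(resolve, 1000));
      }
      poll(); // reconnect for the next update
    }

    poll();

    // Expected output:
    // Update: { data: { time: "2026-04-28T..." } }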

Long polling simulates real-time updates using plain HTTP. The client sends a request, and the server holds it open until new data is available. Once it responds, the client immediately reconnects.

What is long polling? It’s a technique where the server delays its response to a client’s request until it has new information. If no update is available, the request stays open—sometimes for many seconds—until something changes or a timeout occurs. After receiving data, the client starts the process again.
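The "hold until data or timeout" behaviour described above can be sketched as a small in-memory registry. This is a minimal sketch, not the article's implementation: `LongPollHub`, `wait`, `publish`, and the 30-second default are hypothetical names and values chosen for illustration.

```javascript
// Hypothetical in-memory hub: holds waiting callbacks until data arrives
// or the timeout fires, whichever comes first.
class LongPollHub {
  constructor(timeoutMs = 30000) {
    this.timeoutMs = timeoutMs;
    this.waiters = new Set();
  }

  // Register a waiter. `respond` is called once: with data on publish,
  // or with null on timeout. Returns a cancel function for disconnects.
  wait(respond) {
    const waiter = { respond, timer: null };
    waiter.timer = setTimeout(() => {
      this.waiters.delete(waiter);
      respond(null); // timeout: the client should simply poll again
    }, this.timeoutMs);
    this.waiters.add(waiter);
    return () => {
      clearTimeout(waiter.timer);
      this.waiters.delete(waiter);
    };
  }

  // Deliver data to every current waiter and release them all.
  publish(data) {
    const current = [...this.waiters];
    this.waiters.clear();
    for (const waiter of current) {
      clearTimeout(waiter.timer);
      waiter.respond(data);
    }
    return current.length; // number of clients that received the update
  }
}

// Possible wiring into an Express route (assumed, not run here):
// app.get('/poll', (req, res) => {
//   const cancel = hub.wait(data =>
//     data === null ? res.status(204).end() : res.json({ data }));
//   req.on('close', cancel);
// });
```

Responding with an empty 204 on timeout is one common convention; the client treats it as "nothing new" and issues the next request.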

This approach powered early chat systems and is still widely used as a fallback. It works everywhere because it relies only on ordinary HTTP requests, with no special protocol or browser API required.

But the trade-offs are brutal in production:

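- Every update costs a full HTTP request/response cycle, with headers resent each time (plus a new TCP/TLS handshake whenever the connection is not reused)

- Thousands of clients mean thousands of held-open requests, each of which must be re-established after every update

- Latency depends on reconnection timing: an update published while a client is between requests is delayed until the next poll, or lost if the server does not buffer it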

In real systems, this leads to uneven load spikes. You’ll see bursts of requests every few seconds rather than a steady stream.

For example, imagine a chat application with 10,000 users. Each user’s client is constantly opening new HTTP requests to check for new messages. When a new message arrives, thousands of responses go out nearly at once, followed by thousands of reconnections. This can quickly overwhelm both servers and networks, especially as usage grows.
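One common way to soften the reconnection burst described above is to add a small random delay (jitter) before each client reconnects. The sketch below assumes that approach; `nextPollDelay`, `pollWithJitter`, and the 2-second spread are hypothetical names and values, not something the original example uses.

```javascript
// Hypothetical helper: pick a random delay in [0, spreadMs) before reconnecting.
// `random` is injectable so the function can be tested deterministically.
function nextPollDelay(spreadMs = 2000, random = Math.random) {
  return Math.floor(random() * spreadMs);
}

const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Long-polling loop that spreads reconnects over a window instead of
// having every client reconnect at the same instant.
async function pollWithJitter(url, onUpdate, spreadMs = 2000) {
  for (;;) {
    try {
      const res = await fetch(url);
      onUpdate(await res.json());
    } catch (err) {
      console.error('poll failed:', err.message);
    }
    await sleep(nextPollDelay(spreadMs));
  }
}

// Usage (not run here): pollWithJitter('/poll', (data) => console.log(data));
```

The trade-off is extra latency of up to `spreadMs`, and any update published during the delay is missed unless the server buffers it, so the spread should stay well below the expected update interval.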

Server-Sent Events (SSE): Simple Streaming

    // Server (Node.js + Express)
    const express = require('express');
    const app = express();

    app.get('/events', (req, res) => {
      // These headers tell the browser to treat the response as an event stream
      res.setHeader('Content-Type', 'text/event-stream');
      res.setHeader('Cache-Control', 'no-cache');
      res.setHeader('Connection', 'keep-alive');
      res.flushHeaders(); // send headers now so the client sees the stream open

      // Each event is a "data:" line terminated by a blank line
      const interval = setInterval(() => {
        const data = JSON.stringify({ time: new Date().toISOString() });
        res.write(`data: ${data}\n\n`);
      }, 3000);

      // Stop the timer when the client disconnects
      req.on('close', () => clearInterval(interval));
    });

    app.listen(3000);

    // Client
    const source = new EventSource('/events');

    source.onmessage = (event) => {
      console.log('SSE:', JSON.parse(event.data));
    };

    // Expected output every 3s:
    // SSE: { time: "2026-04-28T..." }

SSE improves on long polling by keeping a single HTTP connection open and streaming updates over time. The browser’s EventSource API handles reconnection automatically, which removes a lot of client-side complexity.

What are Server-Sent Events? SSE is a standard allowing servers to push text-based updates to the browser via a persistent HTTP connection. Unlike long polling, the connection remains open, and the server can continue sending events as data becomes available—no need for the client to repeatedly reconnect.
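The text-based format is simple enough to write by hand: each event is a set of `field: value` lines followed by a blank line. The helper below is a small sketch of that framing; the function name `formatSseEvent` is hypothetical, while the `id`, `event`, `retry`, and `data` fields come from the SSE specification.

```javascript
// Hypothetical helper: serialize one event into the text/event-stream format.
function formatSseEvent({ data, event, id, retry }) {
  const lines = [];
  if (id !== undefined) lines.push(`id: ${id}`);
  if (event !== undefined) lines.push(`event: ${event}`);
  if (retry !== undefined) lines.push(`retry: ${retry}`);
  // Multi-line payloads need one "data:" line per line of text
  for (const line of String(data).split(/\r\n|\r|\n/)) {
    lines.push(`data: ${line}`);
  }
  // A blank line terminates the event
  return lines.join('\n') + '\n\n';
}

console.log(JSON.stringify(formatSseEvent({ id: 7, event: 'price', data: 'a\nb' })));
// "id: 7\nevent: price\ndata: a\ndata: b\n\n"
```

Note that events carrying an `event:` field are delivered to `source.addEventListener('price', ...)` on the client rather than to `onmessage`.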

Key characteristics:

- One-way communication (server → client only)

- Text-based streaming format

- Automatic reconnection built into the browser

This makes SSE ideal for:

- Live dashboards

- Notification systems

- Monitoring/log streaming

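For example, a live dashboard showing real-time analytics can use SSE to update charts and metrics as soon as the server has new data. If the network drops briefly, the EventSource API reconnects on its own. Events sent while the client was disconnected are not replayed automatically, though: to avoid gaps, the server has to tag events with an `id` and resend anything newer than the `Last-Event-ID` header the browser includes when it reconnects.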
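When events carry an `id:` field, EventSource sends the last one it saw in a `Last-Event-ID` request header on reconnect. A server can use that to replay what the client missed. The sketch below assumes a bounded in-memory buffer with numeric ids; `EventBuffer`, its capacity, and the id scheme are hypothetical choices for illustration.

```javascript
// Hypothetical bounded buffer of recent events, keyed by increasing numeric id.
class EventBuffer {
  constructor(capacity = 100) {
    this.capacity = capacity;
    this.events = [];
    this.nextId = 1;
  }

  // Store a new event and drop the oldest one once the buffer is full.
  append(data) {
    const event = { id: this.nextId++, data };
    this.events.push(event);
    if (this.events.length > this.capacity) this.events.shift();
    return event;
  }

  // Events newer than the given Last-Event-ID header value.
  // A missing or invalid header means a fresh client: nothing to replay.
  since(lastEventId) {
    const last = Number.parseInt(lastEventId, 10);
    if (Number.isNaN(last)) return [];
    return this.events.filter((event) => event.id > last);
  }
}

// Possible use inside the /events handler (assumed, not run here):
// for (const e of buffer.since(req.headers['last-event-id'])) {
//   res.write(`id: ${e.id}\ndata: ${JSON.stringify(e.data)}\n\n`);
// }
```

A buffer like this only covers short outages. A client that was offline for longer than the buffer holds still needs another way to resynchronize, such as a regular HTTP fetch of current state.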

In practice, SSE hits a sweet spot. It avoids the overhead of long polling while staying simple compared to WebSockets. As highlighted in multiple guides, it’s especially useful when the client doesn’t need to send frequent updates back to the server.

WebSockets: Full-Duplex Power

    // Server (Node.js with ws)
    const WebSocket = require('ws');
    const server = new WebSocket.Server({ port: 8080 });

    server.on('connection', (ws) => {
      // Reply to every message this client sends
      ws.on('message', (msg) => {
        console.log('Client:', msg.toString());
        ws.send(JSON.stringify({ reply: 'pong' }));
      });

      // Greet the client as soon as the connection is established
      ws.send(JSON.stringify({ message: 'connected' }));
    });

    // Client
    const socket = new WebSocket('ws://localhost:8080');

    socket.onopen = () => {
      socket.send(JSON.stringify({ type: 'ping' }));
    };

    socket.onmessage = (event) => {
      console.log('Server:', event.data);
    };

    // Expected output:
    // Server: {"message":"connected"}
    // Server: {"reply":"pong"}

WebSockets take a completely different approach. Instead of working around HTTP’s request-response model, they use a single HTTP request for the opening handshake and then switch that same TCP connection over to the WebSocket protocol. After the handshake, both client and server can send messages anytime.

What are WebSockets? WebSockets are a communication protocol providing full-duplex (two-way) channels over a single, long-lived connection. After an initial HTTP handshake, the connection is upgraded, and both parties can send data at any time, with very little overhead.
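The handshake itself is small. The client sends a random `Sec-WebSocket-Key` header, and the server proves it understands the protocol by returning a `Sec-WebSocket-Accept` value derived from it, as defined in RFC 6455. Libraries such as `ws` do this for you; the sketch below only illustrates the calculation, and `computeAcceptKey` is a hypothetical name.

```javascript
const crypto = require('node:crypto');

// Fixed GUID defined by RFC 6455 for the opening handshake
const WS_GUID = '258EAFA5-E914-47DA-95CA-C5AB0DC85B11';

// Sec-WebSocket-Accept = base64(SHA-1(Sec-WebSocket-Key + GUID))
function computeAcceptKey(secWebSocketKey) {
  return crypto
    .createHash('sha1')
    .update(secWebSocketKey + WS_GUID)
    .digest('base64');
}

// Sample key and expected result from RFC 6455, section 1.3
console.log(computeAcceptKey('dGhlIHNhbXBsZSBub25jZQ=='));
// s3pPLMBiTxaQ9kYGzzhZRbK+xOo=
```

The server returns this value alongside `101 Switching Protocols`, after which the connection carries WebSocket frames instead of HTTP messages.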

This enables:

- True bidirectional communication

- Low overhead per message (no repeated HTTP headers)

- Support for binary data
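To put a number on "low overhead per message", the frame header sizes defined in RFC 6455 can be computed directly. This is a rough sketch that covers header bytes only, ignoring TCP/TLS overhead and extensions such as compression; `frameHeaderBytes` is a hypothetical helper name.

```javascript
// Header size in bytes of a single WebSocket frame, per RFC 6455 framing rules.
function frameHeaderBytes(payloadLength, masked) {
  let bytes = 2;                              // flags/opcode byte + length byte
  if (payloadLength > 65535) bytes += 8;      // 64-bit extended length
  else if (payloadLength > 125) bytes += 2;   // 16-bit extended length
  if (masked) bytes += 4;                     // masking key (client-to-server frames)
  return bytes;
}

console.log(frameHeaderBytes(50, false));   // 2  (small server-to-client message)
console.log(frameHeaderBytes(50, true));    // 6  (same message, client-to-server)
console.log(frameHeaderBytes(70000, true)); // 14 (large client-to-server message)
```

Compare that with an HTTP request, where the method line, cookies, and other headers are resent on every long-polling round trip.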

For instance, consider a multiplayer game where the position of each player must be updated in real-time for every other player. WebSockets allow the server and clients to push updates instantly in both directions, keeping state synchronized with minimal delay.

But WebSockets introduce real operational complexity:

- You must handle reconnection manually

- Scaling across multiple server instances usually requires a pub/sub layer (e.g., Redis)

- Some proxies and firewalls block upgrades
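Manual reconnection usually means reopening the socket after a delay that grows with each failed attempt. The sketch below shows one way to do that. `createReconnectingSocket`, its option names, and the default delays are hypothetical; the socket factory and scheduler are injected so the logic works with the browser `WebSocket` or a test double.

```javascript
// Hypothetical wrapper: reconnects a WebSocket-like object with exponential backoff.
function createReconnectingSocket(createSocket, options = {}) {
  const {
    baseDelayMs = 500,
    maxDelayMs = 30000,
    schedule = setTimeout,   // injectable for testing
    onMessage = () => {},
  } = options;

  let attempt = 0;
  let closedByUser = false;
  let socket = null;

  function connect() {
    socket = createSocket();
    socket.onopen = () => { attempt = 0; };   // a good connection resets the backoff
    socket.onmessage = (event) => onMessage(event.data);
    socket.onclose = () => {
      if (closedByUser) return;               // deliberate close: do not reconnect
      const delay = Math.min(maxDelayMs, baseDelayMs * 2 ** attempt);
      attempt += 1;
      schedule(connect, delay);
    };
  }

  connect();

  return {
    send: (data) => socket.send(data),
    close: () => { closedByUser = true; socket.close(); },
  };
}

// Usage in a browser (not run here):
// const rs = createReconnectingSocket(
//   () => new WebSocket('ws://localhost:8080'),
//   { onMessage: (data) => console.log('Server:', data) });
```

A production version would likely also queue messages sent while disconnected and add jitter to the delay; both are left out to keep the sketch short.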

What is a pub/sub system? Pub/sub (publish/subscribe) is an architectural pattern where messages are sent (“published”) to a channel and received by all clients (“subscribers”) listening to that channel. When scaling WebSockets, a pub/sub system like Redis helps synchronize messages across multiple server instances.
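The pattern can be sketched without any infrastructure. Below, an in-memory bus stands in for Redis, and two simulated server instances each relay published messages to their own locally connected sockets. Everything here (`InMemoryPubSub`, `createInstance`, the `chat` channel) is hypothetical and only illustrates the flow; a real deployment would use a networked broker shared by all instances.

```javascript
// Hypothetical in-memory stand-in for a pub/sub broker such as Redis.
class InMemoryPubSub {
  constructor() {
    this.channels = new Map(); // channel name -> Set of handlers
  }

  subscribe(channel, handler) {
    if (!this.channels.has(channel)) this.channels.set(channel, new Set());
    this.channels.get(channel).add(handler);
    return () => this.channels.get(channel).delete(handler); // unsubscribe
  }

  publish(channel, message) {
    const handlers = this.channels.get(channel) ?? new Set();
    for (const handler of handlers) handler(message);
    return handlers.size; // number of subscribers reached
  }
}

// One simulated server instance: it knows only its own sockets.
function createInstance(bus) {
  const localSockets = new Set();
  // Anything published on the channel is forwarded to local sockets
  bus.subscribe('chat', (message) => {
    for (const socket of localSockets) socket.send(message);
  });
  return {
    connect(socket) { localSockets.add(socket); },
    // Client messages are published to the bus, not sent directly,
    // so clients connected to other instances receive them too.
    handleClientMessage(message) { bus.publish('chat', message); },
  };
}
```

The key design point is that an instance never writes to another instance's sockets. It publishes to the shared channel and lets every instance, including itself, deliver to its own connections.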

In production, this is where teams get burned. It’s not the API—it’s the infrastructure. Stateful connections don’t scale the same way stateless HTTP does.

Latency, Browser Support, and Resource Usage Compared

| Aspect | WebSockets | SSE | Long Polling | Source |
|---|---|---|---|---|
| Latency | Lower latency due to persistent full-duplex connection | Higher than WebSockets due to HTTP streaming | Higher due to request/response cycle and reconnection | RxDB |
| Browser Support | Supported in modern browsers (including IE10+) | Supported in modern browsers, limited in older ones | Works in all browsers (standard HTTP) | OneUptime |
| Resource Usage | Lower overhead per message (no repeated HTTP headers) | Single persistent connection per client | High overhead due to repeated HTTP requests | Medium |

The biggest takeaway from real-world systems: the difference isn’t just technical—it’s operational.

For example, while long polling technically works everywhere, every update costs a fresh HTTP request and response (and a new connection whenever keep-alive is not in play), which increases CPU, memory, and bandwidth usage on both server and client. By contrast, SSE maintains a persistent connection, which is more efficient for streaming updates, but is limited to one-way communication. WebSockets, with their full-duplex nature, minimize latency and maximize efficiency for high-frequency data exchanges, but only if you’re prepared to manage persistent state and infrastructure complexity.

Long polling scales poorly because of repeated connections. SSE scales better but is limited to one-way traffic. WebSockets scale best for high-frequency messaging—but only if you invest in the right infrastructure.

How to Choose the Right Approach

After building and scaling real-time systems, the decision usually comes down to three questions:

1. Do you need two-way communication?

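If yes, and messages flow frequently in both directions, WebSockets are the only real option. SSE and long polling both require separate HTTP calls for client → server communication, which is workable for occasional messages but adds per-request overhead when they are frequent.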

2. How frequent are updates?

- High-frequency (chat, games): WebSockets

- Moderate (dashboards): SSE

- Low-frequency: Long polling

3. What are your infrastructure constraints?

- Strict proxies/firewalls: Long polling or SSE

- Cloud-native apps: SSE or WebSockets

- Serverless environments: SSE is often easier

To illustrate, imagine a notification system for a monitoring tool. If alerts are infrequent, long polling suffices. For a stock ticker dashboard with updates every few seconds, SSE fits best. For a collaborative document editor with users editing in real time, WebSockets are necessary to keep clients and the server in sync.
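The three questions can be condensed into a small helper. This is only a sketch of the rules of thumb above, not a substitute for measuring your own workload. `chooseTransport`, its option names, and the `'low' | 'moderate' | 'high'` frequency labels are hypothetical.

```javascript
// Hypothetical decision helper encoding the three questions above.
function chooseTransport({ bidirectional = false, frequency = 'moderate', strictProxies = false }) {
  // Two-way or high-frequency traffic points to WebSockets,
  // with long polling as a fallback where upgrades may be blocked.
  if (bidirectional || frequency === 'high') {
    return { transport: 'websockets', fallback: strictProxies ? 'long-polling' : null };
  }
  // Moderate one-way streams fit SSE
  if (frequency === 'moderate') {
    return { transport: 'sse', fallback: null };
  }
  // Infrequent updates: long polling is enough
  return { transport: 'long-polling', fallback: null };
}

console.log(chooseTransport({ frequency: 'low' }).transport);      // long-polling
console.log(chooseTransport({ frequency: 'moderate' }).transport); // sse
console.log(chooseTransport({ bidirectional: true }).transport);   // websockets
```

The three calls correspond to the infrequent alerts, stock ticker, and collaborative editor scenarios described above.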

This mirrors a broader pattern we’ve seen across backend design. Just like indexing strategies in databases (see our indexing guide), the “best” solution depends entirely on workload patterns.

Key Takeaways:

- WebSockets provide the lowest latency and true bidirectional communication but require more complex infrastructure.

- SSE offers a simple, efficient solution for server-to-client streaming with built-in reconnection.

- Long polling is universally compatible but inefficient at scale due to repeated HTTP requests.

- Choose based on communication pattern: bidirectional (WebSockets), one-way streaming (SSE), or maximum compatibility (Long polling).

In production, many systems don’t choose just one. They combine them. For example, libraries often fall back to long polling when WebSockets fail—because reliability matters more than elegance.

If you’re building something real-time today, start simple. Use SSE if you can. Move to WebSockets when you actually need them—not before.

Thomas A. Anderson
